Do you trust AI more than humans?

I noticed an interesting pattern in my surroundings:

People are very sensitive about their data (GDPR, etc.)

But the same people are willing to share their health issues, partner problems, intimate relationships, etc., with an LLM.

ChatGPT becomes a therapist.

Why do people trust AI so much, even though they are uncomfortable sharing sensitive data?

I understand that AI can be more tolerant in its answers and create a certain sense of security, but it is still a system that can be hacked.

Aren't we sharing too much with artificial intelligence?

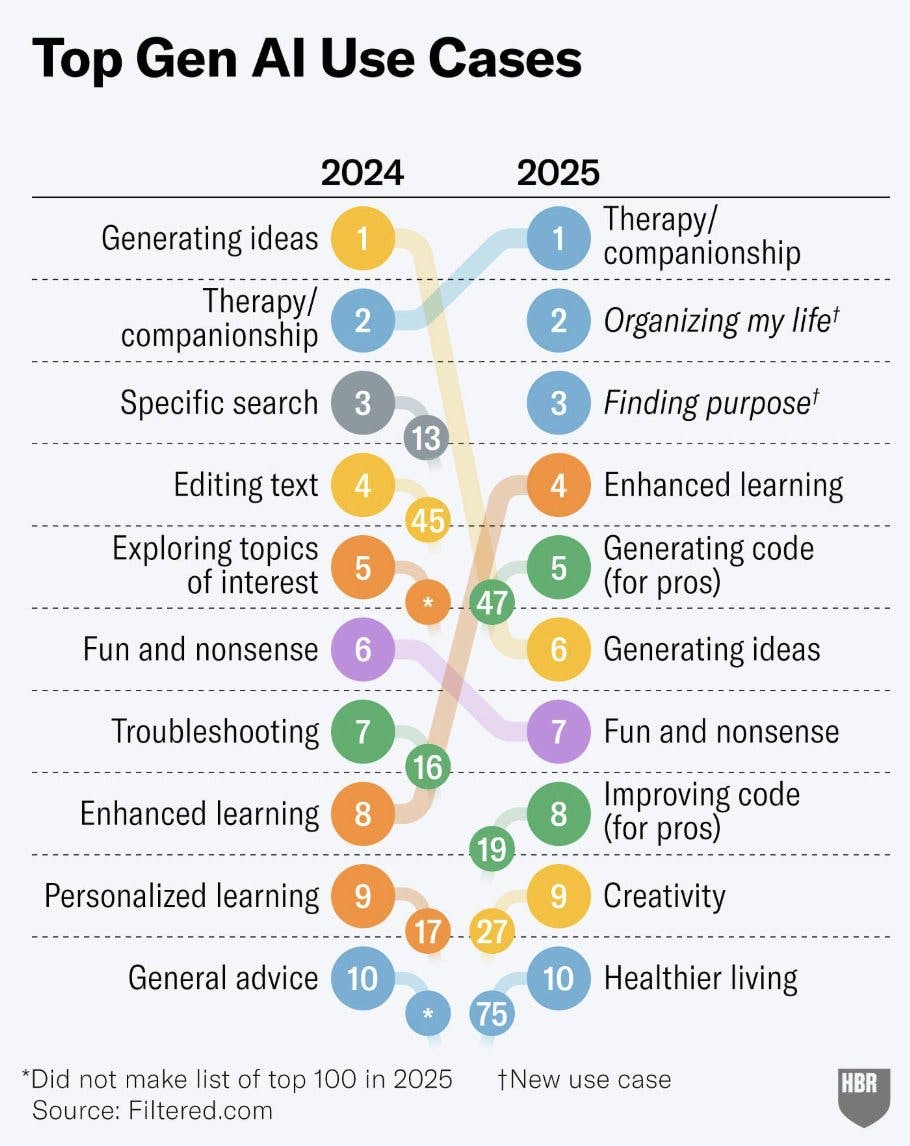

The last thing I am sharing – the infographic by Harvard Business Review on how we started using AI in 2025 compared to 2024:

Replies

YouMind

I don't think the question itself has an answer. Whether it's a human or AI, we need to make subjective judgments and cross-validate when acquiring information; otherwise it's worthless.

minimalist phone: creating folders

@hi_caicai What do you prefer? To collaborate with AI or not? I think that this one can have a clear answer :)

YouMind

Of course I like working with AI. You just need to be clear about its capabilities.

AINave

It's an interesting paradox. From my perspective, I see AI primarily as an information aggregator and don't personally view it as a substitute for human emotional support.

However, I can tell you that Data Privacy is just a myth. We are all willingly or unwillingly giving away our data to all major companies in the world. So, to me, sharing with an LLM doesn't feel significantly different or riskier than other online activities.

minimalist phone: creating folders

@ramitkoul I saw this image on Twitter: https://x.com/sergeynazarovx/status/1927692919835664870

Your words reminded me of this post :D

Product Hunt

Well, based on that infographic, I use AI very differently than most people haha. I'd say my usage is 95% data/coding related and writing cleanup/brainstorm. For anything personal, I'm definitely on team human.

minimalist phone: creating folders

@jakecrump I think that you got it right. Data-related things are good to abstract from (or thanks to) ChatGPT.

On the other hand, I feed ChatGPT with personal data, so I am a good sample for your surveys/experiments/data abstraction. :D The universe is balanced.

It's a long debate, but I still prefer to use remote staffing to manage AI tasks, so humans and AI are totally different subjects in some fields.

I reckon it's a combination of the lack of consequences when over-sharing with a third party (the faceless chat aspect also contributes to that - it's easier to divulge private info over a phone call than with cameras on) and the speed of response that validates the situation while offering some break to the paralysis or thought spiral.

minimalist phone: creating folders

@ranahmbg Probably. I have noticed one pattern (at least in myself): the better I know a person, the more embarrassed I feel about sharing something private.

Pokecut

While AI might feel like a safe confidant, it’s really better suited for crunching data and tackling practical tasks than for handling our messy, deeply human emotions. For those personal, raw moments, humans still hold the edge, with all our flaws and warmth intact.