TuneSalon AI - I built a no-code fine-tuning platform and benchmarked it. Here's everything.

Hello! I don't have a developer background. But I got obsessed with fine-tuning and couldn't find a tool that let me do it without writing code. So I built one.

TuneSalon AI lets you fine-tune open-source models without writing a single line of code. Upload your data, pick a model, hit train. It has a built-in dataset generator, chat with your fine-tuned model, GGUF export, and a marketplace to share or sell your adapters. There's a website that uses cloud GPUs, so you don't need a GPU of your own, and a desktop app that runs fully local on your own hardware. The desktop app is completely free and open source. I also wanted to actually prove that fine-tuning works, so I ran proper benchmarks.

The Benchmark

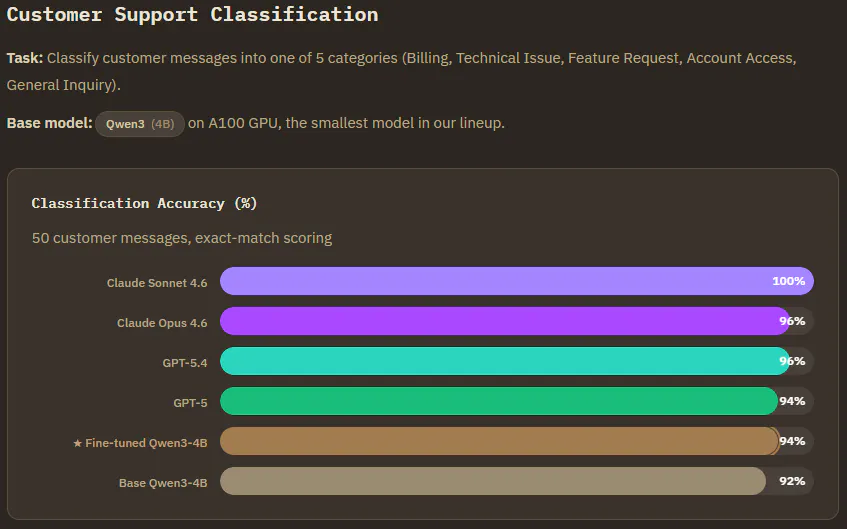

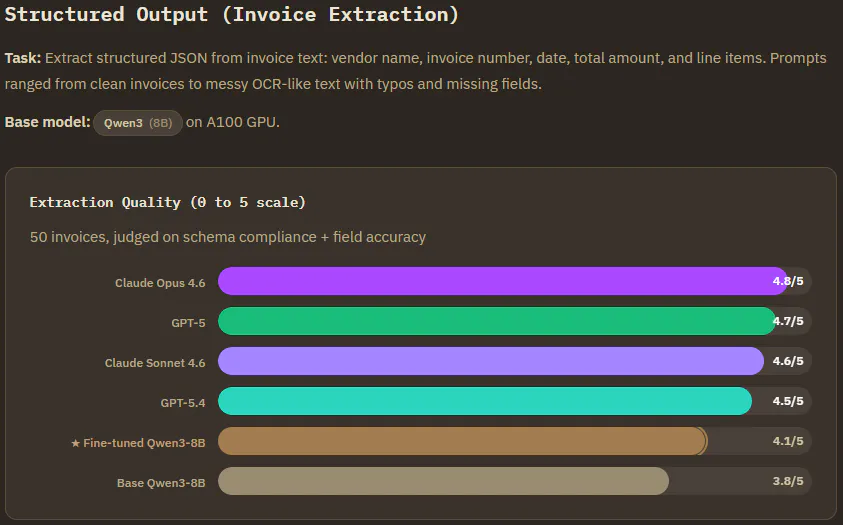

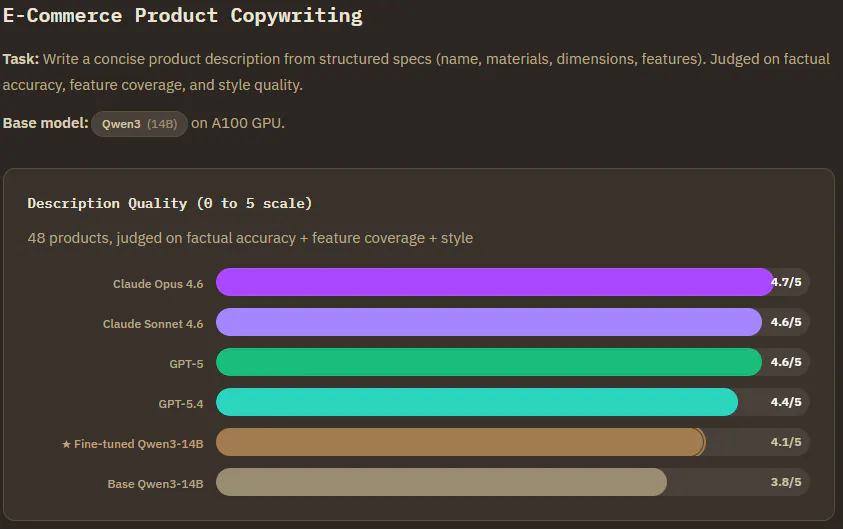

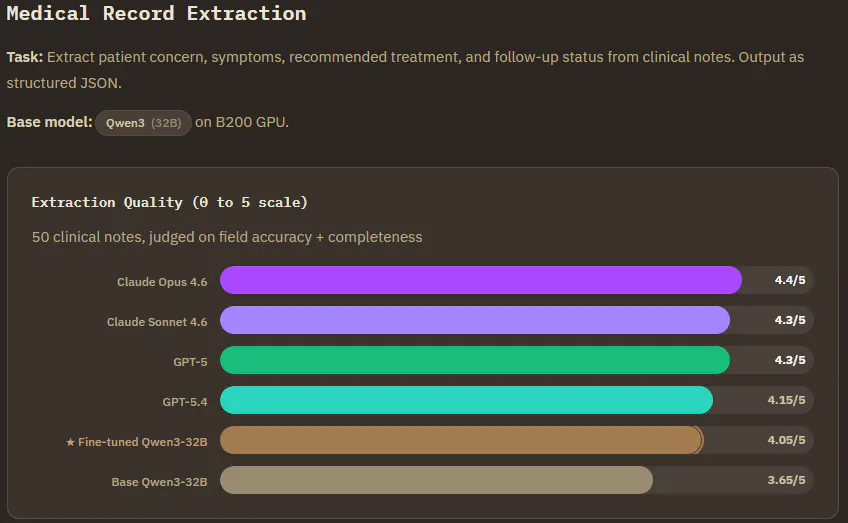

I tested fine-tuned Qwen3 models (4B to 32B) against frontier models (Claude, ChatGPT) and base Qwen3 on general tasks, 250 prompts total. All fine-tuned models were trained with 500 examples, 3 epochs, LoRA rank 16. I used Perplexity to create the prompts and to judge the outputs independently. The observations below are based on Perplexity's evaluation.
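For anyone wondering what "LoRA rank 16" means in practice, here's a rough sketch of the arithmetic. For each adapted weight matrix, LoRA trains two small matrices instead of the full one, which adds rank * (d_in + d_out) parameters. The layer size and the batch size below are assumptions picked for illustration, not the real Qwen3 shapes or TuneSalon's settings.

```python
import math

def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix."""
    # LoRA learns A (rank x d_in) and B (d_out x rank); the base weight stays frozen.
    return rank * (d_in + d_out)

def optimizer_steps(num_examples: int, epochs: int, batch_size: int) -> int:
    """Optimizer steps for a run, assuming no gradient accumulation."""
    return math.ceil(num_examples / batch_size) * epochs

# Hypothetical 4096 x 4096 projection layer (illustrative, not a real Qwen3 shape).
adapter_params = lora_param_count(d_in=4096, d_out=4096, rank=16)
full_params = 4096 * 4096

# 500 examples and 3 epochs come from the post; batch size 8 is an assumption.
steps = optimizer_steps(num_examples=500, epochs=3, batch_size=8)

print(f"adapter params: {adapter_params} ({adapter_params / full_params:.2%} of the full matrix)")
print(f"optimizer steps: {steps}")
```

Under those assumptions the adapter for that one layer is under 1% of the full matrix, which is why the trained adapters stay small enough to share.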

Customer Support Classification (Qwen3-4B) : The improvement over the base model was small but definite. It showed up in edge cases: the base model confused "Account Access" with "Technical Issue" and kept mislabelling feature requests. Fine-tuning fixed those.
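If you want to spot this kind of label confusion in your own classifier, a minimal sketch is to count the (expected, predicted) pairs that disagree. The labels below are made up for illustration and are not data from the benchmark, which was judged by Perplexity rather than by a script like this.

```python
from collections import Counter

def confusion_pairs(expected, predicted):
    """Count (expected, predicted) pairs where the model picked the wrong label."""
    return Counter((e, p) for e, p in zip(expected, predicted) if e != p)

def accuracy(expected, predicted):
    """Share of examples where the predicted label matches the expected one."""
    return sum(e == p for e, p in zip(expected, predicted)) / len(expected)

# Made-up example labels, for illustration only.
expected = ["Account Access", "Technical Issue", "Feature Request", "Account Access"]
predicted = ["Technical Issue", "Technical Issue", "Technical Issue", "Account Access"]

pairs = confusion_pairs(expected, predicted)
for (want, got), count in pairs.most_common():
    print(f"expected {want!r} but got {got!r}: {count}")
print(f"accuracy: {accuracy(expected, predicted):.0%}")
```

The most common wrong pairs are good candidates for extra training examples.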

Invoice Extraction (Qwen3-8B) : Frontier still leads here, but the fine-tuned model fixed something that matters in production. The base model kept dumping reasoning text into the JSON output. After fine-tuning, it never broke schema. It also became more conservative on ambiguous inputs: it would rather leave an invoice number empty than make something up. On clean invoices all models performed nearly identically. The gap only showed on messy OCR inputs with discounts and deposits.
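To make "broke schema" concrete, here's a minimal sketch of the kind of check a production pipeline might run on each response. The field names are hypothetical, since the post doesn't show the actual schema used in the benchmark.

```python
import json

# Hypothetical field names; the real benchmark schema is not shown in the post.
REQUIRED_FIELDS = {"invoice_number", "total", "currency"}

def check_output(raw: str) -> tuple[bool, str]:
    """Return (ok, reason) for one raw model response."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        # Reasoning text before or after the JSON ends up here.
        return False, "not valid JSON"
    if not isinstance(data, dict):
        return False, "top level is not an object"
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        return False, "missing fields: " + ", ".join(sorted(missing))
    # A null value is allowed: an empty field beats an invented one.
    return True, "ok"

clean = '{"invoice_number": null, "total": 120.5, "currency": "EUR"}'
leaky = 'Let me think about this invoice... {"invoice_number": "INV-1", "total": 120.5, "currency": "EUR"}'

print(check_output(clean))
print(check_output(leaky))
```

The second response contains a perfectly good JSON object, but the leading reasoning text makes the whole thing unparseable, which is exactly the failure that hurts downstream code.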

E-Commerce Copywriting (Qwen3-14B) : Frontier wins on stylistic polish. But here's the thing. The fine-tuned model had the lowest hallucination rate of every model tested. It never invented features like "military grade protection" that weren't in the product spec. It preserved every dimension, capacity, and warranty detail without embellishment. Feature coverage went from 75-80% to 85-90% after fine-tuning, and the repetition problem the base model had (product names appearing multiple times in a single description) was completely eliminated.
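Here's a crude sketch of what "feature coverage" and "invented features" could look like as a script. It uses plain substring matching on a made-up product, so treat it as an illustration of the idea only. It is not how Perplexity scored the benchmark.

```python
def feature_coverage(spec_features, description):
    """Fraction of spec features that appear (case-insensitively) in the description."""
    text = description.lower()
    covered = [f for f in spec_features if f.lower() in text]
    return len(covered) / len(spec_features)

def unsupported_claims(watchlist, spec_text, description):
    """Watchlist phrases that show up in the description but not in the spec."""
    spec, text = spec_text.lower(), description.lower()
    return [p for p in watchlist if p.lower() in text and p.lower() not in spec]

# Made-up product, for illustration only.
spec_features = ["5000 mAh", "2-year warranty", "USB-C", "148 x 70 mm"]
spec_text = "Power bank, 5000 mAh, USB-C, 148 x 70 mm, 2-year warranty"
description = "Slim 5000 mAh power bank with USB-C and military grade protection. 2-year warranty."

coverage = feature_coverage(spec_features, description)
claims = unsupported_claims(["military grade protection"], spec_text, description)

print(f"feature coverage: {coverage:.0%}")
print(f"claims not in spec: {claims}")
```

A real check would need fuzzier matching (units, paraphrases), but even this catches a dropped dimension or a marketing phrase that isn't in the spec.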

Medical Record Extraction (Qwen3-32B) : This was the closest race. The biggest gain was in the treatment field. The base model frequently left it completely blank, while the fine-tuned model learned to provide specific treatment plans matching clinical patterns. The most interesting finding from the whole benchmark was here. Frontier models sometimes scored lower because they were too smart, adding guideline-level recommendations instead of extracting what the note actually said. The fine-tuned model better matched the expected extraction style, correctly setting the follow-up flag to "yes" for chronic conditions and "no" for routine procedures.

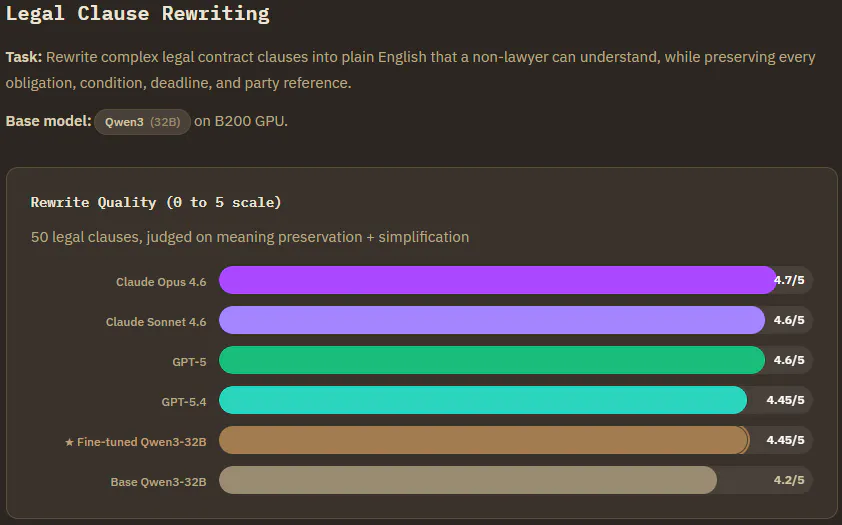

Legal Clause Rewriting (Qwen3-32B) : The fine-tuned model tied with one of the top frontier models and scored within 0.25 of the best. It learned to explicitly restate each legal qualifier in simple terms rather than glossing over them. It preserved temporal details like "2 year post employment period" that the base model sometimes dropped. Frontier models added useful extras like mini glossaries, but that goes beyond the rewrite brief. The fine-tuned model stuck to the task.

Frontier models still win every task on raw score. But in these tests the fine-tuned models hallucinated less, didn't add things that weren't asked for, kept their output format consistent, and learned the specific edge cases. And these were general tasks. With your own specific data, I'd expect the gap to narrow further!

How TuneSalon compares to other options

There are a few ways to fine-tune nowadays. Here's the comparison.

OpenAI fine-tuning is polished and easy, but you can't download your model. It lives on their servers, you pay per token forever, and if they deprecate it, it's gone.

Unsloth is great if you're technical. Fast training, less VRAM, fully local. But you need to set up Python, CUDA drivers, and have a compatible GPU. Their new Studio UI helps a lot though.

AutoTrain works well in the HuggingFace ecosystem, but it has no built-in chat, and dataset generation is a separate tool.

DIY gives you maximum control but requires real ML experience.

TuneSalon AI is built for people who aren't developers. No code, built-in dataset generator, built-in chat, adapter marketplace, and you own everything you create. Cloud GPUs if you don't have hardware, or the desktop app for complete privacy, fully free and open source.

I launched TuneSalon AI today on Product Hunt. Check it out and let me know what you think!

Launch Page : https://www.producthunt.com/products/tunesalon-ai

Desktop app (open source) : https://github.com/Amblablah/tunesalon-ai-desktop

Website : https://tunesalonai.com
