I generated 2,800+ programmatic SEO pages for my n8n project yesterday. Good idea?

Hi makers! 👋

I'm building a search engine for n8n workflows (n8nworkflows.world).

The problem with n8n is that finding specific integration pairs (e.g., "Notion to Slack" or "Gmail to Sheets") is surprisingly hard on the official forums.

So, this weekend, I decided to experiment with Programmatic SEO.

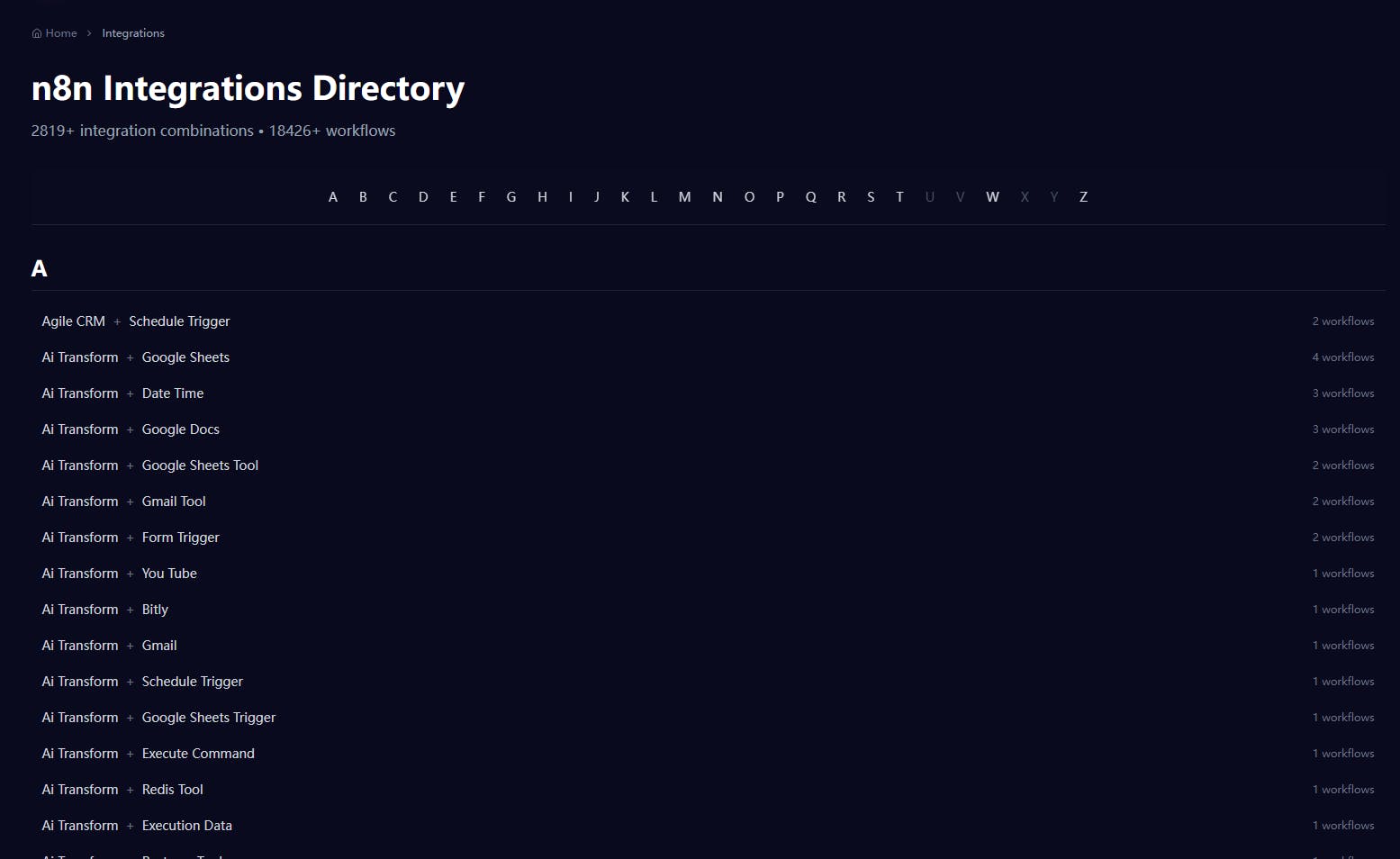

I built a directory that automatically generates a page for every single integration combination.

👉 Result: 2,819+ new landing pages.

👉 Goal: To capture long-tail search traffic for specific automation needs.
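For anyone curious what "a page for every integration combination" can look like in code, here is a minimal sketch. It is not the site's actual implementation: the `IntegrationPair` shape, the `slugify` and `buildPairs` helpers, and the choice to emit both directions (A to B and B to A) are all assumptions made for illustration.

```typescript
// Hypothetical shape; the post does not show the real schema.
interface IntegrationPair {
  source: string;
  target: string;
  slug: string;
}

// Turn "Google Sheets" into "google-sheets" for use in a URL path.
function slugify(name: string): string {
  return name
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .replace(/^-+|-+$/g, "");
}

// Build every ordered pair (A to B and B to A), skipping A to A.
function buildPairs(apps: string[]): IntegrationPair[] {
  const pairs: IntegrationPair[] = [];
  for (const source of apps) {
    for (const target of apps) {
      if (source === target) continue;
      pairs.push({
        source,
        target,
        slug: `${slugify(source)}-to-${slugify(target)}`,
      });
    }
  }
  return pairs;
}

const pairs = buildPairs(["Notion", "Slack", "Gmail", "Google Sheets"]);
console.log(pairs.length); // 12 ordered pairs from 4 apps
console.log(pairs[0].slug); // "notion-to-slack"
```

In a Next.js App Router project, a list like this could be returned from `generateStaticParams` so that each slug becomes a route. Filtering the list down to pairs that actually have workflows behind them would be a sensible extra step.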

You can see the directory here:

https://n8nworkflows.world/integration

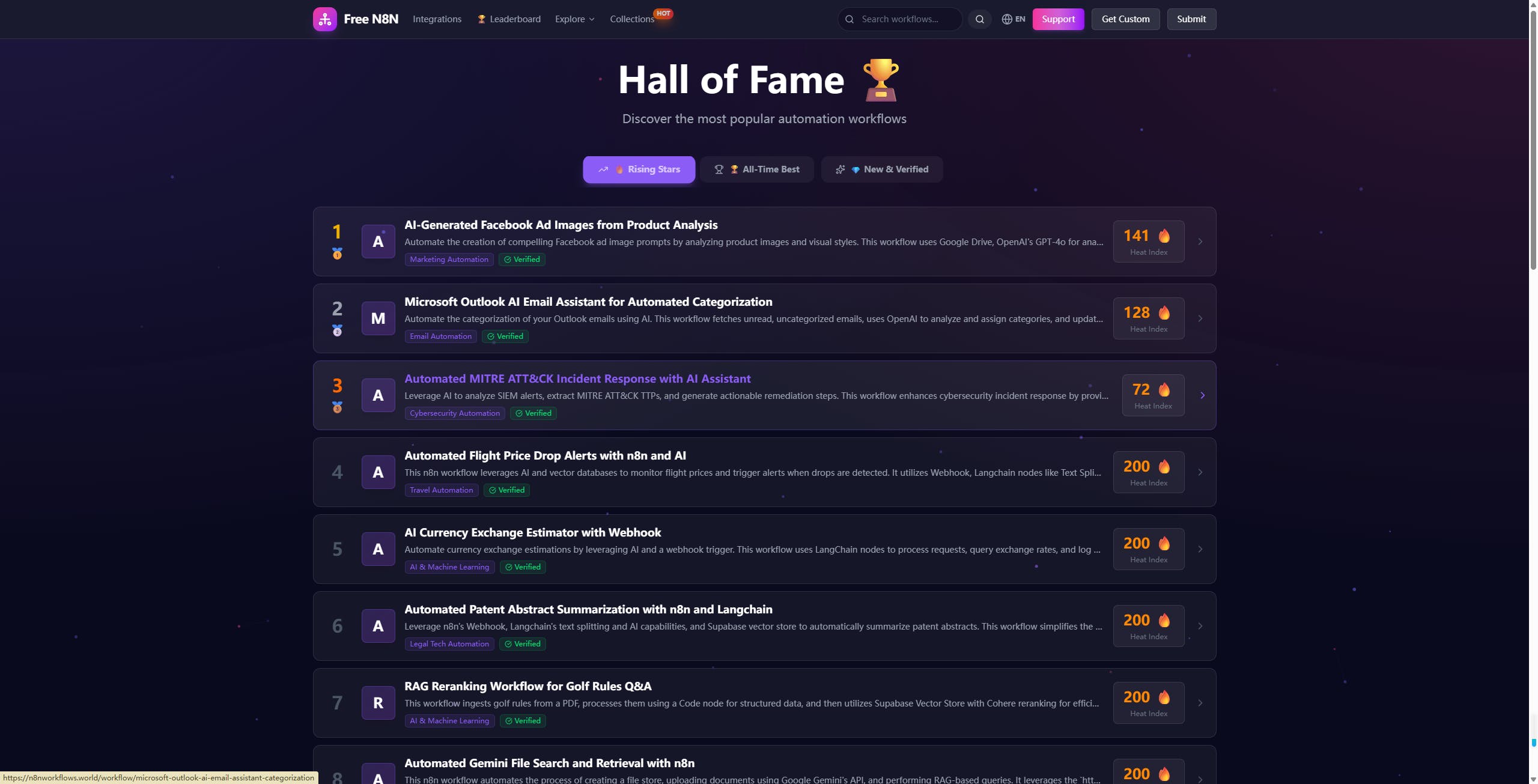

And the "Hall of Fame" leaderboard here:

https://n8nworkflows.world/leaderboard

I'm using Next.js + Supabase.

Question for the community:

Has anyone else tried this "mass page generation" strategy? Did Google index your pages quickly, or did you get penalized?

Would love to hear your feedback on the UX!

Replies

This is my 9 to 5 :)

Programmatic can be a great way to produce a lot of useful pages. We used them at Hostelworld to build out 50,000 place-of-interest pages, then x 17 for languages. But the content on them was curated by a human (me), and then we dynamically adjusted which specific elements were called for each page. That created a lot of commercial traffic. I've also seen this done poorly and have been brought in to tidy up after a content audit etc.

If there is a genuine use case for each page and each requires their own page, then totally. It's the right thing to do.

If you are essentially making doorway pages, where there is little need for that level of nuance on each page and a universal page could suffice, you may see that most get crawled but not indexed.

Smaller sites with lower site authority (not DA or PR, but just general search engine trust) can struggle to get all of their bulk pages indexed and ranked, but that doesn't mean they can't. So I guess all I would say is as above, make sure they have unique distinctions about them, and of course ensure your pages add value vs the top 5 pages that rank for the keywords that page is targeting and you will be good.

Good luck. And if you want a free tracking tool to monitor your keywords for a bit, I'm launching Rankdeck.co.uk on here in a week, and would love some testers, so will be dishing out pro plans for a year for free :)

David

@rankdeck David, the Hostelworld story (50k x 17 languages!) is the dream benchmark. Thanks for sharing that context.

You completely diagnosed my current bottleneck: "Fresh domain vs. Bulk pages."

I realized that without "third-party validators" (backlinks), Google just sees 2,800 orphan pages. To prove this isn't just "doorway spam," I actually just finished writing a technical deep-dive on how I architected this using Next.js + Supabase to ensure every page has unique, verified JSON data.
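As a rough illustration of what "verified JSON data" could mean in practice, here is a small structural check. It is only a sketch and not the author's pipeline: it assumes an exported n8n workflow is a JSON object with a top-level `nodes` array and a `connections` object, and the function name `looksLikeWorkflow` is made up for this example.

```typescript
// Minimal structural check. Assumption: a workflow export is a JSON object
// with a non-empty "nodes" array and a "connections" object.
function looksLikeWorkflow(raw: string): boolean {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return false; // not valid JSON at all
  }
  if (typeof data !== "object" || data === null || Array.isArray(data)) {
    return false;
  }
  const wf = data as Record<string, unknown>;
  const nodes = wf["nodes"];
  const connections = wf["connections"];
  return (
    Array.isArray(nodes) &&
    nodes.length > 0 &&
    typeof connections === "object" &&
    connections !== null &&
    !Array.isArray(connections)
  );
}

console.log(looksLikeWorkflow('{"nodes":[{"name":"Slack"}],"connections":{}}')); // true
console.log(looksLikeWorkflow('{"nodes":[],"connections":{}}')); // false
```

A check like this only confirms the file has the expected shape. It does not prove the workflow runs, so pages that pass it would still need spot checks.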

Question for you: Since you've seen this struggle before, for a solo dev with $0 budget, what's your #1 tip to get those first few "quality validators"? Is it just about grinding guest posts, or something more clever?

P.S. Just joined the Rankdeck waitlist! Looking forward to testing it.

@pan_zo So sorry I didn't reply sooner. Great question. I've actually put more work into making Rankdeck an SEO workflow tool focused on content publishers, adding qualitative insight rather than the ambiguous quantitative data that a lot of these tools produce. Essentially, it uses my SEO brain as inference to add an expert layer on top of the data.

Right, that's my self promo done. Your question.... :)

My five tips for this.

1. Sign up to Qwoted. Build a really fleshed out PR profile for you as a founder etc. And respond to any PR journo requests for related field experts.

It's a grind but it does work. Just takes time and you will answer some requests amazingly and hear nothing. Then two months later you get a message from a journo at the Boston Globe who does a feature and includes you. It goes like that. It's wild.

2. Look at all of the blogs that link to your competitors. If you can get access to that data, you can see which ones have real domain traffic and are legit blogs. When you visit them, if you see an advert popup, you realise they are a pay-to-play website. Scroll to the bottom and look for an advertising page link or a contact. Then just ask them if they do any marketing work or have any marketing opportunities for someone in your space, given the overlap between what they do and what you do. Focus on sites that are related. Don't go for unrelated ones.

3. There are Facebook groups that do link swaps. Travel has a lot of them. You will likely find a whole bunch in different niches. I know travel as that was/is my personal favourite. But that's a good route.

4. If there are any organisations that you can become a member of that include a profile page which is indexable and includes a link to your site, great.

5. Do you have any ability to reach out to local small business charities/orgs and offer free triage clinics etc. using your own expertise? You may find they list you on local resources etc. This is good for B2B.

All of these are a bit of a slog, but it does pay off. Just do an hour of this a day, or one half day a week at first to keep consistent and not send yourself mad. Keep a spreadsheet of what day you messaged, what you messaged, their name, their email. And then you can keep tabs on it all.

StreamAlive - Interactive PPT slides

That looks like a tonne of work! We use programmatic SEO pages at @StreamAlive - Interactive PPT slides.

My advice is to go slowly: a website that suddenly appears with 2,800 pages might be interpreted as spam.

Also, just because you have published 2,800 pages doesn't mean that Google is obligated to crawl and index all of them. If your website is brand new and has no backlinks, it's very likely that Google won't crawl and index them.

@peterclaridge Thanks Peter, that's a solid reality check.

I was definitely worried about the "spam" signal. That's why I'm focusing heavily on the data quality—ensuring that if a user lands on "Notion to Slack," they actually get a working JSON file, not just AI fluff.

I'm currently using IndexNow to drip-feed the pages rather than dumping them all at once, trying to stay under the radar while building up some domain authority.

Appreciate the warning!
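For reference, the drip-feed idea mentioned above can be sketched as splitting the URL list into small IndexNow payloads and sending one per interval. This is an assumption-laden sketch rather than the actual setup: the key value, the batch size of 200, the `/integration/page-N` URLs, and the `buildBatches` helper are all hypothetical. The payload fields (`host`, `key`, `keyLocation`, `urlList`) follow the IndexNow JSON format. Note that IndexNow notifies participating engines such as Bing; as far as I know, Google does not consume it, so it may not affect Google indexing.

```typescript
// Payload fields follow the IndexNow JSON submission format.
interface IndexNowPayload {
  host: string;
  key: string;
  keyLocation: string;
  urlList: string[];
}

// Split a URL list into payloads of at most `batchSize` URLs each.
function buildBatches(
  host: string,
  key: string,
  urls: string[],
  batchSize: number
): IndexNowPayload[] {
  if (batchSize < 1) throw new Error("batchSize must be at least 1");
  const batches: IndexNowPayload[] = [];
  for (let i = 0; i < urls.length; i += batchSize) {
    batches.push({
      host,
      key,
      // Assumes the key file is hosted at the site root as <key>.txt.
      keyLocation: `https://${host}/${key}.txt`,
      urlList: urls.slice(i, i + batchSize),
    });
  }
  return batches;
}

// Placeholder URLs; the real slugs are not shown in the post.
const urls = Array.from(
  { length: 2819 },
  (_, i) => `https://n8nworkflows.world/integration/page-${i}`
);
const batches = buildBatches("n8nworkflows.world", "hypothetical-key", urls, 200);
console.log(batches.length); // 15 batches: 14 of 200 URLs and one of 19
```

Each payload would then be sent as an HTTP POST with a JSON body to an IndexNow endpoint (for example `https://api.indexnow.org/indexnow`), one batch per day or per few hours depending on how slowly you want to go.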

StreamAlive - Interactive PPT slides

@pan_zo You're welcome, and good luck!

As a follow-up: the travel booking site I mentioned in the last message had their pages indexed in 48 hours for the first lot and in around 3-4 weeks for the full lot, give or take a percentage.

I think it comes down to whether the space is empty, or you're a trusted name for that particular grouping of entities/n-grams/queries etc. If they are organised into topically related folders, sub folders, breadcrumbed together etc, you may want to work to build some citations to your hub pages to ensure search engines see that these pages are externally trustworthy too.

Ideally backlinks that you'd tell your parents about: ones that relate to your sector and have significant domain traffic, where the link is in an article that relates to what you do, sits in a passage of text that relates to the page it links to on your site, and is neither on an orphan page nor on a subdomain of that root domain.

Sites I've worked on that pumped out a lot of pages, even pages with value, but were on a newish domain and had no third-party quality validators such as relevant backlinks, tended to struggle more in getting programmatic results to stick.

D
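On the point above about organising pages into topically related folders that are breadcrumbed together, one common way to expose that hierarchy to search engines is schema.org `BreadcrumbList` markup. Below is a minimal sketch that builds the JSON-LD string. The `Crumb` type, the `breadcrumbJsonLd` helper, and the `/integration/notion` and `/integration/notion-to-slack` paths are hypothetical; only `https://n8nworkflows.world/integration` appears in the thread.

```typescript
// Hypothetical input type: one entry per level of the hierarchy.
interface Crumb {
  name: string;
  url: string;
}

// Build a schema.org BreadcrumbList as a JSON-LD string.
function breadcrumbJsonLd(crumbs: Crumb[]): string {
  return JSON.stringify({
    "@context": "https://schema.org",
    "@type": "BreadcrumbList",
    itemListElement: crumbs.map((crumb, index) => ({
      "@type": "ListItem",
      position: index + 1, // positions are 1-based
      name: crumb.name,
      item: crumb.url,
    })),
  });
}

const jsonLd = breadcrumbJsonLd([
  { name: "Integrations", url: "https://n8nworkflows.world/integration" },
  // The two paths below are illustrative, not confirmed routes.
  { name: "Notion", url: "https://n8nworkflows.world/integration/notion" },
  { name: "Notion to Slack", url: "https://n8nworkflows.world/integration/notion-to-slack" },
]);
console.log(jsonLd);
```

The resulting string would go inside a `<script type="application/ld+json">` tag on each page, alongside visible breadcrumb links that point back to the hub pages.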