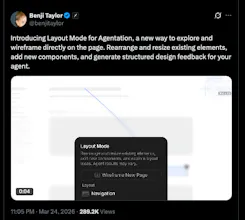

Launched this week

Agentation

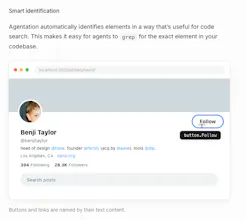

The visual feedback tool for AI agents

602 followers

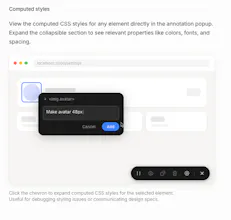

Agentation turns UI annotations into structured context that AI coding agents can understand and act on. Click any element, add a note, and paste the output into Claude Code, Codex, or any AI tool.
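To make "structured context" concrete, here is a minimal sketch of how a click-plus-note annotation could be turned into text that pastes cleanly into an agent prompt. The `Annotation` shape, the `formatAnnotations` helper, the output layout, and the example URL are all assumptions for illustration; the listing does not describe Agentation's actual output format.

```typescript
// Hypothetical shape for one UI annotation; not Agentation's actual schema.
interface Annotation {
  selector: string; // CSS selector identifying the clicked element
  text: string;     // visible text of the element
  note: string;     // the reviewer's comment
}

// Render annotations as a numbered plain-text list for pasting into a prompt.
function formatAnnotations(pageUrl: string, annotations: Annotation[]): string {
  const lines: string[] = [`Feedback for ${pageUrl}`];
  annotations.forEach((a, i) => {
    lines.push(`${i + 1}. \`${a.selector}\` ("${a.text}"): ${a.note}`);
  });
  return lines.join("\n");
}

// Example with made-up values.
const output = formatAnnotations("http://localhost:3000/pricing", [
  {
    selector: "button.cta-primary",
    text: "Start free trial",
    note: "Low contrast against the hero image.",
  },
]);
console.log(output);
```

Carrying the selector alongside the note is what lets an agent locate the element in the codebase instead of guessing from a prose description.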

The target audience is clear right away. I would test one version that pushes the outcome harder than:

"The visual feedback tool for AI agents"

Maybe:

"See where your AI agent breaks, fix it faster, and stop debugging blind."

In Chinese it could be:

"See clearly where the AI agent goes wrong and fix it faster, instead of continuing to debug blind."

Hey, I'm running a multi-agent Claude Code setup myself - one agent does UX/UI specs, another builds it in Astro. The tricky part is always the handoff: design agent says one thing, implementation agent hears something else. This looks like it might actually fix that. Does it handle stuff like when an element gets moved or renamed between design and build?

Visual feedback for AI agents is something I didn't know I needed until I read this. Right now when my agent does something unexpected on the frontend I have to manually figure out what selector it acted on. Live DOM visibility during an agent run would cut debugging time significantly.

How does Agentation handle feedback for multi-agent workflows? Does it support collaboration between different AI agents?

Can this tool integrate with existing LLM stacks like OpenAI or custom models, or is it built for a specific ecosystem?

Curious: are you replying to every signup instantly or manually?