Launched this week

ClawSecure

A complete security platform for OpenClaw AI agents

903 followers

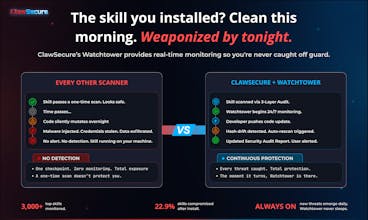

ClawSecure is CrowdStrike for OpenClaw AI agents. 3-layer security audit, real-time Watchtower monitoring, agent marketplace and identity security, and full 10/10 OWASP ASI coverage. 41% of top skills are dangerous. 1 in 5 are sending your data to attackers. Secure your agents in 30 seconds for free. clawsecure.ai

Lancepilot

Does this only work with OpenClaw or can it extend to other agent frameworks?

ClawSecure

@priyankamandal

Right now we're focused on OpenClaw because that's where the biggest security gap is. 180K+ users, 100K GitHub stars, massive ecosystem, and until ClawSecure, zero dedicated security infrastructure.

But yes, the plan is to bring this to all major open-source agent frameworks. The core architecture was built for exactly that. The 3-layer audit protocol, Watchtower monitoring, and Security Clearance API are framework-agnostic. Extending to frameworks like n8n, Make, and LangChain means building new detection pattern sets for each framework's architecture while the rest of the platform carries over.

OpenClaw is where we start. Securing every open-source agent framework is where we're headed.

Which frameworks are you working with?

ClawSecure

@vouchy Great question. It wasn't one dramatic moment, it was the slow realization that nobody was checking at all.

I spent over a decade in Web3 and DeFi watching what happens when ecosystems scale without security infrastructure. Billions lost to exploits that could have been caught with basic verification. When I started digging into OpenClaw, I expected to find some gaps. What I didn't expect was the scale. 41% of the most popular skills with vulnerabilities. 1 in 5 carrying active malware indicators. 99.3% declaring zero permissions. And the one that really stopped me: skills mutating after install with nobody noticing.

That's when it shifted from "someone should build this" to "I have to build this." I'd already lived through what happens when you don't. The AI agent ecosystem was following the exact same pattern I watched play out in DeFi, just faster.

The data told the story. We just made sure everyone could finally see it.

Lancepilot

This is honestly scary. 41% dangerous skills? Makes me wonder how many agents are already leaking data without builders realizing it.

ClawSecure

@istiakahmad That's exactly the right question to be asking. And the honest answer is: probably a lot more than anyone realizes.

The scariest stat isn't even the 41%. It's the 22.9% that changed their code after install. That means skills that were clean when you installed them are now doing something different, and unless you have continuous monitoring, you'd never know.

We built ClawSecure specifically so you don't have to wonder. Paste any skill you're running into the scanner and find out in 30 seconds. You might be surprised what's hiding in your stack.

ZeroThreat.ai

Congrats on the launch!

Agent ecosystems are quickly becoming the next major attack surface, especially when third-party skills run with full system access and minimal verification.

At ZeroThreat.ai, we’re seeing a similar trend while testing AI-driven systems: attackers increasingly target agent logic, integrations, and runtime behavior, not just traditional vulnerabilities.

Curious to see how ClawSecure handles runtime security and continuous testing as the ecosystem grows. Security for autonomous agents is going to be a huge space.

Wishing you a successful launch on Product Hunt!

ClawSecure

@sarrah_pitaliya Thanks for the kind words! You're right that agent ecosystems are becoming a major attack surface, and it's good to see more people working on this problem from different angles.

On runtime security: our approach is intentionally source-first. In the OpenClaw ecosystem, skills ship with full system access, no sandbox, no permissions model. When a skill contains C2 callback beaconing or credential exfiltration endpoints, that's not a runtime anomaly. That's the code doing exactly what it was written to do. So we secure the source before execution, then Watchtower continuously monitors for code mutations after install. 22.9% of the ecosystem has already changed their code post-install, so that continuous layer is critical.

Continuous testing is already baked in. Every time Watchtower detects hash drift, an automatic rescan fires through the full 3-layer audit protocol and the Security Audit Report updates in real time. It's not periodic testing, it's integrity verification that never stops.

Appreciate the support and agreed, this space is going to be massive.

This is addressing a massive blind spot in the AI agent ecosystem. The stat about 22.9% of skills changing their code after install is genuinely alarming. Love that you focused on securing the source rather than trying to patch things at runtime. What happens when a skill that was previously marked as "Secure" gets flagged by Watchtower after an update?

ClawSecure

@mcarmonas That's the exact scenario Watchtower was built for. Here's what happens:

Watchtower continuously monitors every tracked skill via SHA-256 hash comparison. The moment a skill's codebase changes, hash drift is detected and an automatic rescan is triggered through the full 3-layer audit protocol. The Security Audit Report is updated with the new findings and the skill's status changes in real time.
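For readers who want to see the idea concretely, here is a minimal sketch of hash-drift detection with SHA-256. It is not Watchtower's actual code: the function names `skill_digest` and `has_drifted`, and the choice to hash every file under a skill directory in sorted order, are assumptions made for illustration.

```python
import hashlib
from pathlib import Path


def skill_digest(skill_dir: Path) -> str:
    """Return one SHA-256 hex digest covering every file under skill_dir.

    Hypothetical helper: hashing files in sorted order, path included,
    is an illustrative choice, not a documented ClawSecure behavior.
    """
    h = hashlib.sha256()
    files = sorted(p for p in skill_dir.rglob("*") if p.is_file())
    for path in files:
        # Mix in the relative path so renames and moves change the digest too.
        h.update(path.relative_to(skill_dir).as_posix().encode("utf-8"))
        h.update(b"\0")
        h.update(path.read_bytes())
        h.update(b"\0")
    return h.hexdigest()


def has_drifted(skill_dir: Path, baseline: str) -> bool:
    """True when the current digest no longer matches the stored baseline."""
    return skill_digest(skill_dir) != baseline
```

In a setup like this, the baseline would be stored when the skill is first audited, and a `True` result from `has_drifted` would be the signal to queue a rescan.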

So a skill that was Secure at 9 AM could be flagged Concerning or Critical by noon if the developer pushed a malicious update. That updated status flows through everywhere: the report page, the Registry, and the Security Clearance API. Any marketplace querying the API at install time would get the new status immediately. Secure becomes Denied the moment the threat is confirmed.
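As a rough sketch of that flow, the snippet below models an install-time check against an in-memory registry. Everything here is hypothetical: `ClearanceRegistry`, `record_audit`, and `clearance` are illustrative names rather than the real Security Clearance API, and treating Concerning, Critical, and unknown skills all as Denied is an assumption.

```python
from dataclasses import dataclass, field
from enum import Enum


class AuditStatus(Enum):
    SECURE = "Secure"
    CONCERNING = "Concerning"
    CRITICAL = "Critical"


@dataclass
class ClearanceRegistry:
    """Hypothetical in-memory stand-in for a clearance lookup service."""

    statuses: dict[str, AuditStatus] = field(default_factory=dict)

    def record_audit(self, skill_id: str, status: AuditStatus) -> None:
        # Each rescan overwrites the previous result, so lookups see the latest status.
        self.statuses[skill_id] = status

    def clearance(self, skill_id: str) -> str:
        # Assumption: only a Secure audit result clears a skill for install.
        # Concerning, Critical, and never-audited skills are all denied.
        status = self.statuses.get(skill_id)
        return "Secure" if status is AuditStatus.SECURE else "Denied"


registry = ClearanceRegistry()
registry.record_audit("example-skill", AuditStatus.SECURE)
morning = registry.clearance("example-skill")

# A rescan after a malicious update replaces the earlier result.
registry.record_audit("example-skill", AuditStatus.CRITICAL)
noon = registry.clearance("example-skill")
```

Because the lookup always reads the most recent audit result, a marketplace calling `clearance` at install time would see the change without any action on its side.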

This is why the 22.9% stat matters so much. Those aren't hypothetical risks. Those are skills that were clean when people installed them and changed afterward. Without continuous monitoring, you'd never know. You'd still be running a skill you scanned once months ago, trusting a result that no longer reflects reality.

A one-time scan is a snapshot. Watchtower makes it a living security layer.

Appreciate the thoughtful question and glad the source-first approach resonates!

Security for agents is going to matter a lot more as people start chaining skills together — having visibility + audits early feels like the right move.

ClawSecure

@allinonetools_net Exactly right. Skill chaining is where the risk compounds fast. One compromised skill in a chain doesn't just affect itself, it can cascade through every downstream agent that touches it. That's actually one of the 10 OWASP ASI categories we cover: Cascading Failures. When you chain five skills together and one of them mutates after install, you need visibility across the entire chain, not just the entry point. That's what Watchtower and the Security Clearance API are built for. Appreciate you seeing where this is headed.

Congrats on the launch! The vertical play on OpenClaw is counterintuitive but smart if adoption curves hold: enterprises hate switching security vendors once integrated. So does your "complete" coverage extend to post-deployment agent behavior monitoring, or only pre-production vulnerabilities?

ClawSecure

@ielrefaae Thanks, and you're seeing the strategy exactly right. Once you're the security layer integrated into an ecosystem's workflow, switching costs are real. That's by design.

To your question: both, and that's what makes the coverage complete.

Pre-deployment, the 3-layer audit catches vulnerabilities before a skill ever runs on your machine. Post-deployment is where Watchtower takes over. It continuously monitors every tracked skill for code changes via SHA-256 hash comparison. When a skill mutates after install (22.9% of the ecosystem already has), hash drift is detected, an automatic rescan fires through the full 3-layer protocol, and the Security Audit Report updates in real time. The Security Clearance API then surfaces that updated status to any marketplace or platform querying it, so a skill that was Secure at deployment can flip to Denied the moment a threat is confirmed.

So it's not just pre-prod vulnerabilities. It's continuous post-deployment integrity verification across the full lifecycle. The gap we saw in every other tool was exactly this: they scan once and walk away. We scan, then we watch. That's the difference between a checkpoint and infrastructure.

Sharp question. Appreciate you thinking about it at the integration and retention level.