Launching today

JsonFixer

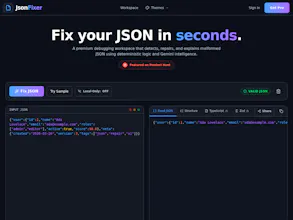

Fix malformed JSON from LLMs, APIs, and config files.

JsonFixer is a free AI-powered JSON repair workspace. Paste broken JSON from LLM outputs, API responses, or config files, and a four-stage self-healing engine (WASM → jsonrepair → AI → AI retry) fixes it in under 200 ms. A REST API is also available.

Hey PH! I'm Dechefini, the architect of JsonFixer.

This started when I kept losing 20 minutes per sprint dealing with malformed JSON from ChatGPT, Claude, and API responses. JSON.parse would throw, I'd stare at a 2,000-character blob, and manually hunt for the offending character. After the tenth time I thought: there has to be a better way.

JsonFixer runs broken JSON through a four-stage self-healing pipeline:

1. WASM heuristics: catches 90% of cases in ~5 ms, entirely in-browser
2. jsonrepair: handles structural issues like trailing commas and Python literals
3. AI: for ambiguous or severely mangled LLM output
4. AI retry: last resort with recursive strategy

Stages 1–2 run entirely in your browser. Zero data leaves your machine unless you explicitly request AI repair — and there's a Local-Only mode toggle to prevent that entirely.
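For anyone curious what a staged fallback like this can look like, here is a minimal sketch. It is not JsonFixer's actual code: the stage names, the `repairJson` function, the `localOnly` flag, and the regex-based fixes are all assumptions made for illustration, and the remote stage is a stub that sends nothing anywhere. The idea is simply to try the cheapest fix first, validate with `JSON.parse` after each stage, and skip any stage that would leave the machine when local-only mode is on.

```typescript
// Hypothetical sketch of a staged repair pipeline; not JsonFixer's implementation.
type Stage = {
  name: string;
  remote: boolean; // true if the stage would send data off the machine
  repair: (input: string) => string; // returns a candidate repaired string
};

// Wraps the parsed value so a valid JSON `null` is distinguishable from failure.
function tryParse(text: string): { value: unknown } | null {
  try {
    return { value: JSON.parse(text) };
  } catch {
    return null;
  }
}

const stages: Stage[] = [
  {
    // Cheap heuristic: drop chatter around the outermost object or array.
    name: "extract",
    remote: false,
    repair: (s) => {
      const start = s.search(/[{\[]/);
      const end = Math.max(s.lastIndexOf("}"), s.lastIndexOf("]"));
      return start >= 0 && end > start ? s.slice(start, end + 1) : s;
    },
  },
  {
    // Naive structural fixes: trailing commas and Python literals.
    // These regexes also touch text inside string values, so this is a toy.
    name: "local-fixes",
    remote: false,
    repair: (s) =>
      s
        .replace(/,\s*([}\]])/g, "$1")
        .replace(/\bTrue\b/g, "true")
        .replace(/\bFalse\b/g, "false")
        .replace(/\bNone\b/g, "null"),
  },
  {
    // Placeholder for a remote AI repair call; here it changes nothing.
    name: "remote-ai",
    remote: true,
    repair: (s) => s,
  },
];

// Runs stages in order, feeding each stage the previous stage's output,
// and returns as soon as the text parses. Returns null if nothing worked.
function repairJson(
  input: string,
  localOnly: boolean
): { stage: string; value: unknown } | null {
  const direct = tryParse(input);
  if (direct) return { stage: "none", value: direct.value };

  let current = input;
  for (const stage of stages) {
    if (stage.remote && localOnly) continue; // never leave the machine
    current = stage.repair(current);
    const parsed = tryParse(current);
    if (parsed) return { stage: stage.name, value: parsed.value };
  }
  return null;
}
```

With these toy stages, `repairJson('{"ok": True, "items": [1, 2,],}', true)` is resolved by the second local stage, while a truncated input such as `{"a": ` falls through every stage and returns `null`.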

There's also a REST API, npm SDK (npm install jsonfixer), schema conversion to TypeScript/Zod/Pydantic/Go, and repair history.

Free forever for the web tool. Would love to hear how you're using it!

— Dechefini

If you’ve ever copied JSON from ChatGPT, pasted it into your codebase, and spent 15 minutes hunting a missing quote… that’s exactly the problem this fixes.

This software just fixed something I spent 2 hours trying to figure out myself. I used an LLM to generate code for a project I'm working on, and it gave me broken JSON. I checked it with another LLM, which said it should be working fine. I couldn't for the life of me figure out the issue until I stumbled on your software. It fixed my issue in seconds and gave me an explanation to help me avoid this issue in the future. Thank you so much.

Curious if people are seeing more issues from API payloads lately or mostly LLM output?