JuryArena

Beyond vibe eval: AI-jury picks the right LLM for you.

7 followers

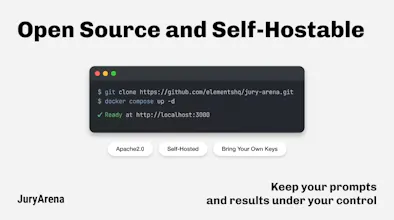

Choosing the right LLM for production shouldn't be based on intuition. JuryArena runs arena-style trials on your real prompts: an AI jury watches two models go head-to-head, picks the winner, and saves every result as a reviewable trace. No ground truth needed. Open source and self-hostable.
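To make the flow above concrete, here is a minimal sketch of one head-to-head trial. It is not JuryArena's actual API: `Model`, `Juror`, `Trace`, and `run_trial` are hypothetical names, the models and jurors are plain stub functions standing in for LLM calls, and majority voting is an assumed way of picking the winner.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical types for illustration only; not JuryArena's real interface.
Model = Callable[[str], str]            # prompt -> response
Juror = Callable[[str, str, str], str]  # (prompt, response_a, response_b) -> "A" or "B"


@dataclass
class Trace:
    """Everything needed to review one trial after the fact."""
    prompt: str
    response_a: str
    response_b: str
    votes: List[str] = field(default_factory=list)
    winner: str = ""


def run_trial(prompt: str, model_a: Model, model_b: Model, jurors: List[Juror]) -> Trace:
    """Run both models on one prompt, collect juror votes, and record the result."""
    a, b = model_a(prompt), model_b(prompt)
    votes = [juror(prompt, a, b) for juror in jurors]
    counts = Counter(votes)
    # Assumed rule: simple majority; an even split is recorded as a tie.
    if counts["A"] > counts["B"]:
        winner = "A"
    elif counts["B"] > counts["A"]:
        winner = "B"
    else:
        winner = "tie"
    return Trace(prompt, a, b, votes, winner)


# Stub models and jurors so the sketch runs without any LLM provider.
def terse(prompt: str) -> str:
    return "Yes."


def verbose(prompt: str) -> str:
    return "Yes, because " + prompt.lower()


def prefers_longer(prompt: str, a: str, b: str) -> str:
    return "A" if len(a) >= len(b) else "B"


def prefers_shorter(prompt: str, a: str, b: str) -> str:
    return "A" if len(a) <= len(b) else "B"


trace = run_trial(
    "Is the sky blue?",
    terse,
    verbose,
    [prefers_longer, prefers_longer, prefers_shorter],
)
print(trace.winner, trace.votes)
```

No reference answer appears anywhere in the sketch: the jurors only compare the two responses against each other, which is why no ground truth is needed, and the returned `Trace` keeps the individual votes so the decision can be reviewed later.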

Hi, I'm Fujisawa from ELEMENTS.

We built JuryArena because I got tired of deciding which LLM to use based on vague impressions.

Every time a new LLM came out, we went through the same cycle: try a few prompts, have a long team debate, pick one, and later wonder whether we chose the right model. It always felt too important to leave to intuition, but building evaluation criteria that actually fit our product was never as simple as it sounds.

That frustration is what led to JuryArena.

I wanted a way to compare models on real prompts, make the process more systematic, and keep a clear record of why we made that choice, without needing ground truth for every task.

So we built a tool that helps teams do exactly that.

If you're also tired of vibe-based evaluation, give JuryArena a try. I'd love to hear what you think.