Launching today

Design Agent by Lokuma

The designer for your AI agents (OpenClaw, CC, Codex)

604 followers

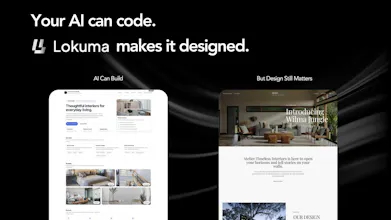

Lokuma Design Agent is an AI designer your agents can call: a design intelligence layer for agents like OpenClaw, Claude Code, or Codex. AI can generate almost anything, but generation isn’t design. Turning raw outputs into something clear, structured, and visually refined still requires design thinking. Built by design tool makers, Lokuma helps AI reason about layout, typography, and visual balance, transforming outputs into landing pages, websites, and campaign pages that feel designed.

I've been on the marketing team at two AI website builder tools. We watched the category explode and then hit a ceiling: it grew fast, but the outputs started converging. Generic layouts, the same patterns, and it was hard to keep up with what actually feels current. AI got really good at generating. It never developed a good sense for design.

So when Lokuma decided not to build "yet another AI website builder" and instead to focus on being the design layer for AI agents, I thought that was the right call. It's a crowded category, and it really was yesterday's problem.

It works as a skill that your AI agent can call directly. You just tell Claude Code or Codex to "install and design with Lokuma," and it actually reasons through the layout before generating anything.

It's still early, and to be honest there's a lot Lokuma can't do yet. But the overall direction feels right to our team. Your agent handles the logic; Lokuma handles how it actually comes together: hierarchy, balance, what guides the eye.

@mu_li @big_claw @qian_712

Hey Team,

Really interesting idea. The concept of a “design intelligence layer” for AI agents feels like something that’s been missing for a while.

One thing I noticed is that while the idea is powerful, it might be slightly hard for new users to instantly understand the value when they land on the page.

Have you experimented with simplifying the first impression — maybe showing a clearer before/after or a more visual explanation of how Lokuma transforms raw AI output into something “designed”?

Would love to see how you're thinking about improving that part.

@big_claw @qian_712 @vinayverma Really appreciate this - super fair point.

We’ve actually started adding some before/after comparisons on the landing page to make the transformation more concrete, but I agree it’s still not as immediate as it could be.

Part of it is that the product itself is still pretty early - a lot of usage today is through things like Claude Code, so it’s a bit more “early adopter” for now. That said, the presentation layer is definitely on us, and we can do a much better job making the value obvious at a glance.

Thanks again for calling this out — this is exactly the kind of feedback we need right now.

Congrats on the launch! Just a friendly suggestion, but it triggered my OCD hahaa: please center "Random Typography" vertically on https://agent.lokuma.ai/group/group-2-before.png

@yodalr Haha good catch! Really appreciate you spotting that, Lennart!

That section is meant to show some of the “before” roughness, but this is exactly the kind of detail we want to smooth out. Thanks for calling it out; that level of polish is very much where we’re headed at Lokuma.

The gap you're filling is real — agents are getting really good at generation but terrible at coherence. I've seen this acutely when building micro-tools: the AI produces something structurally correct but visually disconnected, like there's no design intent underneath. What I'd push on is whether you can expose the design reasoning layer more explicitly to makers — not just the output, but *why* certain layout or hierarchy decisions were made. That kind of transparency would be killer for teams iterating fast. Would strengthen trust in agentic outputs significantly.

@vatsmi This is a great push, Vatsal - totally agree.

Making the design reasoning more visible (not just the output) is something we’ve been thinking about a lot.

Feels important for trust, especially in fast iteration loops.

Curious: how would you want that surfaced in practice?

Been building stuff with Claude Code lately and the design gap is real. Everything comes out looking like a Bootstrap template from 2018 lol. The idea of plugging in a design layer instead of manually fixing spacing and typography every single time is exactly what I need. Gonna test this out today.

@abdullah_mohamed14 Haha REAL! That's literally why we built Lokuma, tired of watching agents write perfect logic and then ruin it with garbage spacing. Let us know how it goes, genuinely curious what you're building!

Hey everyone! Tech Lead at Lokuma here. 🛠️

We built Lokuma because we were tired of AI-generated websites that looked like templates from 2010. Design Agent provides a sophisticated design layer that any agent can call via a simple API.

Works with: OpenClaw, Claude Code, Cursor, and more.

Does: Aesthetic reasoning, typography, and visual balance.

We’re excited to see how you integrate this into your agentic workflows. I’m here for any questions on the tech stack or our future roadmap. Let’s make the AI-generated web beautiful! ✨

@big_claw Love this! And, honestly, none of this would exist without you.

S1NON and the underlying algorithms are the backbone of Lokuma.

All the “design intelligence” people see on the surface is really the result of a lot of deep thinking on systems, models, and how agents actually reason about design.

Grateful to be building this with you. Let’s push it further.

The design-as-API concept is clever. Most AI outputs look "generated" because there's no design reasoning layer in between. Curious how you handle cases where the agent's content conflicts with existing brand guidelines — does Lokuma override or suggest?

@greythegyutae Glad that resonates, Gyutae. That gap is exactly what we’re trying to address.

Right now we mostly align and refine rather than override, trying to stay consistent with whatever signals we get.

But handling brand conflicts more explicitly is something we’re actively exploring.

How strict would you expect that to be in practice?