Launched this week

Promptly

An AI Cost Optimization Infrastructure for LLM Applications

45 followers

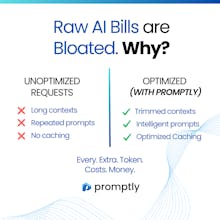

Promptly is an OpenAI-compatible proxy that cuts your LLM spend by up to 60% with smart routing, prompt optimization, semantic caching, and context pruning. Works with OpenAI, Anthropic, and Google.
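To make one of these techniques concrete, here is a minimal sketch of how semantic caching can work in general. It is not Promptly's implementation: the `SemanticCache` class, the `cached_completion` helper, the 0.8 threshold, and the toy bag-of-words `embed` function are all assumptions made for illustration. A real system would likely use a proper embedding model and a vector index.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    """Hypothetical cache that returns a stored response for similar prompts."""

    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold  # assumed similarity cutoff for a cache hit
        self.entries = []  # list of (vector, response) pairs

    def get(self, prompt: str):
        vec = embed(prompt)
        # Linear scan for the most similar stored prompt.
        best = max(self.entries, key=lambda e: cosine(vec, e[0]), default=None)
        if best is not None and cosine(vec, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))


def cached_completion(cache: SemanticCache, prompt: str, call_model) -> str:
    """Return a cached response if one is close enough, else call the model."""
    hit = cache.get(prompt)
    if hit is not None:
        return hit
    response = call_model(prompt)  # the expensive LLM call happens only on a miss
    cache.put(prompt, response)
    return response
```

With this sketch, two prompts that differ only in casing or punctuation resolve to the same cached answer, so the model is called once instead of twice.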

What started as a constant frustration while building AI has now turned into something we truly believe in.

The future of AI isn’t just about powerful models; it’s about making them efficient, scalable, and accessible.

Really excited to finally share Promptly 🚀

If you’re working with LLMs, you’ve probably seen how quickly costs can scale in production.

Promptly helps optimize requests, reduce unnecessary tokens, and make AI systems more efficient - without changing your existing setup.

Would love to hear your thoughts and feedback 🙌