Launched this week

SNEWPapers

The World's First AI Newspaper Archive

139 followers

I taught machines to read newspapers, gave them 250 years of data, extracted everything (6 million+ stories so far), separated the ads from the content, and categorized it all. You can search semantically or with your own AI research assistant, get the actual articles with full-text extraction, and build and share collections. As far as I know, this has never been done before: the data isn't on Google or in any LLM, only on SNEWPapers.

SNEWPapers

Hey Product Hunt! 👋

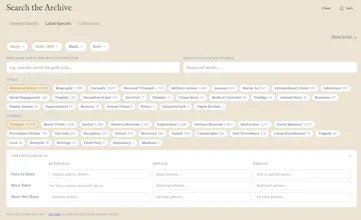

I'm excited to share SNEWPapers — the world’s first AI-powered historical newspaper archive. We’ve read and organized 6 million+ stories from 250 years of American newspapers (1730s–1960s) so you can finally explore history by meaning, not just broken keywords.

Maybe the biggest news since sliced bread for digital humanities scholars, historians, researchers, and genealogists?

I built this after trying to research references in The Fourth Turning. Traditional archives dumped me into faded page scans with terrible search. So I created my own.

The result: clean, summarized articles with nearly perfect full-text OCR extraction, plus The Sleuth (your personal AI research assistant), smart categorization (24 categories / 1,000+ sub-categories), Collections for sharing, and a fun daily Today in History feed.

Quick start (10 minutes): → Tutorials

A few things I’d love your thoughts on:

Today in History — Would you actually open this daily?

Search + Sleuth — How useful is semantic search and the AI assistant for your research?

Collections — Would you use/share public collections?

Pricing: 7-day free trial. I priced it ~50% below traditional archives because we actually deliver usable, intelligent access. Product Hunt special: Use PRODUCTHUNT20 for 20% off any plan (valid until May 8).

It was a huge technical journey. I had to figure out how to acquire, store, and process nearly a million high-resolution newspaper images; build custom multimodal systems to detect and segment articles; massively improve OCR on centuries-old ink; train models to understand newspaper layout and context; run prompt engineering at scale; balance cost vs. quality with LLMs and vision LLMs (vLLMs); build semantic and agentic search infrastructure that actually works on millions of documents; and scale a cost-effective GPU fleet.

Some “AWS-ish” stats so far:

115,000+ GPU GB-hours (OCR / Layouts)

26,000+ Lambda GB-hours moving data around

44.7 billion LLM/vLLM tokens processed

7 months of 80+ hour work weeks (organic neural network compute)
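Dividing those totals by the corpus size gives rough per-unit averages. This is a back-of-the-envelope sketch that assumes every stat covers the same ~1 million page images and ~6 million stories mentioned above; the post doesn't say so explicitly, and any reprocessing runs would inflate the per-unit numbers.

```python
# Back-of-the-envelope averages from the launch stats.
# Assumption: every total below covers the same corpus of roughly
# 1,000,000 page images and 6,000,000 extracted stories.
PAGES = 1_000_000
STORIES = 6_000_000
GPU_GB_HOURS = 115_000
LAMBDA_GB_HOURS = 26_000
LLM_TOKENS = 44_700_000_000


def per_unit(total: float, units: int) -> float:
    """Spread a total evenly over a number of units."""
    return total / units


stories_per_page = per_unit(STORIES, PAGES)
tokens_per_story = per_unit(LLM_TOKENS, STORIES)
tokens_per_page = per_unit(LLM_TOKENS, PAGES)
gpu_gb_hours_per_page = per_unit(GPU_GB_HOURS, PAGES)
lambda_gb_hours_per_page = per_unit(LAMBDA_GB_HOURS, PAGES)

if __name__ == "__main__":
    print(f"stories per page:         {stories_per_page:.1f}")          # 6.0
    print(f"LLM tokens per story:     {tokens_per_story:,.0f}")         # 7,450
    print(f"LLM tokens per page:      {tokens_per_page:,.0f}")          # 44,700
    print(f"GPU GB-hours per page:    {gpu_gb_hours_per_page:.3f}")     # 0.115
    print(f"Lambda GB-hours per page: {lambda_gb_hours_per_page:.3f}")  # 0.026
```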

Would love your honest feedback and discoveries you make in the archive! 🫡 (here or hello@snewpapers.com)

Very interesting! A few things: (1) Is this just the LOC collection of papers, Chronicling America, or are you getting papers from elsewhere too? I've got a few ideas for additional sources. (2) I'd love to know what tech stack you settled on for the OCR/VLM work — I do a lot of work with 19th-c U.S. newspapers and my quest is to figure out the perfect pipeline/workflow. (3) Just FYI, I just signed up for an account and it immediately told me: "Your free trial has expired. Choose a plan to unlock all features." You might want to change that language ("To start your 7-day free trial, choose a plan..."), and you might want to offer some free searches (without access to the results) to let people see if the content they're interested in is in there. That's what British Newspaper Archive does — you try a search, see there are a few golden documents you really want, and then they ask you to pay.

SNEWPapers

@joshua_benton Thanks for pointing out the "free trial thing." I'll fix that right away. Yesterday I made it so that once you authenticate, you can see the summary info in /today-in-history, and today I made it so that you don't need a credit card to start the free trial. I'll update the language on the free trial banner, though; that's not a good flow at all! I'll look into the free searches option as well.

It's just LOC at the moment, and there are still 20 million more pages to pull from there... What other sources are there? Any that aren't on this list? https://en.wikipedia.org/wiki/Wikipedia:List_of_online_newspaper_archives#United_States

For the data pipeline:

I'm using various PaddlePaddle models for the initial "feel", i.e. their orientation and layout models with custom class weights, but on complex documents even their state-of-the-art models sometimes get it way off (especially their reading-order predictions on tight columns). So the models are really just a starting point. There are about 30k lines of Python with logic and heuristics to massage their output into my best guess at how a human would read the page. Their English OCR model is pretty good, and I use their vLLM for extracting tabular data. The vLLM is also decent at text, but it loses the x,y coordinates, which are useful downstream. LLMs then give feedback on my guesses for semantic continuity, and later there are dozens of prompts for connecting the dots and extracting metadata and summaries.
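A common baseline for reading order on multi-column pages is to group text boxes into columns by horizontal overlap, read the columns left to right, and read each column top to bottom. The sketch below shows that baseline with hypothetical `Box`, `group_into_columns`, and `reading_order` names; it is not the SNEWPapers pipeline, which layers far more heuristics on top of the layout-model output. It also shows why x,y coordinates matter downstream: without them there is nothing to sort on.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Hypothetical text region: (x0, y0) top-left, (x1, y1) bottom-right, in pixels."""
    x0: float
    y0: float
    x1: float
    y1: float
    text: str


def horizontal_overlap(a: Box, b: Box) -> float:
    """Fraction of the narrower box's width that overlaps the other box horizontally."""
    overlap = min(a.x1, b.x1) - max(a.x0, b.x0)
    narrower = min(a.x1 - a.x0, b.x1 - b.x0)
    return max(0.0, overlap) / narrower if narrower > 0 else 0.0


def group_into_columns(boxes: list[Box], min_overlap: float = 0.5) -> list[list[Box]]:
    """Greedily put each box into the first column it overlaps enough, else start a new one."""
    columns: list[list[Box]] = []
    for box in sorted(boxes, key=lambda b: b.x0):
        for column in columns:
            if any(horizontal_overlap(box, other) >= min_overlap for other in column):
                column.append(box)
                break
        else:
            columns.append([box])
    return columns


def reading_order(boxes: list[Box], min_overlap: float = 0.5) -> list[Box]:
    """Columns left to right, boxes within each column top to bottom."""
    columns = group_into_columns(boxes, min_overlap)
    columns.sort(key=lambda column: min(b.x0 for b in column))
    ordered: list[Box] = []
    for column in columns:
        ordered.extend(sorted(column, key=lambda b: b.y0))
    return ordered


if __name__ == "__main__":
    page = [
        Box(310, 40, 590, 90, "B1"),
        Box(10, 40, 290, 90, "A1"),
        Box(10, 100, 290, 150, "A2"),
        Box(310, 100, 590, 150, "B2"),
    ]
    print([b.text for b in reading_order(page)])  # ['A1', 'A2', 'B1', 'B2']
```

This baseline breaks on headlines that span several columns (a wide box can merge two columns into one) and on articles that jump between columns or pages, which is where hand-written heuristics and LLM continuity checks have to take over.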

I'm using Grok as my main LLM for two reasons: a) cheaper tokens, and b) (HUGE) they don't censor speech, and there's a lot of stuff in the old papers that would likely get me kicked off another provider (even though this data sits on taxpayer-funded government websites). I also don't have the hardware to run my own model locally.

I use OpenAI only for embeddings (0.01% of overall cost), since Grok doesn't provide an API for those yet.
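Semantic search over embeddings usually comes down to ranking documents by cosine similarity to the query's embedding. A minimal brute-force sketch follows; the three-dimensional vectors and article ids are made up for illustration (real embeddings from an embedding API have hundreds or thousands of dimensions), and at millions of documents you would use an approximate-nearest-neighbor index rather than scoring every document.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], docs: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Score every document against the query and return the k best, best first."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical toy embeddings standing in for real article vectors.
DOCS = {
    "ship-arrival": [0.9, 0.1, 0.0],
    "grain-prices": [0.1, 0.9, 0.1],
    "harbor-fire": [0.7, 0.2, 0.3],
}

if __name__ == "__main__":
    query = [1.0, 0.0, 0.0]  # hypothetical embedding of a query like "ships coming into port"
    for doc_id, score in top_k(query, DOCS, k=2):
        print(f"{doc_id}: {score:.3f}")  # ship-arrival first, then harbor-fire
```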

Incredible scale!

You mentioned training the model to handle degraded paper and faded ink. Google famously used reCAPTCHA v1 for the same problem, having millions of users unknowingly label words from old NYT archives. How have you coped with this issue?

SNEWPapers

@oleksandr_utkin Haha, I didn't know about the reCAPTCHA story; that's very clever. There are quite a few decent open-source OCR tools out there. A lot of getting them to work right is first understanding their settings and limitations (the ideal DPI for character recognition, GPU settings for different aspect ratios), then using LLM and vLLM tech to help clean things up, then human verification, which you could turn into a reinforcement loop for transfer learning on an open-weights model.
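Two of those steps are easy to sketch. Rescaling a scan to a target resolution is simple arithmetic: multiply the pixel dimensions by target DPI over source DPI (300 DPI is a common rule of thumb for OCR, used here as an assumption rather than a figure from this thread). The human-verification loop can start as a confidence threshold that accepts confident OCR lines, queues the rest for review, and keeps each correction as a training pair. All names below are hypothetical, and `line_id` stands in for whatever image crop you would actually pair with the corrected text.

```python
from dataclasses import dataclass, field


def rescale_size(width_px: int, height_px: int, source_dpi: float, target_dpi: float = 300) -> tuple[int, int]:
    """Pixel dimensions an image needs so its text renders at target_dpi."""
    scale = target_dpi / source_dpi
    return round(width_px * scale), round(height_px * scale)


@dataclass
class ReviewQueue:
    """Hypothetical human-in-the-loop queue for low-confidence OCR lines."""
    threshold: float = 0.85
    pending: list[tuple[str, str]] = field(default_factory=list)
    training_pairs: list[tuple[str, str]] = field(default_factory=list)

    def route(self, line_id: str, text: str, confidence: float) -> str:
        """Accept confident lines; queue everything else for a human reviewer."""
        if confidence >= self.threshold:
            return "accepted"
        self.pending.append((line_id, text))
        return "needs_review"

    def record_correction(self, line_id: str, corrected_text: str) -> None:
        """Drop a line from the queue and keep the human fix for later fine-tuning."""
        self.pending = [item for item in self.pending if item[0] != line_id]
        self.training_pairs.append((line_id, corrected_text))


if __name__ == "__main__":
    print(rescale_size(2000, 3000, source_dpi=150))  # (4000, 6000)
    queue = ReviewQueue(threshold=0.9)
    print(queue.route("p1-l7", "The packet ship arrived", 0.97))   # accepted
    print(queue.route("p1-l8", "yesterdav from Liverpo0l", 0.42))  # needs_review
    queue.record_correction("p1-l8", "yesterday from Liverpool")
    print(queue.training_pairs)  # [('p1-l8', 'yesterday from Liverpool')]
```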

@brett_shinnebarger Truly impressive work; the engineering depth here is rare to see on a launch. A practical question: how much disk space does the full archive take up after extraction?

SNEWPapers

@oleksandr_utkin I appreciate you saying that! A million high-res images plus all the processing artifacts come to about 6 TB in S3: big but manageable, and easy enough to scale to petabytes without much effort.
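The 6 TB figure works out to about 6 MB per page once artifacts are included. Assuming decimal units and that the per-page footprint stays the same, the 20 million pages still to pull from LOC would add roughly 120 TB. This is a rough extrapolation, not a number stated in the thread.

```python
# Rough storage extrapolation from the figures in this thread.
# Assumptions: decimal units (1 TB = 10**12 bytes) and a constant
# per-page footprint (image plus processing artifacts).
TB = 10**12
CURRENT_BYTES = 6 * TB
CURRENT_PAGES = 1_000_000

bytes_per_page = CURRENT_BYTES / CURRENT_PAGES  # about 6 MB per page


def projected_tb(pages: int, per_page: float = bytes_per_page) -> float:
    """Terabytes needed for `pages` more pages at the current average footprint."""
    return pages * per_page / TB


if __name__ == "__main__":
    print(f"{bytes_per_page / 10**6:.1f} MB per page")              # 6.0 MB per page
    print(f"{projected_tb(20_000_000):.0f} TB for 20M more pages")  # 120 TB for 20M more pages
```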

Honestly, this is quite cool!

Do you plan to expand the newspaper libraries to other countries?

SNEWPapers

@markocki Thank you! I just opened up the "Today in History" summary page a moment ago for any authed-but-not-subscribed users (only the full extraction details are behind the subscription wall now), so feel free to check that out! There are plenty more US papers to get to first, but the UK would probably be easy as well, as would other languages that read left-to-right and use Latin character sets. Data validation is also harder when you don't know the language, but it's all possible.

Cool!

What APIs did you use to scrape all the newspaper archives?

SNEWPapers

@akash_mahtani Good one! They don't exactly make it easy, but it does work: https://www.loc.gov/apis/
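For anyone wanting to try the same source, a search against the LOC API can be sketched with the standard library alone. The endpoint path and the `fo`, `c`, and `sp` parameters are my reading of the loc.gov API documentation, and the `title`/`date`/`url` result fields are assumptions about the response shape; check both against https://www.loc.gov/apis/. This is not the crawler SNEWPapers uses.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumption: Chronicling America is searchable through this loc.gov collection
# endpoint, with fo=json for JSON output, c for results per page, and sp for the
# page number. Verify against https://www.loc.gov/apis/ before relying on it.
BASE_URL = "https://www.loc.gov/collections/chronicling-america/"


def search_url(query: str, page: int = 1, per_page: int = 25) -> str:
    """Build a JSON search URL for one page of results."""
    params = {"q": query, "fo": "json", "c": per_page, "sp": page}
    return f"{BASE_URL}?{urlencode(params)}"


def fetch_json(url: str, timeout: float = 30.0) -> dict:
    """Download and decode one JSON response (makes a network call)."""
    with urlopen(url, timeout=timeout) as response:
        return json.load(response)


def summarize(payload: dict) -> list[tuple]:
    """Pull (title, date, url) from each result; the field names are assumptions."""
    return [
        (item.get("title"), item.get("date"), item.get("url"))
        for item in payload.get("results", [])
    ]


if __name__ == "__main__":
    print(search_url("sliced bread"))
```

To hit the live API, call `summarize(fetch_json(search_url(...)))`, and pause between requests, since loc.gov applies rate limits to API traffic.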

minimalist phone: creating folders

Can someone else submit newspapers?

SNEWPapers

@busmark_w_nika I hadn't thought of this, but it's a good idea. If there are high-res images in the public domain, we could create a request process to add them to our pipeline. Ideally it wouldn't be just one issue but the paper's whole history that we could grab. Even better, other archives that want to use our tech could ask us to process their entire dataset, and we'd host the data and provide SSO for their users. There are a lot of them out there... https://en.wikipedia.org/wiki/Wikipedia:List_of_online_newspaper_archives#United_States

minimalist phone: creating folders

@brett_shinnebarger I think it's a difficult process, though, because some newspapers would need to be of better quality. But imagine having them from every country (the biggest database)! :)

SNEWPapers

@busmark_w_nika You'd be surprised how well we can process all but the really, really degraded papers, i.e. bad scans, ripped papers, missing pieces, or text so faded that even a human would struggle with it. The free English-language US papers alone would probably get SNEWPapers to 500 million stories or more. Other languages would be possible too, but they bring additional OCR-related and technical challenges.

minimalist phone: creating folders

@brett_shinnebarger I mean, such a database would be worth a lot. Plus, imagine how many AI models could be trained on it; you could sell licences to big companies.

I have a crazy idea: there are many lost treasures in the world that were never found. In theory, if all printed materials (newspapers, books, etc.) from those times and countries were digitized, then AI could help find them. Do you think this is realistic?

SNEWPapers

@natalia_iankovych Google has scanned millions of books, there are probably ship logbooks lying around in places all over the world, and newspapers might play a role too! You'd need a super-duper massive graph database or something like that!

@brett_shinnebarger Yes, the task is very difficult. But someone will find the treasure. It’s just a ready-made script for a movie! :)

SNEWPapers

@natalia_iankovych all we need now is a montage