Launched this week

Stablecoin Health Index

The Credit Rating Agency for Stablecoins

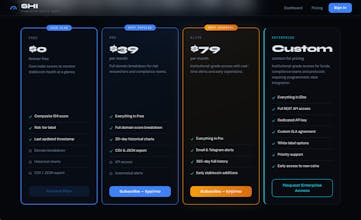

Track stablecoin risk in real time. SHI scores USDC, USDT, DAI, PYUSD, RLUSD and FDUSD daily across peg stability, collateral integrity and on-chain flows. Free forever.

Huge congratulations on launching Stablecoin Health Index. The collapse of UST and the temporary depegging of USDC proved that treating all stablecoins as entirely risk-free is a dangerous assumption. Having a traditional rating system is exactly what the DeFi space needs for mature risk management.

Looking at your methodology spanning peg stability, structural integrity, and flow risk, I am really curious about how your scoring algorithm weights off-chain attestation data versus on-chain verifiable collateral. Specifically, how does the model mathematically penalize opaque custodian reporting compared to transparent but potentially volatile crypto-backed reserves?

Wishing you a highly successful launch day!

Hi @silaswright

Thank you so much, really appreciate the kind words and what a great question!

You've hit on one of the most interesting tensions in the whole model. The short version is that opacity is penalised harder than volatility. Here's why:

For custodian exposure we score based on how concentrated the risk is with a single off-chain entity. USDT, for example, carries a higher custodian penalty than DAI precisely because, if Tether's banking relationship breaks down, you have no on-chain mechanism to verify or recover the reserves. With DAI the collateral is messy and volatile but you can verify every dollar of it on-chain at any time - that transparency is worth a lot in our model.
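To make the concentration idea concrete, here is a minimal illustrative sketch. It is not the actual SHI formula: the function name `custodian_penalty`, the `max_penalty` cap, and the choice of a Herfindahl-style concentration measure are all assumptions made for illustration, and the example inputs are hypothetical placeholders rather than real reserve data.

```python
def custodian_penalty(custodian_shares, offchain_fraction, max_penalty=30.0):
    """Illustrative penalty for off-chain custodian concentration.

    custodian_shares: dict mapping a (hypothetical) custodian name to the
        amount of reserves it holds, in any consistent unit.
    offchain_fraction: share of total reserves held off-chain, in [0, 1].
    max_penalty: assumed cap on the penalty, in score points.
    """
    if not 0.0 <= offchain_fraction <= 1.0:
        raise ValueError("offchain_fraction must be between 0 and 1")

    total = sum(custodian_shares.values())
    if total <= 0:
        # Nothing held with off-chain custodians: no custodian penalty.
        return 0.0

    # Herfindahl-style concentration: 1.0 when a single custodian holds
    # everything, approaching 0 as holdings spread across many custodians.
    concentration = sum((amount / total) ** 2
                        for amount in custodian_shares.values())

    # Scale by how much of the reserve is off-chain in the first place.
    return max_penalty * offchain_fraction * concentration


# Hypothetical inputs, for illustration only.
single = custodian_penalty({"custodian_a": 100}, offchain_fraction=1.0)
split = custodian_penalty({"custodian_a": 50, "custodian_b": 50},
                          offchain_fraction=1.0)
onchain = custodian_penalty({"custodian_a": 100}, offchain_fraction=0.0)
print(single, split, onchain)
```

Under these assumptions a coin whose reserves sit entirely with one off-chain custodian takes the full penalty, splitting the same reserves evenly across two custodians halves it, and fully on-chain collateral takes none.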

Attestation frequency feeds into structural integrity separately - a stablecoin that publishes monthly attestations gets penalised more than one publishing daily or in real time because the gap between reports is essentially a blind spot.
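The "blind spot" intuition can be sketched as a penalty that grows with the gap between attestations. This is only a sketch under stated assumptions, not the published methodology: the name `attestation_gap_penalty`, the linear shape, the 90-day `cap_days`, and the 20-point `max_penalty` are all hypothetical choices.

```python
def attestation_gap_penalty(gap_days, cap_days=90.0, max_penalty=20.0):
    """Illustrative penalty for the time between reserve attestations.

    gap_days: days between consecutive attestations (0 for real-time proof).
    cap_days: assumed gap at which the penalty stops growing.
    max_penalty: assumed maximum penalty, in score points.
    """
    if gap_days < 0:
        raise ValueError("gap_days must be non-negative")

    # Linear growth in the blind-spot length, capped at max_penalty.
    return max_penalty * min(gap_days / cap_days, 1.0)


# Real-time, daily and monthly reporting cadences.
for label, gap in [("real-time", 0), ("daily", 1), ("monthly", 30)]:
    print(label, round(attestation_gap_penalty(gap), 2))
```

A linear ramp is the simplest shape that preserves the ordering described above (monthly penalised more than daily, daily more than real time); a real model might well prefer a non-linear curve.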

The philosophy behind it is simple - known risk is manageable, unknown risk is dangerous. UST looked fine right up until it didn't, partly because the opacity of its reserve mechanics meant the warning signs weren't visible until it was too late.

Would love to refine the weighting further as we gather more data - planning to publish the full methodology soon so the community can scrutinise and challenge it. That kind of transparency feels important for a tool that's essentially asking people to trust its scores!