Cekura

Automated QA for Voice AI and Chat AI agents

1.8K followers

Cekura enables Conversational AI teams to automate QA across the entire agent lifecycle—from pre-production simulation and evaluation to monitoring of production calls. We also support seamless integration into CI/CD pipelines, ensuring consistent quality and reliability at every stage of development and deployment.

This is the 3rd launch from Cekura.

Launched this week

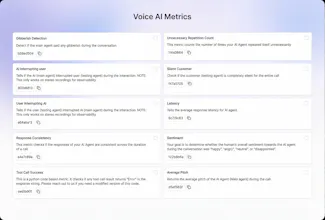

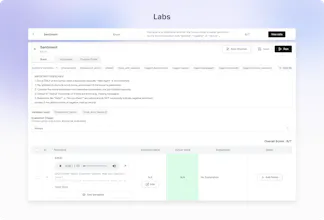

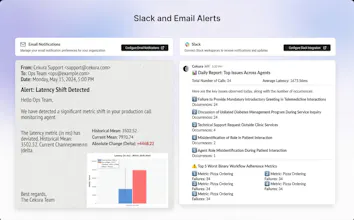

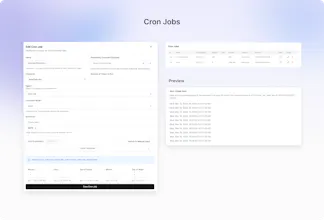

Get 30+ predefined metrics out of the box for analyzing CX, accuracy, conversation quality, and voice quality. Compile perfect LLM judges by annotating just ~20 conversations, then auto-improve them in Cekura labs. Use real-time, segmented dashboards to identify trends in your Conversational AI. Smart statistical alerts notify you only when metrics shift from historical baselines. Automated system pings catch silent production failures.
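To make the idea of alerting only on shifts from a historical baseline concrete, here is a minimal sketch using a simple z-score check. It is not Cekura's actual alerting logic, which the listing does not describe. The function name `should_alert`, its parameters, and the threshold values are all hypothetical and chosen for illustration.

```python
from statistics import mean, stdev


def should_alert(history, current, z_threshold=3.0, min_samples=5):
    """Return True when `current` deviates strongly from the baseline.

    Hypothetical helper: `history` is a list of past values of one metric
    (for example, a daily task-completion rate) and `current` is the
    newest value. A z-score test is assumed here; a real system may use
    a different statistical method.
    """
    if len(history) < min_samples:
        # Too little history to form a baseline, so stay quiet.
        return False

    baseline = mean(history)
    spread = stdev(history)  # sample standard deviation

    if spread == 0:
        # A perfectly flat history: any change at all is a shift.
        return current != baseline

    z_score = abs(current - baseline) / spread
    return z_score >= z_threshold


# Usage: a stable metric that suddenly drops.
past_rates = [0.90, 0.91, 0.89, 0.92, 0.90, 0.91]
drop_detected = should_alert(past_rates, 0.70)
normal_day = should_alert(past_rates, 0.91)
```

Comparing against the metric's own spread, instead of a fixed cutoff, means a naturally noisy metric needs a larger move to trigger a notification than a stable one does.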

Cekura

Huge day for the Cekura team! 🚀 As a Founding AI Engineer here, launching this feels like a massive milestone.

The reality of Voice and Chat AI right now is that the tooling has lagged behind the models. We built Cekura because we knew that spot-checking a fraction of your calls just doesn't cut it anymore. Finding out your agent went rogue or dropped a compliance step days after the fact is brutal. Cekura exists to finally turn that chaotic wall of production data into clear, actionable truth.

On the technical side, I spent my time deep in the architecture of our metric feedback pipeline and structured simulations. Together, these features replace "vibes-based" prompt tweaking with auto-compiled LLM judges, and they continuously mimic production traffic to catch silent infrastructure drops as they happen.

Really excited for you all to try it out. Let us know what you think of the new features! 🙌

Cekura

Cekura 🚀🚀🚀

A lot more to come!