Cekura

Automated QA for Voice AI and Chat AI agents

1.8K followers

Cekura enables Conversational AI teams to automate QA across the entire agent lifecycle—from pre-production simulation and evaluation to monitoring of production calls. We also support seamless integration into CI/CD pipelines, ensuring consistent quality and reliability at every stage of development and deployment.

This is the 3rd launch from Cekura.

Cekura

Launched this week

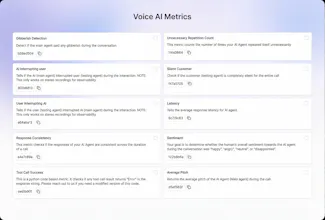

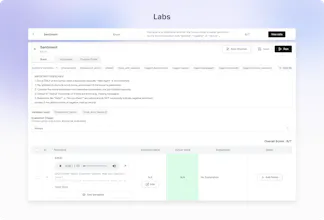

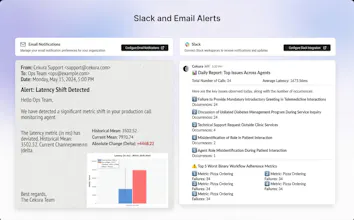

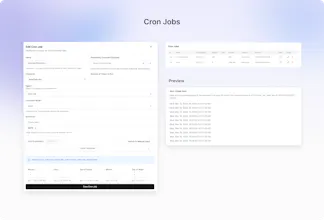

30+ predefined metrics out of the box for analyzing CX, accuracy, conversation quality, and voice quality. Compile perfect LLM judges by annotating just ~20 conversations, then auto-improve them in Cekura labs. Real-time, segmented dashboards to identify trends in Conversational AI. Smart statistical alerts that notify you only when metrics shift from historical baselines. Automated system pings to catch silent production failures.
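To make the idea of alerting only on a shift from a historical baseline concrete, here is a minimal sketch. It is not Cekura's implementation: the function name `shifted_from_baseline`, the z-score rule, and the threshold of 3 standard deviations are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def shifted_from_baseline(history, current, z_threshold=3.0):
    """Return True when `current` is more than `z_threshold` sample standard
    deviations away from the mean of `history`.

    Hypothetical helper for illustration only; a simple z-score check,
    not any vendor's actual alerting logic.
    """
    if len(history) < 2:
        return False  # not enough data to estimate a spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly flat baseline: any change at all counts as a shift.
        return current != mu
    return abs(current - mu) / sigma > z_threshold


# Made-up daily average latencies (seconds) used as the baseline.
latency_history = [1.1, 1.0, 1.2, 0.9, 1.1, 1.0, 1.2]

print(shifted_from_baseline(latency_history, 1.15))  # within normal variation
print(shifted_from_baseline(latency_history, 2.4))   # far outside the baseline
```

With a rule like this, ordinary day-to-day noise stays quiet and only a value well outside the historical spread triggers a notification.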

Free Options

Do you have auto-debugging features and MCP support for production issues?

Cekura

@pranayr0 Yes, we have Cekura MCP. We also have skills in Claude Code that you can use to improve test cases or metrics.

OpenFunnel(YC F24)

This is great, especially the out-of-the-box metrics. Which ones do people use most in prod?

Cekura

@aditya_lahiri Tool call accuracy and expected outcome are core - they tell you if the agent actually did the job. Latency comes next, since delays quickly break real-time UX.

For voice agents, interruption metrics (AI interrupting user, user interrupting AI, interruption evaluation) plus silence duration are very useful too.
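As a rough illustration of what interruption and silence metrics measure, the sketch below counts them from timestamped speaker turns. It is an assumption-laden example, not Cekura's method: the `Turn` structure, the function name `interruption_and_silence`, and the rule "a turn that starts before the other speaker's previous turn ends is an interruption" are all hypothetical, and only adjacent turns are compared.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    speaker: str  # "ai" or "user" (hypothetical labels)
    start: float  # seconds from call start
    end: float    # seconds from call start


def interruption_and_silence(turns):
    """Count interruptions in each direction and sum silent gaps.

    Only adjacent turns (ordered by start time) are compared, so nested or
    multi-way overlaps are not handled in this simplified sketch.
    """
    ordered = sorted(turns, key=lambda t: t.start)
    ai_interrupts = 0
    user_interrupts = 0
    silence = 0.0
    for prev, cur in zip(ordered, ordered[1:]):
        if cur.speaker != prev.speaker and cur.start < prev.end:
            # The new speaker started while the other was still talking.
            if cur.speaker == "ai":
                ai_interrupts += 1
            else:
                user_interrupts += 1
        elif cur.start > prev.end:
            # Nobody was speaking between the two turns.
            silence += cur.start - prev.end
    return {
        "ai_interrupting_user": ai_interrupts,
        "user_interrupting_ai": user_interrupts,
        "silence_seconds": silence,
    }


# Made-up call: the AI cuts in at 2.5 s, then a 1.5 s pause, then the user cuts in.
call = [
    Turn("user", 0.0, 3.0),
    Turn("ai", 2.5, 6.0),
    Turn("user", 7.5, 9.0),
    Turn("ai", 9.0, 11.0),
    Turn("user", 10.0, 12.0),
]
result = interruption_and_silence(call)
print(result)
```

A production metric would likely also need to separate genuine interruptions from brief backchannels such as "mm-hm", which this sketch does not attempt.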

Cekura

We are thrilled to share Cekura Monitoring with the PH community!

Most teams focus solely on whether a voice AI agent reaches the 'correct' outcome, but they often overlook the nuances that actually define the user experience: tone, transcription accuracy, TTS quality, and pronunciation.

While working on scaling to handle thousands of parallel calls, we realized just how easily these small details can degrade at volume. Cekura was built to ensure your agents don't just work, but sound perfect.

Check out the product and let us know what you think!

Cekura

One of the most common issues we see voice agent makers run into is an agent that keeps interrupting the caller. It's frustrating for users and easy to miss during development. With our interruption metric, teams can catch this early and fix it before it reaches real users. That's just one of the many predefined metrics we offer out of the box. Try it now!

Cekura

So excited to see this live! 🎉

Been working closely on Cekura's monitoring features and what makes this special is how much it closes the loop for conversational AI teams — you're not just testing in pre-prod and hoping for the best, you're getting visibility into what's actually happening in production calls.

This one's been a long time coming! 🚀

Cekura

Really excited to see this out 🎉

Working on alerting and simulation quality made it clear how hard it is to catch subtle regressions early—this is a big step toward making that reliable in production.

Glad to finally have this live 🚀

Struct

This is a massive launch for such a critical problem in conversational agents today. Curious, what are the most important metrics tracked by customers in the healthcare space?

Cekura

@nimeshmc Thanks! Healthcare is one of our most active verticals. Expected outcome is critical - did the agent follow required protocols like HIPAA disclaimers, consent, and verification steps?

Hallucination detection is equally important - the agent must not invent symptoms, dosages, or medical advice.