Happy Wednesday! Don’t forget to subscribe to Maker Stacks. This week, we are interviewing serial founder and Product Hunt community veteran Mubs on his tech stack.

Here’s some news:

🧵 Meta just showed off Threads fediverse integration for the first time.

🌷 Segway just announced a sub-$1,000 smart lawnmower.

🤖 After raising $1.3 billion, the Inflection founders are joining Microsoft to lead its AI efforts.

Come hang with the Product Hunt team, Mercury, and Jam.dev at our next event on April 9th. Meet the team, chat with makers, and discover some of the coolest AI products being built today, all while vibing over some good food and good drinks.

Spots filled up fast for our last event, so be quick!

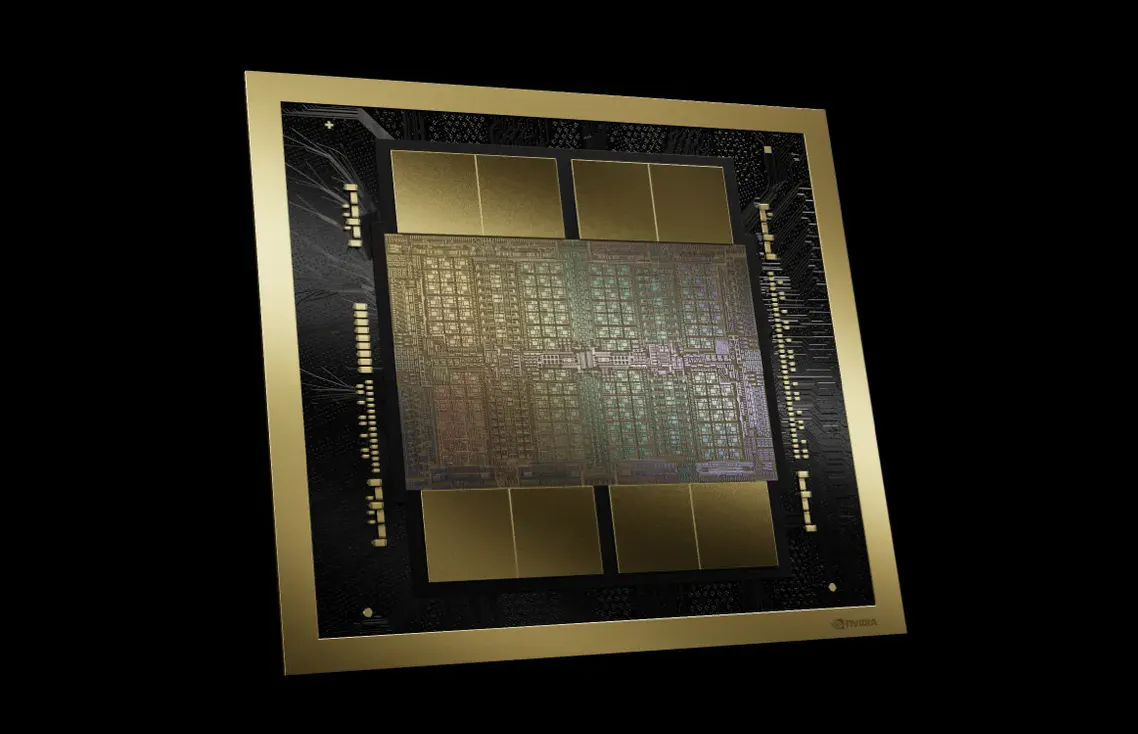

Nvidia just announced new chips to make AI even more powerful

When you think of AI, your mind might immediately go to consumer-facing apps like ChatGPT, Sora, and others. But like everything else in tech, there’s tons going on under the hood (namely chips) and a few companies have stood out amongst the crowd.

Nvidia is one of those standout companies that’s riding the AI wave to untold levels of success, even surpassing both Alphabet (Google) and Amazon in market cap at one point thanks in large part to its AI chip manufacturing efforts, particularly the H100 GPU.

Now, the company plans to cement its lead even further with its new B200 GPU and GB200 “superchip.”

Announced yesterday at an event dubbed the “AI Woodstock,” the B200 can offer up to 20 petaflops of FP4 horsepower from its 208 billion transistors. The GB200, which pairs two of those GPUs with a single Grace CPU, could potentially offer up to 30 times more performance for LLM inference while being a lot more efficient, according to Nvidia.

To put that into perspective, the H100 GPU, which was considered the industry standard for AI prior to this, has around 80 billion transistors.

What does all that mean? Well, it means that for the first time in my life, I can confidently say a number with a billion after it is small relative to another.

Secondly, it’s a lot of tech speak to say these chips are really powerful. How powerful? According to Nvidia, training a 1.8 trillion parameter model on the company’s previous line of chips would have taken 10,000 GPUs and 15 megawatts of power. Swap out the old for the new, and that reduces to 2,000 GPUs and just 4 megawatts of power.
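If you want to sanity-check those numbers yourself, here is a quick back-of-the-envelope sketch in Python. It only uses the figures quoted above (as attributed to Nvidia); the variable names are made up for illustration, and the per-GPU power figure is a rough average derived from those totals, not an official spec.

```python
# Figures quoted in this newsletter, as attributed to Nvidia.
old = {"gpus": 10_000, "megawatts": 15}  # previous generation of chips
new = {"gpus": 2_000, "megawatts": 4}    # new chips

# How many times fewer GPUs and how much less total power the new setup needs.
gpu_reduction = old["gpus"] / new["gpus"]
power_reduction = old["megawatts"] / new["megawatts"]

# Rough average power per GPU in kilowatts (total power / GPU count).
# This is a derived estimate, not a published spec.
old_kw_per_gpu = old["megawatts"] * 1000 / old["gpus"]
new_kw_per_gpu = new["megawatts"] * 1000 / new["gpus"]

# Transistor counts in billions: new chip vs. the H100's roughly 80 billion.
transistor_ratio = 208 / 80

print(f"GPUs needed: {gpu_reduction:.2f}x fewer")
print(f"Total power: {power_reduction:.2f}x less")
print(f"Average kW per GPU: {old_kw_per_gpu:.2f} (old) vs {new_kw_per_gpu:.2f} (new)")
print(f"Transistors: {transistor_ratio:.2f}x more")
```

One thing the math surfaces: going by these totals, each new chip appears to draw more power on average than an old one. The savings come from needing far fewer of them.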

According to Nvidia’s CEO, Jensen Huang, the chips will cost somewhere between $30,000 and $40,000. So yeah, it’s safe to say that you and I won’t be buying one to power our ChatGPT-wrapper side projects anytime soon.

Build agents, automations, and integrations with Tines

Tines’ intelligent workflow platform combines deterministic automation, AI, and human-led steps so you can run workflows you trust in production. With Tines' new Starter Edition, intelligent workflow automation your team can trust is more accessible than ever before.

With Starter Edition, lean teams get:

Get started for free today with Community Edition, and upgrade when you're ready. We can't wait for you to experience the joy of building with Tines!

- Stability AI just released a new text-to-3D video AI model.

- ThinkAny is a search engine that uses AI to curate higher-quality content.

- Inner Vision Pro is a Vision Pro app for your mental health.
