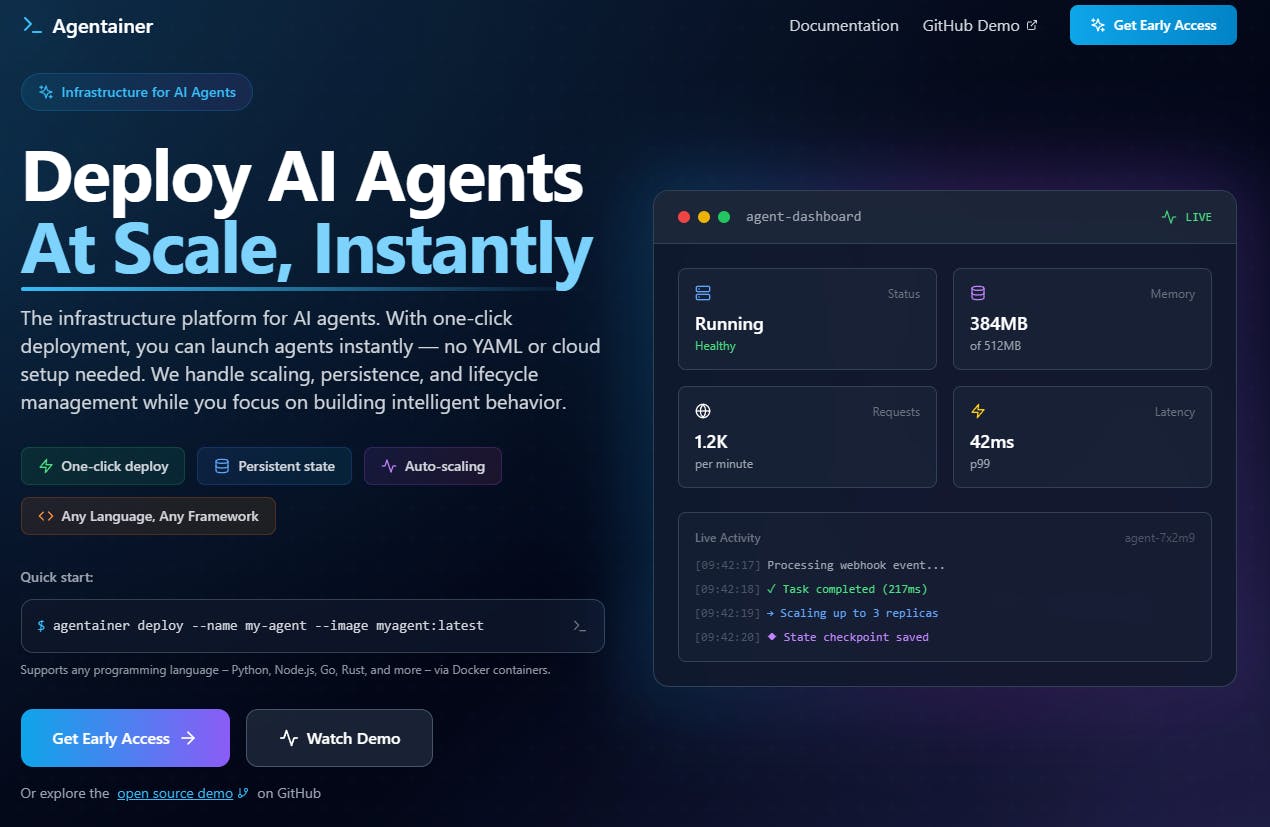

How We Built a Solution That Runs Long-Lived LLM Agents

Introduction

Most cloud platforms (AWS, GCP, Azure) are optimized for stateless web apps or short-lived serverless functions. But deploying long-lived, stateful LLM agents is another beast entirely. You need durability, resilience, and observability. When we tried to push our own multi-agent AI system to production, we hit a wall: the infrastructure work was complex, took hours to set up, and the result was still unstable.

So we built Agentainer-Lab (GitHub), a local runtime architecture designed specifically for long-running autonomous agents.

Website: Agentainer.io

(Users who sign up for early access will get to try the production-grade service for free.)

The Problem with Naive Docker Setups

Here’s what most developers try first:

    # docker-compose.yml
    services:
      agent:
        image: my-agent-image:latest
        ports:
          - "5000:5000"
        restart: always

This works, until it doesn’t. Here’s what goes wrong:

No snapshot of internal agent state after restart

Restart loops silently fail if Docker crashes

No observability/logging without extra setup

No clean API endpoint mapping per agent

We needed something better.

Core Requirements

24/7 runtime

Resilient auto-restart

Dynamic agent API mounting

Redis for runtime memory

PostgreSQL for long-term snapshots

Native Docker support (no K8s locally)

Supervisor: the Go-Based Agent Manager

At the heart of Agentainer-Lab is the supervisor. It’s a Go service that listens to Docker events and acts as an agent lifecycle orchestrator.

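    import (
        "context"

        "github.com/docker/docker/api/types"
        "github.com/docker/docker/client"
    )

    func watchDockerEvents(ctx context.Context) error {
        cli, err := client.NewClientWithOpts(client.FromEnv)
        if err != nil {
            return err
        }
        // Events returns a message channel and an error channel.
        msgs, errs := cli.Events(ctx, types.EventsOptions{})
        for {
            select {
            case msg := <-msgs:
                if msg.Type == "container" && msg.Action == "die" {
                    handleAgentCrash(msg.Actor.ID)
                }
            case err := <-errs:
                return err
            }
        }
    }

    func handleAgentCrash(containerID string) {
        agentID := lookupAgentID(containerID)
        latestSnapshot := loadFromPostgres(agentID)
        restartAgent(agentID, latestSnapshot)
    }

This lets us handle crashes gracefully, and more importantly, lets us track them.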

State Architecture: Redis + PostgreSQL

We split state by how long it needs to live:

Redis: stores ephemeral data like agent heartbeat, in-flight tokens, retry flags.

Postgres: stores agent code metadata and full snapshots.

    CREATE TABLE agent_snapshots (
        id UUID PRIMARY KEY,
        agent_id UUID NOT NULL,
        snapshot JSONB NOT NULL,
        created_at TIMESTAMPTZ DEFAULT now()
    );

Snapshots can be saved periodically from within the agent logic or externally via /snapshot API calls.

API Routing

We use Gin (Go framework) to dynamically expose each agent via REST or gRPC endpoints.

    router.POST("/:agentId/:path", handleAgentRequest)

    // handleAgentRequest extracts the agent ID and route from the URL
    // and hands the request to the matching agent.
    func handleAgentRequest(c *gin.Context) {
        agentId := c.Param("agentId")
        route := c.Param("path")
        forwardToAgent(agentId, route, c.Request)
    }

This gives us clean routes like:

    POST /agent-123/process

The supervisor then reroutes the request internally to the correct container’s port.

Docker Runtime Per Agent

When we deploy an agent:

    docker run \
      --name agent-abc \
      -p 6000:5000 \
      -e AGENT_ID=abc \
      -v agent_data:/app/data \
      my-agent:latest

Each agent gets:

Dedicated network port

Tokenized API key

Isolated volume

Retry + restart config

This creates isolation without requiring a full orchestrator like K8s.

Crash Recovery Flow

When Docker fires a die event:

We detect it in the supervisor

Check Redis → mark agent as unhealthy

Pull latest snapshot from Postgres

Re-spin container with restored snapshot loaded via startup command or /restore endpoint
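The last three steps of this flow can be sketched as a single function. The three interfaces below are hypothetical seams for Redis, Postgres, and Docker, not Agentainer-Lab's real types, and the in-memory fakes exist only so the example runs without those services.

```go
package main

import (
	"errors"
	"fmt"
)

// Hypothetical seams for Redis, Postgres, and Docker respectively.
type healthStore interface {
	MarkUnhealthy(agentID string) error
}
type snapshotStore interface {
	Latest(agentID string) ([]byte, error)
}
type containerRuntime interface {
	Start(agentID string, snapshot []byte) error
}

// recoverAgent marks the agent unhealthy, loads its latest snapshot,
// and restarts it with that snapshot, stopping at the first failure.
func recoverAgent(agentID string, h healthStore, s snapshotStore, r containerRuntime) error {
	if err := h.MarkUnhealthy(agentID); err != nil {
		return fmt.Errorf("mark unhealthy: %w", err)
	}
	snap, err := s.Latest(agentID)
	if err != nil {
		return fmt.Errorf("load snapshot: %w", err)
	}
	if err := r.Start(agentID, snap); err != nil {
		return fmt.Errorf("restart: %w", err)
	}
	return nil
}

// In-memory fakes so the sketch runs without Redis, Postgres, or Docker.
type fakeHealth struct{ unhealthy map[string]bool }

func (f *fakeHealth) MarkUnhealthy(id string) error {
	f.unhealthy[id] = true
	return nil
}

type fakeSnapshots map[string][]byte

func (f fakeSnapshots) Latest(id string) ([]byte, error) {
	snap, ok := f[id]
	if !ok {
		return nil, errors.New("no snapshot for " + id)
	}
	return snap, nil
}

type fakeRuntime struct{ started map[string]string }

func (f *fakeRuntime) Start(id string, snap []byte) error {
	f.started[id] = string(snap)
	return nil
}

func main() {
	h := &fakeHealth{unhealthy: map[string]bool{}}
	s := fakeSnapshots{"abc": []byte(`{"step":3}`)}
	r := &fakeRuntime{started: map[string]string{}}

	fmt.Println(recoverAgent("abc", h, s, r), r.started["abc"])
	fmt.Println(recoverAgent("missing", h, s, r))
}
```

Wrapping each error with the step name makes it clear from the supervisor's logs which stage of recovery failed.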

Sample Use Case

Let’s say you build a scheduling agent that sends email summaries every day at 9 AM. It reads feeds, generates text using GPT, and emails via SendGrid.

The agent logic handles time-based triggers itself. But you still need:

Persistent runtime

Logging

Crash resilience

Daily summary logs (stored in Redis)

All of this is auto-managed via Agentainer-Lab. And you can restart it with a single Docker call.

Known Limitations

No container pool yet (one agent = one container)

Limited snapshot versioning (for now)

No inter-agent messaging (coming soon)

Basic WebSocket logging only (Grafana/log aggregation later)

Future Features (Soon available on Agentainer.io)

Auto-scaling: Scale with the workload by load-balancing across containers. All agents share the same memory through our state persistence feature.

Message bus: Lets agents communicate with each other more efficiently inside the platform.

Enhanced metrics/logs: A production-grade metrics and logs dashboard (similar to Datadog).

Team workspace: A workspace where you can see what others are working on and manage all agents in one place.

Flexible database: Users can connect any external/internal databases as they wish per agent.

White-labeling: Use your own domain for agent endpoints. For example, https://{yourDomain}/agents/{age....

-----------

Agentainer-Lab is a developer-first runtime designed to make agent deployment seamless—not just for devs, but for coding agents themselves. We’ve removed 99% of the ops work required to ship long-lived AI workloads.

GitHub: Agentainer-Lab (GitHub)

Website (Signup for early access): Agentainer.io

(Users who sign up for early access will get to try the production-grade service for free.)
