The gap between local AI tools and what professionals actually need

If you have ever set up Ollama or LM Studio, you know local AI works. The models are good. The inference is fast. For developers, it is great.

But hand that setup to a lawyer at a 200-person firm and ask them to analyze a contract. Or to a hospital compliance officer who needs to research HIPAA enforcement trends. It falls apart, not because the AI is bad, but because the surrounding infrastructure does not exist.

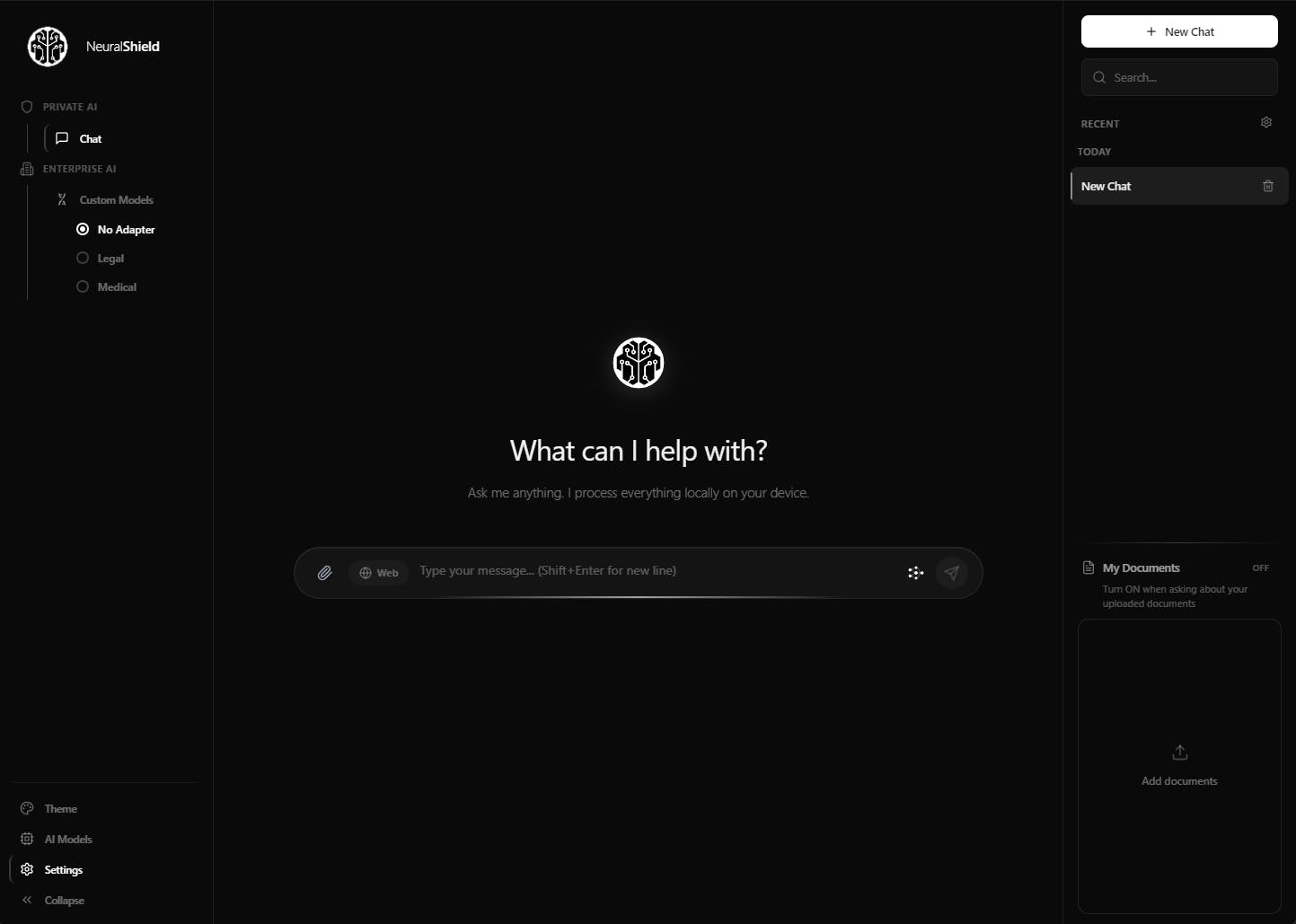

That is the gap we built NeuralShield to close:

A lawyer uploads a 200-page commercial lease. NeuralShield chunks it at clause boundaries, runs hybrid retrieval, and returns an answer citing page 47, section 12.3. Not "here is what I think," but "here is exactly where it says it."
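To make "chunking at clause boundaries" concrete, here is a minimal sketch of the idea, not NeuralShield's actual pipeline. It assumes clauses begin with a numbered heading such as `12.3 Assignment` at the start of a line, and that the document arrives as one string per page. The names `Chunk`, `CLAUSE_HEADING`, and `chunk_by_clause` are hypothetical. Each chunk keeps its section number and starting page, which is what makes a "page 47, section 12.3" citation possible later.

```python
import re
from dataclasses import dataclass

# Assumed heading format: a dotted number at the start of a line, e.g. "12.3 Assignment".
CLAUSE_HEADING = re.compile(r"^(\d+(?:\.\d+)*)\s+\S", re.MULTILINE)


@dataclass
class Chunk:
    section: str  # clause number taken from the heading, e.g. "12.3"
    page: int     # 1-based page on which the clause starts
    text: str     # full clause text, including the heading line


def chunk_by_clause(pages: list[str]) -> list[Chunk]:
    """Split page texts into one chunk per numbered clause."""
    chunks: list[Chunk] = []
    for page_no, page_text in enumerate(pages, start=1):
        matches = list(CLAUSE_HEADING.finditer(page_text))
        # Text before the first heading on a page continues the previous clause.
        # (Text before the very first clause of the document is dropped in this sketch.)
        lead = page_text[: matches[0].start()] if matches else page_text
        if lead.strip() and chunks:
            chunks[-1].text += "\n" + lead.strip()
        for i, m in enumerate(matches):
            end = matches[i + 1].start() if i + 1 < len(matches) else len(page_text)
            chunks.append(Chunk(m.group(1), page_no, page_text[m.start():end].strip()))
    return chunks


pages = [
    "12.2 Rent\nTenant pays rent monthly.\n12.3 Assignment\nTenant may not assign",
    "without consent.\n13.1 Notices\nNotices must be written.",
]
for chunk in chunk_by_clause(pages):
    print(chunk.section, "page", chunk.page)
```

A retriever can then index `text` and return `section` and `page` alongside each hit, so the answer cites a location rather than paraphrasing from memory.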

A compliance officer needs web research. NeuralShield strips PII from the query, shows them every search term before anything leaves the device, runs parallel searches, and returns cited sources with domain authority scoring. No patient names, case identifiers, or client details ever hit an external server.
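As an illustration of the "strip PII, then show the query before it leaves the device" step, the sketch below redacts a few structured identifiers with regular expressions. It is an assumption-laden example rather than NeuralShield's privacy layer: the patterns and the `redact` function are hypothetical, and regexes alone will not catch patient or client names, which typically need a named-entity model.

```python
import re

# Hypothetical patterns for structured identifiers only (US-style formats assumed).
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(query: str) -> tuple[str, list[str]]:
    """Replace each PII match with a [LABEL] placeholder and report what was found."""
    found: list[str] = []
    for label, pattern in PII_PATTERNS.items():
        def _sub(match: re.Match, label: str = label) -> str:
            found.append(label)
            return f"[{label}]"
        query = pattern.sub(_sub, query)
    return query, found


raw = "HIPAA fines for breach reported by jane.doe@example.org, SSN 123-45-6789"
safe_query, labels = redact(raw)
# The redacted query is what the user would review before any search is sent.
print(safe_query)
print(labels)
```

Returning the list of labels alongside the redacted text lets the interface tell the user what was removed, not just show the final query.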

A new user downloads the app. NeuralShield detects their hardware, scores every available model against their actual VRAM and CPU, recommends the best fit, and downloads it in one click. No terminal. No config files. No guessing.
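One way to picture the hardware scoring is a simple memory-fit check. The sketch below is a rough heuristic under stated assumptions, not NeuralShield's scoring logic: it estimates memory as parameters times bits per weight, multiplied by an assumed 1.2 overhead factor for the KV cache and runtime buffers, and it recommends the largest model that fits in VRAM. The model names and the `Model`, `est_memory_gb`, and `recommend` identifiers are hypothetical, and CPU scoring is left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Model:
    name: str
    params_b: float  # parameter count in billions
    bits: int        # quantization width in bits per weight


def est_memory_gb(model: Model, overhead: float = 1.2) -> float:
    """Estimate memory in GB: weight bytes times an assumed overhead factor."""
    return model.params_b * model.bits / 8 * overhead


def recommend(models: list[Model], vram_gb: float) -> Optional[Model]:
    """Return the largest model whose estimated memory fits in VRAM, or None."""
    fitting = [m for m in models if est_memory_gb(m) <= vram_gb]
    return max(fitting, key=lambda m: m.params_b, default=None)


catalog = [
    Model("example-7b-q4", 7, 4),    # hypothetical entries
    Model("example-13b-q4", 13, 4),
    Model("example-70b-q4", 70, 4),
]
best = recommend(catalog, vram_gb=8)
print(best.name if best else "no model fits")
```

"Largest model that fits" is only one possible definition of best fit; a fuller scorer would also weigh expected tokens per second and how much memory to leave free for long documents.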

The core AI still runs locally, with the same models and the same weights. What is different is everything around it: the document pipeline, the privacy layer, the hardware intelligence, the citations, and the UX that makes it usable for someone who has never opened a terminal.

We built this for the professionals whose firms banned ChatGPT but who still need AI to do their jobs.
