Launched this week

Agentation

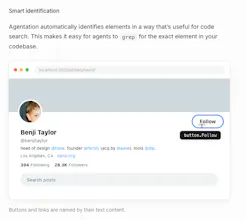

The visual feedback tool for AI agents

607 followers

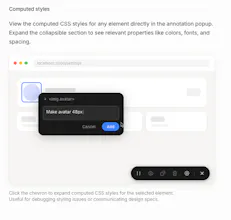

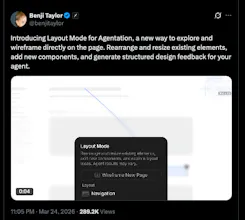

Agentation turns UI annotations into structured context that AI coding agents can understand and act on. Click any element, add a note, and paste the output into Claude Code, Codex, or any AI tool.

Congrats on the launch!

How does it handle errors or failed tasks?

This is exactly what we've been missing. We use Claude Code daily, and the biggest friction is always translating "fix that button" into precise technical context. Being able to click and annotate the actual UI elements, then paste structured output, sounds like a game changer. How detailed does the context get: does it capture CSS selectors, component hierarchies, that level of detail?

MacQuit

This solves a very real problem. When I'm working with Claude Code on UI tasks, the hardest part is describing which element I want changed and how. I usually end up writing long descriptions like "the button in the top-right corner of the second card" and hoping the agent understands. Having structured annotations with actual selectors and component hierarchy would make that so much more precise.

The React component detection is especially useful. Being able to point at a UI element and immediately see which component renders it saves a ton of time compared to manually tracing through the source code.

Quick question: does the MCP integration work with Claude Code specifically, or is it more of a general MCP server that any compatible client can connect to?

This solves a real friction point. Right now when I use Claude Code or Codex, I spend a lot of time writing context about which element I mean - "the button in the top-right of the filter panel" etc. Having structured annotations that feed directly into the agent as context is much cleaner. How does it handle dynamic elements that change state? Like a button that’s disabled until a form is valid?

Biteme: Calorie Calculator

@mykola_kondratiuk

yeah curious to see how they handle that edge case - seems like the kind of thing that makes or breaks the actual agent workflow

Thanks for launching, Agentation team! When we annotate a component and feed it to Claude Code, can we keep a history of annotations so the agent knows when the DOM changed? I'd love to see how you handle selector drift.

The audience is clear right away. I would test one version that pushes the outcome harder than:

"The visual feedback tool for AI agents"

Maybe:

"See where your AI agent breaks, fix it faster, and stop debugging blind."

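A Chinese version could read (translated):

"See exactly where the AI agent goes wrong and fix it faster, instead of continuing to debug blind."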