Launching today

Arlopass

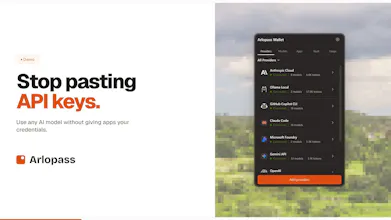

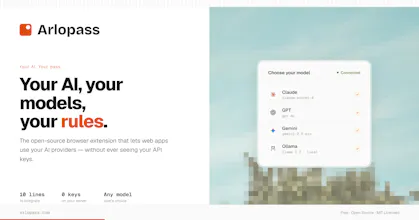

AI wallet that lets web apps use your models, not your keys

51 followers

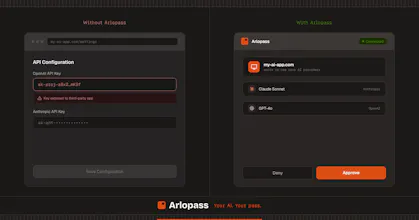

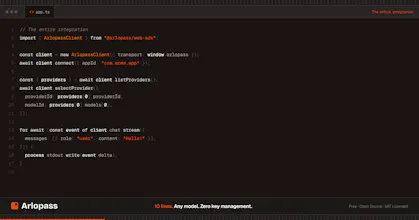

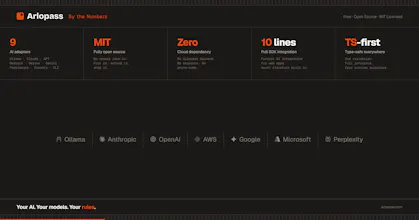

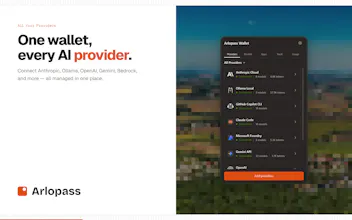

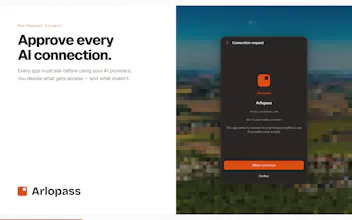

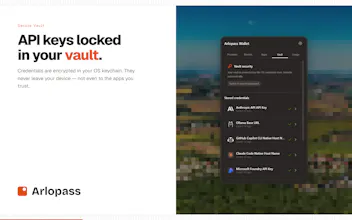

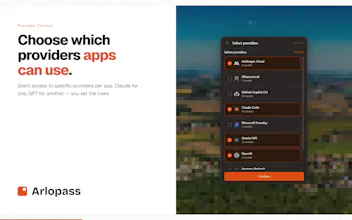

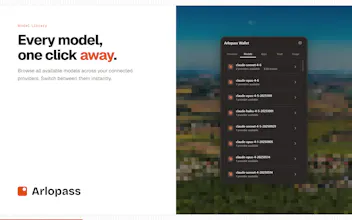

Arlopass is an open-source browser extension and developer SDK that lets any web app use your AI providers — Ollama, Claude, GPT, Bedrock — without ever touching your API keys. You approve each request. You pick the model. Your credentials never leave your device.
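To make the approval flow described above concrete, here is a minimal sketch of the general "wallet" pattern: the app submits a request, the user approves it and picks the provider and model, and the credential is only ever read inside the wallet. Every name here (`Wallet`, `AppRequest`, `handle`, the example origins and the `sk-demo` secret) is hypothetical and chosen for illustration. This is not the Arlopass SDK's actual API.

```typescript
// Hypothetical sketch of the wallet pattern; names are NOT from the Arlopass SDK.
type Provider = "ollama" | "claude" | "gpt" | "bedrock";

interface AppRequest {
  origin: string; // the web app asking for a completion
  prompt: string;
}

interface Approval {
  approved: boolean;
  provider?: Provider; // chosen by the user, not by the app
  model?: string;
}

interface WalletResult {
  ok: boolean;
  text?: string;
  reason?: string;
}

type ApprovalPrompt = (req: AppRequest) => Approval;
type ProviderCall = (model: string, prompt: string, credential: string) => string;

class Wallet {
  // Credentials stay inside the wallet; they are never part of a result.
  private credentials: Partial<Record<Provider, string>> = {};
  private askUser: ApprovalPrompt;
  private callProvider: ProviderCall;

  constructor(askUser: ApprovalPrompt, callProvider: ProviderCall) {
    this.askUser = askUser;
    this.callProvider = callProvider;
  }

  addCredential(provider: Provider, secret: string): void {
    this.credentials[provider] = secret;
  }

  handle(req: AppRequest): WalletResult {
    // Every request goes through the user before any provider is called.
    const decision = this.askUser(req);
    if (!decision.approved || !decision.provider || !decision.model) {
      return { ok: false, reason: "denied by user" };
    }
    const credential = this.credentials[decision.provider];
    if (credential === undefined) {
      return { ok: false, reason: "no credential for provider" };
    }
    // Only the generated text is returned to the app.
    return { ok: true, text: this.callProvider(decision.model, req.prompt, credential) };
  }
}

// Usage with a stubbed approval prompt and a stubbed provider call.
const wallet = new Wallet(
  (req) => ({
    approved: req.origin === "https://notes.example",
    provider: "claude",
    model: "example-model",
  }),
  (model, prompt, _credential) => `[${model}] ${prompt}`,
);
wallet.addCredential("claude", "sk-demo");

const allowed = wallet.handle({ origin: "https://notes.example", prompt: "Summarize" });
const denied = wallet.handle({ origin: "https://other.example", prompt: "Summarize" });
```

In this sketch the app only ever sees a `WalletResult`, so the secret cannot leak through the return value. A real extension would also need to isolate the wallet from page scripts, which is out of scope here.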

Arlopass

I use a bunch of different AI-powered web tools and every single one wants my API key, which means I'm trusting random apps with my credentials and have zero control over which model they actually use. Having a browser extension that acts as a wallet where I approve each request and pick the model myself makes a lot more sense from a security standpoint. Does it work with local Ollama models too, or mainly cloud providers?

Arlopass

@ben_gend It works with Ollama models too, and it also works as a bridge to CLI providers like Claude Code and GitHub Copilot CLI. Admittedly, CLI requests are a bit slower than the cloud and Ollama ones because of CLI startup times, but they work.

Interesting concept. How do you see people using this most in practice?

Arlopass

@cosmin1907 Great question! Right now I see it mostly used in free/open source web apps that want to add some nice-to-have AI features without worrying about implementation and costs. This gives them a simple and safe way to do that — people who want those features just install the extension and start using them without paying for another service or saving their credentials into yet another app.

If it gains more traction I could also see it as part of paid products that want a privacy-first approach to AI, or that want to offer some AI features to free tier users without paying for tokens themselves.

And once the policy system is fully implemented there's a company use case too — a customized extension with company-wide policies that enforce specific providers, models and usage limits, so teams can build AI features with the SDK instead of having every app manage its own providers and pay for AI usage separately.
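As a rough illustration of the company-policy idea, a check that enforces providers, models and usage limits might look like the sketch below. The `Policy` shape, the `checkPolicy` function and the example values are assumptions made up for this illustration. The actual policy system is not fully implemented yet, so its real format may differ.

```typescript
// Hypothetical policy shape; field names are illustrative assumptions.
interface Policy {
  allowedProviders: string[];
  allowedModels: string[];
  maxRequestsPerDay: number;
}

interface ModelRequest {
  provider: string;
  model: string;
}

interface PolicyDecision {
  allowed: boolean;
  reason?: string;
}

// Returns whether a request is permitted, given how many requests
// the user has already made today.
function checkPolicy(
  policy: Policy,
  req: ModelRequest,
  requestsToday: number,
): PolicyDecision {
  if (policy.allowedProviders.indexOf(req.provider) === -1) {
    return { allowed: false, reason: "provider not allowed" };
  }
  if (policy.allowedModels.indexOf(req.model) === -1) {
    return { allowed: false, reason: "model not allowed" };
  }
  if (requestsToday >= policy.maxRequestsPerDay) {
    return { allowed: false, reason: "daily limit reached" };
  }
  return { allowed: true };
}

// Example: a company that only permits a local model, twice a day.
const companyPolicy: Policy = {
  allowedProviders: ["ollama"],
  allowedModels: ["llama3"],
  maxRequestsPerDay: 2,
};

const okDecision = checkPolicy(companyPolicy, { provider: "ollama", model: "llama3" }, 0);
const wrongProvider = checkPolicy(companyPolicy, { provider: "gpt", model: "llama3" }, 0);
const overLimit = checkPolicy(companyPolicy, { provider: "ollama", model: "llama3" }, 2);
```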

This is a massive relief. I’ve spent way too many hours building custom backend proxies just to keep my keys safe, or worse—wasting time manually swapping .env files every time I want to test a new model. It’s such a repetitive time sink that usually kills the momentum of a project.

The local bridge approach is definitely the right architecture. Keeping the keys in the OS keychain instead of the browser's local storage is a huge win for peace of mind. Truly feels like the 'missing link' for privacy-first AI apps. Congrats on the launch!

Arlopass

@lukas_egli I know the pain; the idea for the project came out of similar problems. I was working on multiple projects that would have benefited a lot from some AI features, but building out a whole API key management system and making sure I didn't leak users' credentials was painful enough that I skipped those features. Arlopass makes this way simpler: I implement the nice-to-have AI features in a few lines, and anyone who wants to use them, with any model or provider, can just install Arlopass and do so.

This is so helpful. I had to pay for both the OpenAI API and ChatGPT, so this should help cut down the cost! Thanks, man!