Bench for Claude Code

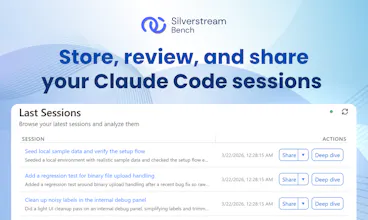

Store, review, and share your Claude Code sessions

717 followers

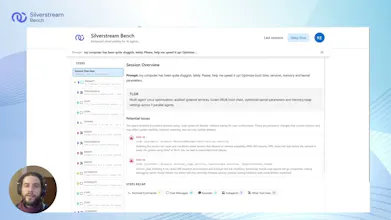

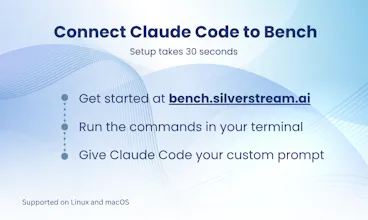

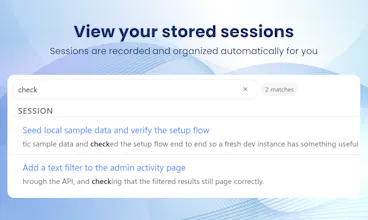

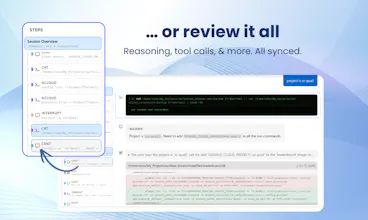

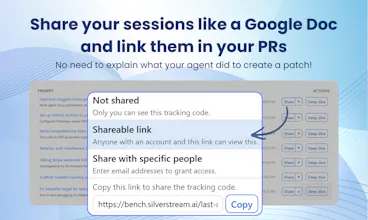

Claude Code just opened a PR. But do you really know what it did? By using Bench you can automatically store every session and easily find out what happened. Spot issues at a glance, dig into every tool call and file change, and share the full context with others through a single link: no further context needed. When things go right, embed the history in your PRs. When things go wrong, send the link to a colleague to ask for help. Free, no limits. One prompt to set up on Mac and Linux.

Bench for Claude Code

Hey Product Hunt! I'm Omar, Founding Researcher at Silverstream AI.

We originally built Bench as an internal tool to make debugging our own agents less painful, and it's become something I reach for every day.

My favorite part? The high-level run overview. When an agent run has hundreds of steps, being able to scan the whole thing at a glance and immediately spot where something went wrong is a huge time-saver. From there, I can zoom in all the way down to the model's reasoning traces at the exact step where things broke, which makes a real difference when you're trying to understand why an agent made a certain decision, not just what it did.

As we kept adding features, we realized Bench had become too useful to keep to ourselves, so here we are! 🚀

We're starting with Claude Code, but support for more agents is on the way. Give it a try and let us know what you think!

Nice.

Most people don’t need logs.

They need to understand why the agent made a bad decision and how to prevent it next time.

Bench for Claude Code

@ion_simion_bajinaru Exactly. Most people do not like logs; they use them only because they need to understand what the agent did, why it went wrong, and what to change so it does better next time. Bench is meant to make that whole process easier on your brain.

Bench for Claude Code

Hi everyone! 👋 I’m Giulio, co-founder and COO at Silverstream AI.

It feels like we’re all trying to buy back time these days. There’s always more to do, and never enough hours. That’s why I really think tools like Bench for Claude Code matter.

Agents are getting better fast, which means longer and more complex sessions. Hopefully more reliable too. But even as trust increases, I don’t think we’ll ever fully give up control. We’ll always want the option to see what they’re doing, as long as it doesn’t slow us down.

That’s exactly what we’re building Bench for.

If you try it out, I’d really appreciate your feedback. It’ll help us shape our product in the right direction.

Free with no limits is great, but I'm curious about the longer-term model here. Storing full session histories with every tool call and file diff is not cheap at any meaningful scale, especially for teams running Claude Code across multiple engineers all day. Is the plan to eventually charge for storage, or is this more of a funnel into a paid product you're building alongside this?

This is useful. I use Claude through Cursor daily and half the time I wish I could go back and review what it actually changed across a session. Being able to store and review sessions would save a lot of second-guessing.

I run fairly long Claude Code sessions for refactoring tasks and by the end I genuinely don't know which of the 40 file changes were necessary and which were Claude doing something unexpected in the background. Having a shareable link I can drop into a PR description with the full session context would also save a ton of back-and-forth with teammates. Kudos on the launch!

docWind

This fills a real gap. I've had Claude Code sessions where something clearly went wrong but I couldn't pinpoint where because the terminal output wasn't granular enough. Having an audit trail of every tool call and file change, plus a shareable link for the whole session, is exactly what I'd need to debug and hand off context to a teammate.