Cencurity

Security gateway for LLM agents

65 followers

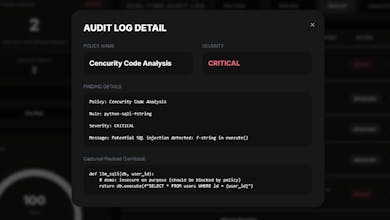

Cencurity is a security gateway that proxies LLM and agent traffic. It detects, masks, or blocks sensitive data and risky code patterns in both requests and responses, while recording everything in audit logs.
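To make the detect / mask / block idea concrete, here is a minimal Python sketch of a single inspection pass. It is not Cencurity's implementation. The rule names, the regexes, and the `Action` values are hypothetical and were chosen only to show how one pass can mask some matches, block on others, and collect the match list that an audit log would record.

```python
import re
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    # Hypothetical policy actions; names are illustrative only.
    MASK = "mask"
    BLOCK = "block"


@dataclass
class Rule:
    name: str
    pattern: re.Pattern
    action: Action


@dataclass
class Verdict:
    blocked: bool
    text: str
    matches: list = field(default_factory=list)  # (rule name, action) pairs for the audit log


# Example rules (assumptions, not Cencurity's shipped policy set).
RULES = [
    Rule("email", re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"), Action.MASK),
    Rule("aws_access_key", re.compile(r"\bAKIA[0-9A-Z]{16}\b"), Action.BLOCK),
]


def inspect(text: str, rules=RULES) -> Verdict:
    """Apply every rule: MASK matches are replaced in the text, and any
    BLOCK match marks the whole message as blocked."""
    matches = []
    blocked = False
    for rule in rules:
        if not rule.pattern.search(text):
            continue
        matches.append((rule.name, rule.action.value))
        if rule.action is Action.BLOCK:
            blocked = True
        else:
            text = rule.pattern.sub(f"[{rule.name.upper()}]", text)
    return Verdict(blocked=blocked, text=text, matches=matches)
```

For example, `inspect("contact bob@example.com")` returns the text `contact [EMAIL]` with `blocked=False`, so a gateway built this way would forward the masked text instead of the original.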

Cencurity

@vlad1323 Congratulations, Vlad! Can you describe how Cencurity functions as a security gateway for LLM agents? What are its key components?

Cencurity

@vlad1323 How does this differ from prompting or training an LLM to check for security issues, and how do you resolve potential indirect issues? Also, how is it different from other code review tools?

Cencurity

@aman_kaushik18 Great question.

This isn’t about prompting an LLM to review code or training a security-aware model. Cencurity operates at the infrastructure layer: it sits as a proxy between the IDE or agent and the upstream LLM provider.

That means enforcement happens at runtime, not as an optional review step. If a risky tool call, sensitive pattern, or policy violation is detected, the request can be blocked or redacted before it ever reaches the model or gets executed.

Regarding indirect issues: we focus on policy-based enforcement and structured extraction (e.g., tool arguments, fenced code blocks) rather than relying purely on semantic “LLM judgment.” The goal is deterministic guardrails at the gateway layer.
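The structured-extraction approach can be sketched in Python. This is an illustration under assumptions, not Cencurity's code: the fence-matching regex, the rule names, and the `RISKY_PATTERNS` deny-list are hypothetical. What it shows is that the rules run only over the extracted code blocks, so the same input always produces the same verdict, with no model judgment in the loop.

```python
import re

FENCE = "`" * 3  # built this way so the sketch itself contains no literal fence

# Matches an opening fence with an optional language tag, the body, and the closing fence.
FENCED_BLOCK = re.compile(FENCE + r"[ \t]*([\w+-]*)[^\n]*\n(.*?)" + FENCE, re.DOTALL)

# Hypothetical deny-list of risky code patterns; a real policy would be configurable.
RISKY_PATTERNS = {
    "recursive_delete": re.compile(r"\brm\s+-rf\s+/"),
    "pipe_to_shell": re.compile(r"\b(curl|wget)\b[^\n|]*\|\s*(ba)?sh\b"),
    "python_eval": re.compile(r"\beval\s*\("),
}


def extract_code_blocks(text: str) -> list[tuple[str, str]]:
    """Return (language, body) for every fenced code block in a model message."""
    return [(m.group(1), m.group(2)) for m in FENCED_BLOCK.finditer(text)]


def scan(text: str) -> list[str]:
    """List the rule names that fire, checking fenced code blocks only."""
    hits = []
    for _lang, body in extract_code_blocks(text):
        for name, pattern in RISKY_PATTERNS.items():
            if pattern.search(body) and name not in hits:
                hits.append(name)
    return hits
```

Because prose outside the fences is ignored, a sentence such as "never type rm -rf / yourself" does not trigger the rule, while the same command inside a shell block would.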

Compared to code review tools, this isn’t static analysis of a repository after the fact — it’s real-time control and auditing of agent/LLM traffic in production environments.

It’s less about reviewing code quality and more about controlling what AI systems are allowed to do.

Hi @vlad1323 @Cencurity, I need help with onboarding.

Cencurity

@vikrant_se64615 Hi Vikrant! Thanks for checking out Cencurity.

You can get started quickly with the Quick Start in the GitHub repo:

1. Clone the repository (github.com/cencurity/cencurity).
2. Change into the project directory: cd cencurity
3. Build and run with Docker.

That will start the proxy and policy engine locally.

If you run into any issues during setup, let me know and I’ll help you get it running.

You can get started here:

github.com/cencurity/cencurity

Cencurity

A short technical note for interested builders:

Cencurity runs as a proxy in front of OpenAI-compatible APIs and inspects both inbound tool calls and outbound responses in real time.
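For readers wondering what "inspecting tool calls" means on an OpenAI-compatible API, here is a hedged Python sketch. It relies on one fact about the OpenAI chat completion format: each `tool_calls[].function.arguments` field is a JSON-encoded string, so a gateway has to parse it before applying rules. The tool names, the deny-lists, and the `should_block` helper are hypothetical and do not describe Cencurity's actual policy engine.

```python
import json
import re

# Hypothetical policy: tools that are never allowed, and argument patterns that block a call.
DENIED_TOOLS = {"delete_repository"}
DENIED_ARGUMENT_PATTERNS = [re.compile(r"\brm\s+-rf\b"), re.compile(r"\.env\b")]


def tool_calls(completion: dict) -> list[tuple[str, dict]]:
    """Pull (function name, parsed arguments) out of an OpenAI-style chat completion."""
    calls = []
    for choice in completion.get("choices", []):
        for call in choice.get("message", {}).get("tool_calls") or []:
            fn = call.get("function", {})
            # In the OpenAI format, `arguments` is a JSON-encoded string, not an object.
            try:
                args = json.loads(fn.get("arguments") or "{}")
            except json.JSONDecodeError:
                args = {"_unparsed": fn.get("arguments")}
            calls.append((fn.get("name", ""), args))
    return calls


def should_block(completion: dict) -> bool:
    """True if any tool call in the response violates the (hypothetical) policy."""
    for name, args in tool_calls(completion):
        if name in DENIED_TOOLS:
            return True
        flat = json.dumps(args)
        if any(p.search(flat) for p in DENIED_ARGUMENT_PATTERNS):
            return True
    return False
```

A proxy built along these lines would run `should_block` on the upstream response before returning it to the agent, so a blocked tool call never reaches the code that would execute it.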

It can block dangerous code patterns before they are executed, and it keeps a full audit log of every policy match.
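One way to picture "an audit log entry for every policy match" is an append-only JSON Lines file. The Python sketch below is an assumption throughout: the field names are hypothetical, and storing a SHA-256 hash of the matched text instead of the raw value is one possible design choice (it keeps the log from leaking the secret it flagged), not a statement about what Cencurity records.

```python
import hashlib
import io
import json
from datetime import datetime, timezone


def audit_record(request_id: str, direction: str, rule: str, action: str, snippet: str) -> dict:
    """Build one audit entry per policy match (field names are hypothetical)."""
    return {
        "ts": datetime.now(timezone.utc).isoformat(),
        "request_id": request_id,
        "direction": direction,  # e.g. "request" (to the model) or "response" (from it)
        "rule": rule,
        "action": action,        # e.g. "mask" or "block"
        # Store a hash, not the raw match, so the log itself does not leak the secret.
        "match_sha256": hashlib.sha256(snippet.encode("utf-8")).hexdigest(),
    }


def append_jsonl(stream, record: dict) -> None:
    """Append one record as a single JSON line (JSON Lines format)."""
    stream.write(json.dumps(record, sort_keys=True) + "\n")


if __name__ == "__main__":
    buf = io.StringIO()  # stands in for an append-mode log file
    append_jsonl(buf, audit_record("req-1", "request", "email", "mask", "bob@example.com"))
    print(buf.getvalue(), end="")
```

One line per match keeps the log easy to grep or ship to a log pipeline, and the hash still lets an auditor confirm whether a specific known value was seen.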

Everything runs locally via Docker, so teams can self-host without sending data to external services.

Happy to answer any technical questions.