ClawSecure

A complete security platform for OpenClaw AI agents

631 followers

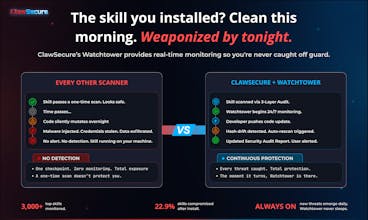

ClawSecure is CrowdStrike for OpenClaw AI agents. 3-layer security audit, real-time Watchtower monitoring, agent marketplace and identity security, and full 10/10 OWASP ASI coverage. 41% of top skills are dangerous. 1 in 5 are sending your data to attackers. Secure your agents in 30 seconds for free. clawsecure.ai

ClawSecure

Hey Product Hunt! 👋 I'm J.D., founder of ClawSecure.

Your AI agents are running third-party skills with full system access, no verification, no permissions, no oversight. 41% of top OpenClaw skills are dangerous. 1 in 5 are quietly sending your data to attackers. 22.9% changed their code after install.

After a decade building and securing AI and Web3 platforms at scale (2x exited founder, JP Morgan, Galaxy Digital, Bloomberg, NYSE), I've watched billions disappear when ecosystems scale faster than their security. It's happening again.

We built what the ecosystem was missing. ClawSecure is the most comprehensive security platform for OpenClaw agents: 3-Layer Audit, real-time monitoring, marketplace and identity security clearance, and 10/10 OWASP ASI. Free. No signup. 30 seconds.

We're excited to bring this to the PH community 🚀

Ask us anything, challenge us, or share what security concerns you are having with agents — we'll be here all day to chat!

Vozo AI — Video localization

@jdsalbego Congrats on the launch! Security for agent ecosystems is of course a core problem as OpenClaw grows.

Curious how ClawSecure actually protects the agent runtime though. Is the protection mainly static analysis of skills, or are you monitoring agent behavior during execution as well?

A lot of attacks in agent systems happen at runtime (prompt injection, tool misuse, unexpected shell commands). How do you handle that layer?

ClawSecure

@lightfield Thanks so much! Great question and exactly the right one to ask.

Here's the thing: in the OpenClaw ecosystem, the code IS the attack. Skills ship with full system access by default. There's no sandbox, no permissions model, no verification layer. When a skill contains C2 callback beaconing, credential exfiltration endpoints, or shell execution patterns, that's not a runtime anomaly. That's the code doing exactly what it was written to do.

That's why we built ClawSecure to secure the source, not chase symptoms at execution. Our 3-Layer Audit catches prompt injection in SOUL.md instruction overrides, C2 callbacks to known malicious IPs, credential exfiltration through webhook.site and glot.io, tool misuse patterns, and supply chain vulnerabilities in the full dependency tree. Layer 1 alone (55+ OpenClaw-specific detection patterns) caught 40.6% of all findings across the ecosystem. Those threats are structurally invisible to generic static analysis tools, because those tools don't understand OpenClaw's skill architecture.

Then Watchtower handles what every other scanner ignores: what happens AFTER install. Skills mutate. Dependencies get hijacked. 22.9% of the ecosystem changed their code post-install. Watchtower detects hash drift in real time, triggers automatic rescans through the full 3-layer protocol, and updates the Security Audit Report. Continuous integrity verification across every skill we track.
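As a rough illustration of what hash drift detection means in practice, here is a minimal sketch. It is not ClawSecure's actual implementation; the function names `snapshot` and `detect_drift` are hypothetical, and it assumes a skill is simply a directory of files that can be hashed with SHA-256 at install time and again later.

```python
import hashlib
from pathlib import Path


def snapshot(skill_dir: Path) -> dict[str, str]:
    """Map each file's relative path to the SHA-256 digest of its contents."""
    hashes = {}
    for path in sorted(skill_dir.rglob("*")):
        if path.is_file():
            rel = path.relative_to(skill_dir).as_posix()
            hashes[rel] = hashlib.sha256(path.read_bytes()).hexdigest()
    return hashes


def detect_drift(baseline: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Compare two snapshots and report added, removed, and modified files."""
    shared = set(baseline) & set(current)
    return {
        "added": sorted(set(current) - set(baseline)),
        "removed": sorted(set(baseline) - set(current)),
        "modified": sorted(p for p in shared if baseline[p] != current[p]),
    }
```

Under these assumptions, any non-empty list in the result would be the signal that triggers a rescan.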

The Security Clearance API closes the last gap: marketplaces and platforms can programmatically verify any skill's clearance status before granting access to sensitive resources. Secure, Unverified, or Denied, in real time.
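For platform builders, a gate built on those three statuses might look something like the sketch below. The API's real endpoints and response format are not described in this thread, so `lookup` is a hypothetical stand-in for whatever call returns the status string, and treating Unverified the same as Denied is an assumed policy choice, not a documented one.

```python
from enum import Enum
from typing import Callable


class Clearance(Enum):
    SECURE = "Secure"
    UNVERIFIED = "Unverified"
    DENIED = "Denied"


def may_access_sensitive(skill_id: str, lookup: Callable[[str], str]) -> bool:
    """Allow sensitive access only when the skill's clearance is Secure.

    `lookup` is a hypothetical stand-in for a clearance API call.
    Unknown skills and unrecognized statuses fail closed.
    """
    try:
        status = Clearance(lookup(skill_id))
    except (ValueError, KeyError):
        return False
    return status is Clearance.SECURE
```

Failing closed on errors keeps a lookup outage or an unexpected status from silently granting access.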

Runtime behavioral monitoring solves a different problem, one that matters in sandboxed environments where code is constrained. In OpenClaw, the code isn't constrained. It runs with full access. The right approach is making sure the code is safe before it ever executes, and making sure it stays safe. That's what ClawSecure does.

@jdsalbego Congrats on the launch of ClawSecure, security for AI agents feels like a really important problem to tackle right now.

While exploring the page, I found myself wondering how first-time users usually discover the core value.

There are several interesting capabilities introduced early (scanning, monitoring, hardened deployments), and I’m curious which use case most people gravitate toward first.

Would love to hear how you’re thinking about that.

ClawSecure

@chi_78 Thanks for the kind words and great question!

The overwhelming majority of first-time users come in through the scanner. Paste a skill URL, hit scan, get a full Security Audit Report in 30 seconds. No signup, no friction. That instant "oh wow, I had no idea this skill was doing that" moment is where the core value clicks.

From there, we see two natural paths. Power users who are running agents in production gravitate toward Watchtower because once you realize 22.9% of skills change their code after install, you want continuous monitoring on everything you've already deployed. Platform builders and marketplace operators go straight to the Security Clearance API because they need programmatic verification at install time, not manual scans.

But the scanner is the front door for almost everyone. We designed it that way intentionally. The AI agent security problem is invisible until you actually see the data. Once someone scans a skill they've been running for months and sees it flagged with credential exfiltration patterns or shell execution, the rest of the platform sells itself.

If you have thoughts on how the page communicates that journey, I'd genuinely love to hear them. We're always iterating on how we surface the right capability at the right moment.

@jdsalbego Thanks for the detailed breakdown. The scanner-first approach makes a lot of sense. That “oh wow” moment when someone sees what a skill is actually doing sounds like a strong entry point.

One thing I found myself wondering while reading your explanation is the moment right after that first scan. If someone discovers something concerning in the report, they might immediately think “okay, what should I do next?”

I wonder if that moment could be an opportunity to guide users toward the path that fits them best, for example teams running agents in production toward Watchtower, and platform builders toward the Security Clearance API.

I’m curious if you’ve seen users naturally move into those paths yet, or if they tend to explore the product differently.

ClawSecure

@chi_78 Really sharp observation and you're touching on something we're actively thinking about.

You're right that the moment right after a concerning scan result is the highest-intent moment in the entire user journey. Someone just discovered a skill they've been running has credential exfiltration patterns. They're not casually browsing anymore. They want to know what to do.

Right now the report does guide toward next steps. Watchtower status is visible on every report, and skills scoring 80+ earn ClawSecure Verified status which connects to the broader ecosystem. But you're identifying an opportunity to make that post-scan routing much more intentional. Something like: "You're running this in production? Here's how Watchtower keeps watching it. You're building a marketplace? Here's how the Security Clearance API verifies skills at install time."

To answer your question directly: yes, we're seeing those two paths emerge organically, but the split is happening slower than it should because we haven't built that guided moment yet. Most users scan a few skills, explore the Registry, and then come back when they have a specific production need. Making that bridge explicit rather than hoping people find it is exactly the kind of UX improvement that compounds.

This is genuinely useful product feedback. Appreciate you thinking about it at that level.

@jdsalbego Many congratulations on the launch, J.D. :)

The first time you shared this product with me, I instantly pointed out that while tons of people are building OpenClaw AI agents, there's hardly any security solution for them. This is such a refreshing + critically important category to pioneer. I'm excited to see it hit the leaderboard today, and I endorse it.

ClawSecure

@rohanrecommends Thank you so much! You saw it early and you were right. That conversation stuck with me because it reinforced exactly what we were seeing in the data: massive ecosystem growth, zero security infrastructure. When 41% of the most popular skills have vulnerabilities and nobody is checking, that's not a gap. That's a crisis waiting to happen.

Really means a lot to have your endorsement on launch day! Appreciate you Rohan 🤝

@jdsalbego Really interesting framing.

The idea that “the code is the attack” feels like a fundamental shift compared to traditional security models.

In most systems, you’re trying to detect abnormal behavior at runtime. But in agent ecosystems like OpenClaw, the agent is effectively an unbounded execution environment with full system access.

That makes the problem less about monitoring behavior and more about controlling what is even allowed to exist and execute in the first place.

In that sense, this starts to look less like a security tool and more like a control layer for agent ecosystems.

Curious how you think about this evolving.

Do you see this becoming more like traditional application security over time, or a new category focused on governing autonomous execution environments?

The stat that 22.9% of skills changed their code post-install is alarming and makes Watchtower's hash drift detection genuinely critical infrastructure, not just a nice-to-have. Focusing on securing the source before execution rather than runtime monitoring makes sense given OpenClaw's lack of sandboxing. Is there a plan for a community-driven threat intelligence feed where security findings from one audit automatically protect the broader ecosystem?

ClawSecure

@svyat_dvoretski Really appreciate that framing, and you're exactly right. 22.9% post-install mutation is why we treat Watchtower as core infrastructure, not a feature. A one-time scan is a snapshot. The threat surface moves.

On the community threat intelligence feed: yes, this is actively on our roadmap and something we think about a lot. Right now, every scan already feeds back into the ecosystem in a meaningful way. When Watchtower detects hash drift on a skill and the rescan reveals a new threat pattern, that detection logic gets folded into our proprietary engine for every future scan. So findings from one audit are already protecting the broader ecosystem, just not through a public feed yet.

What we're building toward is exactly what you're describing: a structured threat intelligence layer where ClawHavoc indicators, new C2 endpoints, emerging prompt injection techniques, and supply chain compromise patterns are surfaced programmatically. The Security Clearance API is the foundation for this. Right now it returns real-time clearance status (Secure / Unverified / Denied) for any skill. The natural evolution is enriching that response with threat context: why a skill was flagged, which threat cluster it maps to, and whether similar patterns have been detected across related skills.

The challenge is doing this without giving malicious authors a playbook to evade detection. We're working through that tension carefully. Aggregate threat intelligence (trends, campaign-level indicators, category-level risk signals) can be shared broadly. Specific detection signatures cannot.

Would love to hear your thoughts on what format would be most useful. Are you thinking something closer to a CVE-style advisory feed, or more like a real-time API integration for platforms and marketplaces?

Documentation.AI

Great concept @jdsalbego. Any plans to open-source parts of the scanning engine?

ClawSecure

@roopreddy Thanks! We get this question a lot and it's one we've thought through carefully.

The short answer: we open-source the research, not the detection rules. Our public GitHub repo (github.com/ClawSecure/clawsecure-openclaw-security) has the full OWASP ASI mapping, findings methodology, and security documentation. The scanner itself is free with zero restrictions, no signup, no paywall, no rate limits.

But publishing the actual detection patterns (the 55+ OpenClaw-specific signatures in Layer 1) would give malicious skill authors a blueprint to craft evasions. That's the same reason CrowdStrike and Snyk keep their detection logic proprietary while making their tools widely accessible.

Where we are open: full Trust Center at clawsecure.ai/trust, vulnerability disclosure policy with safe harbor, NIST AI RMF alignment report, and our CSA STAR Registry listing. We believe in transparency of methodology and results. Just not transparency of exact detection signatures.

That said, we're exploring ways to give the community more visibility into how the engine works without compromising detection effectiveness. If you have thoughts on what would be most useful to see opened up, I'm all ears.

Grats on launching.

Is it possible to have false positives? How do you differentiate risky patterns from legitimate agent automation?

ClawSecure

@himani_sah1 Great question and one we obsessed over while building the engine. Short answer: yes, false positives are possible with any static analysis tool. It's how you handle them that matters.

This is exactly why we built context-aware intelligence into Layer 1. OpenClaw skills legitimately need to do things that look dangerous in other contexts. A skill calling subprocess to run a build command is normal automation. A skill executing arbitrary shell commands with user-controlled input is a threat. A skill accessing environment variables to configure an API connection is standard. A skill exfiltrating those credentials to webhook.site is malware.
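To make the subprocess distinction concrete, here is a toy heuristic written with Python's standard `ast` module. It is only an illustration of the idea, not ClawSecure's engine or one of its detection patterns: it flags `subprocess` calls that pass `shell=True` together with a command that is not a plain literal, and it ignores aliased imports and data flow entirely.

```python
import ast

# subprocess functions that can spawn a shell (illustrative, not exhaustive)
SHELL_FUNCS = {"run", "call", "check_call", "check_output", "Popen"}


def risky_subprocess_calls(source: str) -> list[int]:
    """Return line numbers of subprocess calls using shell=True with a non-literal command."""
    flagged = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        is_subprocess = (
            isinstance(func, ast.Attribute)
            and isinstance(func.value, ast.Name)
            and func.value.id == "subprocess"
            and func.attr in SHELL_FUNCS
        )
        if not is_subprocess or not node.args:
            continue
        uses_shell = any(
            kw.arg == "shell"
            and isinstance(kw.value, ast.Constant)
            and kw.value.value is True
            for kw in node.keywords
        )
        # A string built at runtime (concatenation, f-string, variable) is not a Constant node.
        command_is_literal = isinstance(node.args[0], ast.Constant)
        if uses_shell and not command_is_literal:
            flagged.append(node.lineno)
    return sorted(flagged)
```

A fixed build step such as `subprocess.run(["make", "build"], check=True)` passes this check, while `subprocess.run("echo " + user_input, shell=True)` is flagged.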

Our proprietary engine understands the difference because it was built specifically for OpenClaw's skill architecture, not adapted from a generic code scanner. It evaluates the full context: what file the pattern appears in, how data flows through the skill, whether external endpoints match known malicious infrastructure, and whether the behavior aligns with what the skill claims to do in its SOUL.md instructions.

That said, static analysis has inherent limits and we're transparent about that. If anyone encounters a false positive, we want to hear about it. You can report through our vulnerability disclosure policy at clawsecure.ai/vulnerability-disclosure or just drop it here. Every report makes the engine smarter.

Nas.io

Are there plans for alerts via Slack or Discord when Watchtower detects suspicious behavior?

ClawSecure

@nuseir_yassin1 Love this idea. Right now Watchtower alerts surface through updated Security Audit Reports on the platform and through the Security Clearance API, which any platform or marketplace can query programmatically for real-time status.

Slack and Discord integrations are on our roadmap. The infrastructure is already there since Watchtower generates the detection event the moment hash drift is caught. It's really a matter of building the notification layer on top. We're thinking Slack, Discord, email, and webhook options so teams can plug alerts into whatever workflow they already use.
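A notification layer of that kind could be sketched roughly as follows. This is an assumption-laden example, not a shipped integration: the function name `drift_alert_payloads` and the message wording are hypothetical. It relies only on the fact that Slack incoming webhooks accept a JSON body with a `text` field and Discord webhooks accept one with a `content` field, and it builds the payloads without sending anything.

```python
import json


def drift_alert_payloads(skill: str, old_hash: str, new_hash: str) -> dict[str, str]:
    """Build JSON bodies for Slack and Discord webhooks describing a hash drift event."""
    message = (
        f"Hash drift detected for {skill}: "
        f"{old_hash[:12]} -> {new_hash[:12]}. Rescan triggered."
    )
    return {
        "slack": json.dumps({"text": message}),       # Slack incoming webhook body
        "discord": json.dumps({"content": message}),  # Discord webhook body
    }
```

Each string would then be POSTed with a `Content-Type: application/json` header to the webhook URL a team configures.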

If that's something you'd use, I'd love to know the setup. Are you running agents in production where you'd want real-time push notifications, or more for monitoring skills you're evaluating?

Lancepilot

Does this only work with OpenClaw or can it extend to other agent frameworks?

ClawSecure

@priyankamandal Right now we're focused on OpenClaw because that's where the biggest security gap is: 180K+ users, 100K GitHub stars, a massive ecosystem, and until ClawSecure, zero dedicated security infrastructure.

But yes, the plan is to bring this to all major open-source agent frameworks. The core architecture was built for exactly that. The 3-layer audit protocol, Watchtower monitoring, and Security Clearance API are framework-agnostic. Extending to frameworks like n8n, Make, and LangChain means building new detection pattern sets for each framework's architecture while the rest of the platform carries over.

OpenClaw is where we start. Securing every open-source agent framework is where we're headed.

Which frameworks are you working with?

Lancepilot

This is honestly scary. 41% dangerous skills? Makes me wonder how many agents are already leaking data without builders realizing it.

ClawSecure

@istiakahmad That's exactly the right question to be asking. And the honest answer is: probably a lot more than anyone realizes.

The scariest stat isn't even the 41%. It's the 22.9% that changed their code after install. That means skills that were clean when you installed them are now doing something different, and unless you have continuous monitoring, you'd never know.

We built ClawSecure specifically so you don't have to wonder. Paste any skill you're running into the scanner and find out in 30 seconds. You might be surprised what's hiding in your stack.