ClawSecure

A complete security platform for OpenClaw AI agents

605 followers

A complete security platform for OpenClaw AI agents

605 followers

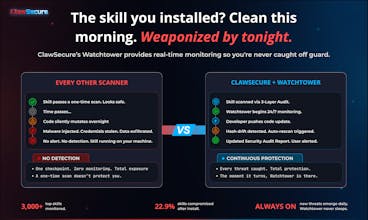

ClawSecure is CrowdStrike for OpenClaw AI agents. 3-layer security audit, real-time Watchtower monitoring, agent marketplace and identity security, and full 10/10 OWASP ASI coverage. 41% of top skills are dangerous. 1 in 5 are sending your data to attackers. Secure your agents in 30 seconds for free. clawsecure.ai

ZeroThreat.ai

Congrats on the launch!

Agent ecosystems are quickly becoming the next major attack surface, especially when third-party skills run with full system access and minimal verification.

At ZeroThreat.ai, we’re seeing a similar trend while testing AI-driven systems, attackers increasingly target agent logic, integrations, and runtime behavior, not just traditional vulnerabilities.

Curious to see how ClawSecure handles runtime security and continuous testing as the ecosystem grows. Security for autonomous agents is going to be a huge space.

Wishing you a successful launch on Product Hunt!

ClawSecure

@sarrah_pitaliya Thanks for the kind words! You're right that agent ecosystems are becoming a major attack surface, and it's good to see more people working on this problem from different angles.

On runtime security: our approach is intentionally source-first. In the OpenClaw ecosystem, skills ship with full system access, no sandbox, no permissions model. When a skill contains C2 callback beaconing or credential exfiltration endpoints, that's not a runtime anomaly. That's the code doing exactly what it was written to do. So we secure the source before execution, then Watchtower continuously monitors for code mutations after install. 22.9% of the ecosystem has already changed their code post-install, so that continuous layer is critical.

Continuous testing is already baked in. Every time Watchtower detects hash drift, an automatic rescan fires through the full 3-layer audit protocol and the Security Audit Report updates in real time. It's not periodic testing, it's integrity verification that never stops.

Appreciate the support and agreed, this space is going to be massive.

jared.so

This is addressing a massive blind spot in the AI agent ecosystem. The stat about 22.9% of skills changing their code after install is genuinely alarming. Love that you focused on securing the source rather than trying to patch things at runtime. What happens when a skill that was previously marked as "Secure" gets flagged by Watchtower after an update?

ClawSecure

@mcarmonas That's the exact scenario Watchtower was built for. Here's what happens:

Watchtower continuously monitors every tracked skill via SHA-256 hash comparison. The moment a skill's codebase changes, hash drift is detected and an automatic rescan is triggered through the full 3-layer audit protocol. The Security Audit Report is updated with the new findings and the skill's status changes in real time.

So a skill that was Secure at 9 AM could be flagged Concerning or Critical by noon if the developer pushed a malicious update. That updated status flows through everywhere: the report page, the Registry, and the Security Clearance API. Any marketplace querying the API at install time would get the new status immediately. Secure becomes Denied the moment the threat is confirmed.

This is why the 22.9% stat matters so much. Those aren't hypothetical risks. Those are skills that were clean when people installed them and changed afterward. Without continuous monitoring, you'd never know. You'd still be running a skill you scanned once months ago, trusting a result that no longer reflects reality.

A one-time scan is a snapshot. Watchtower makes it a living security layer.

Appreciate the thoughtful question and glad the source-first approach resonates!

Security for agents is going to matter a lot more as people start chaining skills together — having visibility + audits early feels like the right move.

ClawSecure

@allinonetools_net Exactly right. Skill chaining is where the risk compounds fast. One compromised skill in a chain doesn't just affect itself, it can cascade through every downstream agent that touches it. That's actually one of the 10 OWASP ASI categories we cover: Cascading Failures. When you chain five skills together and one of them mutates after install, you need visibility across the entire chain, not just the entry point. That's what Watchtower and the Security Clearance API are built for. Appreciate you seeing where this is headed.

Congrats on the launch! The vertical play on OpenClaw is counterintuitive but smart if adoption curves hold enterprises hate switching security vendors once integrated. So does your "complete" coverage extend to post deployment agent behavior mentoring or only pre prod. vulnerabilities?

ClawSecure

@ielrefaae Thanks, and you're seeing the strategy exactly right. Once you're the security layer integrated into an ecosystem's workflow, switching costs are real. That's by design.

To your question: both, and that's what makes the coverage complete.

Pre-deployment, the 3-layer audit catches vulnerabilities before a skill ever runs on your machine. Post-deployment is where Watchtower takes over. It continuously monitors every tracked skill for code changes via SHA-256 hash comparison. When a skill mutates after install (22.9% of the ecosystem already has), hash drift is detected, an automatic rescan fires through the full 3-layer protocol, and the Security Audit Report updates in real time. The Security Clearance API then surfaces that updated status to any marketplace or platform querying it, so a skill that was Secure at deployment can flip to Denied the moment a threat is confirmed.

So it's not just pre-prod vulnerabilities. It's continuous post-deployment integrity verification across the full lifecycle. The gap we saw in every other tool was exactly this: they scan once and walk away. We scan, then we watch. That's the difference between a checkpoint and infrastructure.

Sharp question. Appreciate you thinking about it at the integration and retention level.

Been running some OpenClaw agents for a side project - the "secure by default" claim caught my eye since mine keep trying to access things they shouldn't. Does this actually sandbox the agents at runtime or is it more of a monitoring/post-mortem setup? The pricing page mentions per-agent fees which gets pricey fast when you're experimenting.

ClawSecure

@lliora Great question and want to make sure I clear up a couple things.

ClawSecure isn't a runtime sandbox. We secure the source, not the execution environment. Our approach is: verify the skill before it ever runs on your machine, then continuously monitor it for changes after install. The 3-layer audit catches prompt injection, credential exfiltration, shell execution patterns, and supply chain vulnerabilities. Watchtower then watches for code mutations in real time. The thesis is that in OpenClaw, the code IS the attack, so making sure the code is safe before it executes is the right layer to solve this at.

For agents that keep trying to access things they shouldn't, scanning the skills you're running would tell you immediately whether that behavior is baked into the code or coming from somewhere else. That's a 30-second answer.

Also want to clarify: ClawSecure is completely free. No pricing page, no per-agent fees. Scan as many skills as you want, no signup, no paywall, no limits. You might be thinking of a different tool. Go experiment to your heart's content at clawsecure.ai.

ClawSecure

@manhdakhac Thank you! And you don't need to be technical to use ClawSecure. That was a core design decision. Paste any skill URL, hit scan, and the Security Audit Report breaks everything down in plain language with severity ratings so you can instantly see whether a skill is safe or not.

For learning more about OpenClaw security in general, our blog at clawsecure.ai/blog covers topics ranging from beginner-friendly overviews to deep technical dives. Articles like "Is OpenClaw Safe?" and our OWASP ASI explainers are great starting points if you're new to the space.

As for what's next: we're expanding skill coverage across the ecosystem, building out notification integrations for Watchtower alerts, and working toward supporting additional open-source agent frameworks beyond OpenClaw. Lots more coming.

Appreciate you being here on launch day, and don't hesitate to ask if you have questions as you explore!

FuseBase

Congrats @jdsalbego @fiatretired

Are you mapping agent behavior dynamically or just scanning the skill code?

ClawSecure

@kate_ramakaieva Thanks!

We scan the skill code across three independent layers and then continuously monitor it for changes. That's a deliberate architectural choice, not a gap.

In the OpenClaw ecosystem, the code IS the attack. Skills ship with full system access, no sandbox, no permissions model. When a skill contains C2 callback beaconing, credential exfiltration endpoints, or shell execution patterns, that's not a runtime anomaly. That's the code doing exactly what it was written to do.

So we secure the source rather than chase symptoms at execution. Layer 1 (55+ OpenClaw-specific patterns) catches threats that are structurally invisible to generic scanners because they don't understand the skill format. Layers 2 and 3 handle static/behavioral analysis and supply chain CVEs.

Where we go beyond static scanning is Watchtower. Skills mutate after install. 22.9% of the ecosystem already has. Watchtower detects hash drift in real time, triggers automatic rescans through the full 3-layer protocol, and updates the Security Audit Report. Continuous integrity verification, not just a one-time checkpoint.

The right question isn't "what is the agent doing right now?" It's "should this code be running at all?" That's what ClawSecure answers.