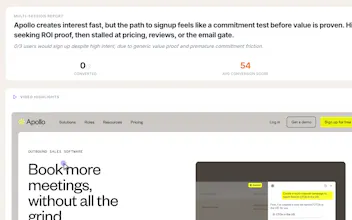

Convert or Not

Simulate first-time users. See why they drop off

119 followers

Convert or Not simulates first-time user sessions on your site. It clicks, scrolls, and attempts to complete key actions like signup, revealing where your target users hesitate, where they drop off, and why. Use it to understand and fix conversion gaps before real users are lost.

RaptorCI

Hey Junu, this is a great idea. I'm just launching and would love to know more about how users will use my landing page. I just signed up, but now I'm stuck on a blank screen. Any suggestions?

UserCall

@jordan_carroll2 Hi Jordan. Could you go back to the main page (convertornot.com) and let me know what you see?

RaptorCI

@junetic Just seems to be blank

@junetic @jordan_carroll2 I'm facing the same problem right now.

UserCall

@jordan_carroll2 @matheusdsantosr_dev OK, a fix has been applied. Thank you, Jordan, for calling that out. Please refresh the page, try launching the simulation again, and let me know if it doesn't work.

Congrats on the launch! 🎉

Honestly the timing of this is uncanny. I've literally spent today trying to figure out how to get real test users for my own product. Watching people drop off without knowing why is one of the most frustrating parts of being a solo founder. You build something, you ship it, and then... silence. Was it the headline? The pricing? The signup flow? You're guessing.

A simulated first-time session that tells you where people hesitate is genuinely useful, especially in that gap before you have enough real traffic to A/B test anything.

Quick question: how close does the simulation get to real human hesitation? Like does it catch the "I don't understand what this does in 5 seconds" moment, or is it more about UX friction (broken buttons, confusing flows)? Curious where it sits on that spectrum.

Either way, congrats

@maria_fitzpatrick Maria's question didn't really get answered and it's the one I'd most want to know. There's a big difference between "the button was broken" and "I didn't understand what this product does in 5 seconds." One is a fix, the other is a positioning problem. Does the simulation tell you which one you're dealing with?

UserCall

@maria_fitzpatrick @jared_salois It gives qualitative feedback on the first-time experience from the perspective of a target persona that you can edit. It interacts with the site and bases its feedback on what it sees and understands. So yes, it goes beyond ‘button is broken’ to ‘I don’t understand why I should click this button’ or ‘I don’t understand what this does and what I’m supposed to do next’.

Could be interesting to see or simulate how users will interact. How are you modeling a first-time user? Is it rule-based flows, LLM-driven reasoning, or something else?

UserCall

@lak7 No rule-based flows. It's LLM reasoning about the UX (what it sees, experiences, and does), with context about the persona (which you can specify based on who your target user is).

Would you ever work on a version for mobile apps? I would love to be able to simulate first-time user sessions on our mobile app on both iOS and Android and understand more about our users' behavior.

UserCall

@chris_u_han Do you use any mobile product analytics, e.g. Mixpanel, PostHog, etc.?

@junetic Not at the moment; it felt too early for us. We do some event tracking with Google Analytics, but nothing beyond that.

Interesting idea. I wish I had known about this at least a day earlier.

I tried the website out and it was straightforward except for a couple of things:

1. It wasn't very clear that I could run a single persona, and it took me quite a while to figure out how to run just one rather than all 3. I thought the Edit part would only edit the persona, not also run that persona on its own.

2. When I ran that single persona against my website, it went into the queue (which is fine). However, 45 minutes later it is still in the queue. Not sure if that is intended or not.

Does it notify me when it is done? I kept refreshing to see if it finished the analysis or not.

Nonetheless, congratulations on the release.

UserCall

@vallar Thanks for the feedback. You'll be notified when the report is ready.

Humans perceive visually, while a program works through code text. So a program can easily detect things a human cannot see. How do you handle that?

If you ever add mobile app support, that'd open up a huge use case. The gap between someone tapping 'Get' on the App Store and actually finishing onboarding is where most installs die, and there's no good way to simulate that flow right now. Desktop web is the right starting point but mobile onboarding is where the data gets really interesting.