Convo

Memory & observability for LLM apps

123 followers

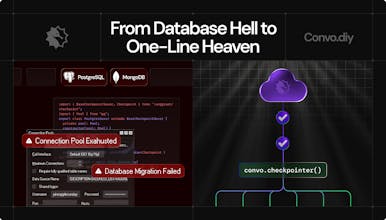

Convo is the fastest way to log, debug, and personalize AI conversations. Capture every message, extract long-term memory, and build smarter LLM agents with one drop-in SDK.

Linkinize

Giving LLM apps memory and observability is key to making them smarter and more reliable — Convo looks like a must-have for any AI dev stack. Congrats on the launch 🚀

Essays by Paul Graham GPT

@naderikladious Thank you so much! 🙏

Once you have memory and visibility into what your agents are doing, everything starts to click. Excited to see how folks use Convo in their stacks!

I found Convo to be a simple yet powerful tool for logging and personalizing AI conversations