Launching today

Doing

Voice and visual context for AI builders. No subscription.

36 followers

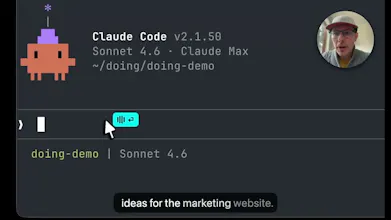

Doing is for AI builders who use voice and screenshots to bring context to Claude Code, Codex, and other AI agents. Tap a hotkey and Doing listens. Optimized over thousands of hours of building with Claude & Codex. Blazing fast, private, local, no account, no subs. Just a quality tool that you own and that works well.

Medium

👋 I'm Brian, and I created Doing to help me share the context that's in my head and on my screen with Claude Code, Codex, Gemini, and other AI agents. I've used the other tools and they are slow, expensive, and full of privacy nightmares. Doing is the opposite.

Doing is not a general-purpose voice transcription tool like the myriad other voice apps out there; it is purpose-built for pairing with your agent. It is simple, fast, and gets out of your way so you can get the ideas out of your head and into the context window.

A few things I'm proud of:

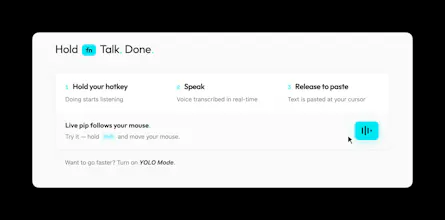

🌱 Simple, effective workflow. Hold a hotkey and Doing listens. The cyan pip follows your mouse, so you'll know where your words will land. (watch the vid above)

🔥 Fastest transcription. NVIDIA's Parakeet is 10-100x faster at transcribing than the alternatives, and it runs entirely on your Mac (Apple Silicon required). Because nothing goes over the network, there's no round-trip latency and no waiting. You really have to try the TDT (Token-and-Duration Transducer) approach to believe how incredible it truly is.

⚡ YOLO mode. Auto-submit your prompt after pasting. Talk, release, and your words and screenshots are already submitted. Avoid the self-editing anti-pattern and let the LLM do its thing.

🙅‍♂️ Hands-free mode. Tap shift to go hands-free and just talk. Tap it again to wrap it up.

📸 Screenshots. Drag a rectangle to grab visual context that's automatically added to your transcription.

📝 Markdown transcripts. Transcripts are saved locally as daily .md files. Integrates perfectly with your Obsidian knowledge base.

🔒 Truly local. Your audio never leaves your machine. Not a privacy policy, it's architecture.

📚 Nerd words. Doing is for AI builders and understands AI Engineering, Software Engineering, and Product & Biz dev terminology. You can add your own dictionaries, terms, and common corrections.

⚒️ Customize with skills. Post-process your transcriptions with LLM based skills, and tune based on target app. Follows the SKILLS.md standard.
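
For readers curious how the common-corrections and daily Markdown pieces above might fit together, here is a minimal Python sketch. It is not Doing's actual code: the corrections table, the function names, the whole-word matching rule, and the `YYYY-MM-DD.md` file naming are all assumptions made for illustration.

```python
import re
from datetime import date
from pathlib import Path

# Hypothetical corrections table: misheard phrase -> intended term.
CORRECTIONS = {
    "cloud code": "Claude Code",
    "code x": "Codex",
    "agents dot md": "AGENTS.md",
}


def apply_corrections(text: str, corrections: dict[str, str]) -> str:
    """Replace each misheard phrase (case-insensitive, whole words) with its correction."""
    for wrong, right in corrections.items():
        pattern = re.compile(r"\b" + re.escape(wrong) + r"\b", re.IGNORECASE)
        # A function replacement keeps the corrected term literal (no escape handling).
        text = pattern.sub(lambda _match: right, text)
    return text


def append_to_daily_note(text: str, folder: Path, day: date) -> Path:
    """Append one transcript entry to folder/YYYY-MM-DD.md, creating it if needed."""
    folder.mkdir(parents=True, exist_ok=True)
    note = folder / f"{day.isoformat()}.md"
    with note.open("a", encoding="utf-8") as handle:
        handle.write(f"- {text}\n")
    return note
```

A call such as `append_to_daily_note(apply_corrections(raw, CORRECTIONS), Path("transcripts"), date.today())` would clean up a raw transcript and add it to today's note. Pointing the folder at an Obsidian vault is one way a setup like this could line up with an existing knowledge base.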

Free trial, no account needed. If you like it, it's $49 and yours forever. I'll be here all day and would love to hear your questions and feedback!

bold claim on the YOLO auto-submit. that works fine for prose context but for technical specifics - variable names, file paths, error codes - voice introduces correction overhead that slows you down.

Medium

@mykola_kondratiuk i hear you. but if you're working with a frontier model, it can easily infer those specific technical details from your voice prompt by using its tools to grep/search/explore the code. figuring out variable names, file paths, error codes shouldn't be your job. focus on higher level things.

fair point. frontier models are better at inference than they were 18 months ago. my concern is more about edge cases in complex workflows where inference breaks down - not the average use case.

RaptorCI

Hey! This is super interesting to me as someone who has brought a lot of AI products into my businesses, but I have one question. I spend a lot of time refining guardrails for a project: goals, acceptance criteria, and a description of the workflow, such as following TDD. Is there a way to set this up so that some of it is reusable across projects and codebases, like we have with AGENTS.md today?