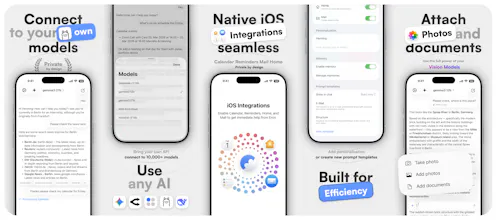

Launched this week

Eron

Portable Ollama instance for working with your own AI

131 followers

Connect your local or hosted instance using your URL and API key. All available models load automatically and you can switch between them, start new chats, or continue existing ones in a clean interface. The chat stays simple and responsive. You can add images or documents and use web search when needed. Eron also supports integrations like calendar events, reminders, Home control and email drafts. No accounts, no tracking. All data is sent only to your configured server.

Product Hunt

@curiouskitty Hey, everything goes only through the user’s configured server, including chats, uploads and model responses. There are no accounts, no tracking and no hidden routing. Web search is optional and clearly separated as an additional capability which you can turn off, so the boundary remains transparent and users always know what stays within their own setup.

Banyan AI Lite

Does it only work with Ollama? Is support for any other offline LLMs planned?

@davitausberlin Eron is built around OpenAI-compatible APIs and works equally well with local, self-hosted and hosted backends. That includes Ollama, but it's by no means limited to it. The experience stays clean, native and easy to use, regardless of the complexity behind the endpoint.
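Eron's own client code isn't shown here. As a rough illustration of what "OpenAI-compatible" means for a client like this, below is a minimal Python sketch using only the standard library. It assumes the configured server exposes `GET {base_url}/models` and `POST {base_url}/chat/completions` with the OpenAI request/response shapes (Ollama serves these under `http://localhost:11434/v1`). The function names are hypothetical and chosen for illustration, and the API key is sent as a Bearer token, which some local servers simply ignore.

```python
from __future__ import annotations

import json
import urllib.request

# Hypothetical helpers for illustration only; not Eron's actual implementation.
# Assumes base_url is an OpenAI-compatible root such as http://localhost:11434/v1.


def build_headers(api_key: str | None) -> dict[str, str]:
    """JSON headers, plus a Bearer token when an API key is configured."""
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return headers


def parse_model_ids(payload: dict) -> list[str]:
    """Extract model IDs from a GET /models response: {"data": [{"id": ...}, ...]}."""
    return [model["id"] for model in payload.get("data", [])]


def list_models(base_url: str, api_key: str | None = None, timeout: float = 10.0) -> list[str]:
    """Fetch the models available on the configured server."""
    req = urllib.request.Request(base_url.rstrip("/") + "/models", headers=build_headers(api_key))
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return parse_model_ids(json.load(resp))


def build_chat_body(model: str, history: list[dict], user_message: str) -> dict:
    """Append the new user turn to the existing chat without mutating history."""
    return {"model": model, "messages": history + [{"role": "user", "content": user_message}]}


def chat(base_url: str, api_key: str | None, model: str,
         history: list[dict], user_message: str, timeout: float = 120.0) -> str:
    """Send one chat turn to the configured server and return the assistant's reply text."""
    body = json.dumps(build_chat_body(model, history, user_message)).encode("utf-8")
    req = urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=body,
        headers=build_headers(api_key),
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

With a local Ollama running, `list_models("http://localhost:11434/v1")` would return the installed model names, and `chat(...)` would continue an existing conversation by passing its earlier messages as `history`. Because every request goes to `base_url`, swapping Ollama for another OpenAI-compatible backend only changes the URL and key.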