Banyan AI Lite

AI detecting & preventing SaaS churn

399 followers

Churn is the #1 killer of SaaS. Up to 50% of SaaS companies struggle with high churn. Banyan AI is here to help. Our tool enables you to detect churn before it happens and prevent it. With Banyan AI, you can unify your most critical revenue data (CRM, billing, support, product usage) into a single interface. Based on this data, you can identify churn risks and expansion opportunities (customers ready to buy). Time to value: minutes. Results: measurable and quantifiable. Churn prevented, revenue saved.

Banyan AI Lite

Hey Product Hunt 👋,

I’m Davit, co-founder of Banyan AI, and we’re excited to launch here for the first time.

Did you know that a 5% monthly churn rate can reduce your annual revenue by nearly half? Or that many SaaS companies lose 2–5% of their revenue to leakage? New leads matter, but your existing customers are your real treasure.
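A quick back-of-the-envelope check of the 5% figure, as a sketch only. It assumes churn compounds monthly on the starting recurring revenue, with no new sales or expansion during the year; the function name is made up for illustration.

```python
def retained_after(monthly_churn: float, months: int = 12) -> float:
    """Share of starting recurring revenue left after compounding monthly churn."""
    return (1.0 - monthly_churn) ** months


retained = retained_after(0.05)
# Compare the retained share with the share lost over 12 months.
print(f"retained: {retained:.1%}, lost: {1 - retained:.1%}")
```

Under these assumptions roughly 54% of the starting recurring revenue is still there after twelve months, so about 46% is gone, which is where "nearly half" comes from.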

If you're struggling with churn or finding it hard to expand revenue, we’ve got you covered. Welcome to Banyan AI 🌳🚀

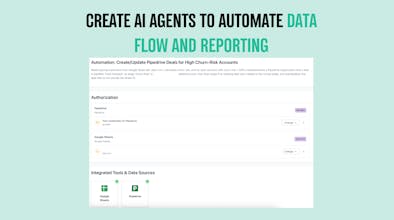

Our platform unifies data across your tool stack (billing, CRM, product analytics, support) and detects signals that are scattered across those tools. Banyan AI automatically detects:

Customers likely to churn

Hidden revenue leaks

Expansion opportunities

Instead of digging through dashboards, you get clear AI insights about your revenue health and what needs attention. Check out our website or our blog.

I’ll be here in the comments all day. Thanks for checking out Banyan AI 🙏

Tobira.ai

@davitausberlin Revenue leakage is the most underrated one. Lost 2-3% in a previous product for months before realizing it was just billing edge cases stacking up silently. Support tickets, usage, CRM all looked fine. What's the most common source of leakage you see across your customers?

Banyan AI Lite

@olia_nemirovski Thanks for the feedback and the comment. I think it is mostly differences between CRM and billing. For example, sales closes the deal at 100 USD/month, but the customer decides at the last minute to go for a lower tier, let's say 69. Now you have 100 in CRM and 69 in billing, without sales knowing about it. The result: you predict X and get Y. The solution: surface such cases, approach the customers, and ask them to stick to their word ;)
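To make the CRM vs. billing example concrete, here is a minimal sketch of how such a mismatch could be surfaced. It is not Banyan's implementation; the account names, the dictionary layout, and the `find_mismatches` helper are assumptions for illustration.

```python
# Hypothetical monthly amounts per account, in USD.
crm_deals = {"acme": 100.0, "globex": 250.0, "initech": 69.0}
billing = {"acme": 69.0, "globex": 250.0, "initech": 69.0}


def find_mismatches(crm, billed_amounts, tolerance=0.01):
    """Return (account, crm_amount, billed_amount) where the two systems disagree."""
    mismatches = []
    for account, expected in crm.items():
        billed = billed_amounts.get(account)  # None if the account is missing in billing
        if billed is None or abs(expected - billed) > tolerance:
            mismatches.append((account, expected, billed))
    return mismatches


for account, expected, billed in find_mismatches(crm_deals, billing):
    print(f"{account}: CRM says {expected}, billing says {billed}")
```

With the sample data above, only `acme` is flagged: 100 in CRM against 69 in billing.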

Really interesting direction.

From the outside Banyan reads as a churn detection / revenue analytics tool.

But looking at how the system actually behaves — unifying billing, product usage, support and CRM signals into a single layer — it feels closer to something deeper.

Almost like a decision layer for revenue, where the goal is not just to understand what’s happening, but to guide actions (who to save, when to act, where expansion exists).

If that evolves, it seems like the value shifts from “understanding churn” to “controlling revenue outcomes”.

Curious how you think about this internally.

Do you see Banyan primarily as an analytics layer, or evolving toward infrastructure that actively drives revenue decisions?

Banyan AI Lite

@cauan_martins Thanks Cauan, good observation. We prefer to be viewed as a decision layer for execs. Think of it as command central for decision makers.

@davitausberlin that makes a lot of sense, “command central for decision makers” is a strong framing

what’s interesting with that direction is that once you position as a decision layer, expectations shift quite a bit

it’s no longer just about surfacing insights, but about how much the system actually influences or drives decisions

curious how you think about that boundary, is Banyan more of a recommendation system for execs, or something that could eventually move toward more automated or system-driven actions?

In education, churn looks different than in typical SaaS — a student finishes a course and leaves, which is not churn, it is a natural end. But someone who stops halfway through is a completely different signal. How well does the detection handle that difference, where inactivity does not always mean risk?

Banyan AI Lite

@klara_minarikova Thanks for the question Klara! Well, if a natural end counts as churn, all of us have a 100% churn guarantee :D But jokes aside: a very good question. If a customer was less active during the last week, are they about to churn, or are they on vacation? You can add seasonality as a variable: simply ask the AI to calculate how customer behaviour might be related to seasonal patterns in the respective country. And then, most importantly, when you watch just one data stream (for example only billing, CRM, or product usage), your insights are limited. But now add other information layers, for example: how many support tickets during the last week? Any failed payments? And you get a clearer picture:

failed payment + less usage = churn signal

failed payment + less usage + many critical support tickets = strong churn signal

less usage + seasonality effect (summer) + recent upgrade = no churn signal
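The three combinations above can be written down as plain rules. This is only a sketch of the idea, not how Banyan evaluates accounts; the field names and the threshold for "many" critical tickets are assumptions.

```python
from dataclasses import dataclass


@dataclass
class AccountSignals:
    failed_payment: bool = False
    usage_dropped: bool = False
    critical_tickets: int = 0
    seasonal_lull: bool = False   # e.g. summer in the customer's country
    recent_upgrade: bool = False


def churn_signal(s: AccountSignals, many_tickets: int = 3) -> str:
    """Map combined signals to the three outcomes described in the comment."""
    if s.failed_payment and s.usage_dropped:
        if s.critical_tickets >= many_tickets:  # threshold is a placeholder
            return "strong churn signal"
        return "churn signal"
    if s.usage_dropped and s.seasonal_lull and s.recent_upgrade:
        return "no churn signal"
    return "unclassified"  # combinations the comment does not cover


print(churn_signal(AccountSignals(failed_payment=True, usage_dropped=True)))
print(churn_signal(AccountSignals(failed_payment=True, usage_dropped=True, critical_tickets=5)))
print(churn_signal(AccountSignals(usage_dropped=True, seasonal_lull=True, recent_upgrade=True)))
```

The point of the sketch is that lower usage on its own decides nothing; the label only changes once a second or third data stream is added.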

Banyan AI Lite

@klara_minarikova Great point, that’s exactly where a lot of generic churn models break.

Banyan doesn’t treat inactivity as a universal risk signal. It looks at behavior in the context of expected lifecycle. In your example, completing a course and dropping off is “healthy”, while stopping mid-way is not.

In practice, this means we define expected patterns first. Things like typical course duration, completion rates, and engagement milestones. Then we compare each user against that path rather than against a global average.

So inactivity after completion is ignored, while inactivity before key milestones gets flagged. Same signal, very different interpretation depending on context.

This is also why we let teams adjust logic based on their model, because education, SaaS, and marketplaces all behave very differently here.

@klara_minarikova Hi Klara, that's a good question. Most people don't think about churn that way.

You're right. A student finishing a course and leaving is a success, not churn. But someone stopping halfway through is a real risk signal.

The difference comes down to intent data. If a student stops engaging but still has weeks left in the course, that's a problem. If they stop because they finished, that's a win.

I'd like to see how Banyan handles that distinction too. Maybe something like allowing custom rules per customer type so natural endings don't get flagged as churn.

Banyan AI Lite

@klara_minarikova @taimur_haider1 Taimur, appreciate your comment! Banyan is like a whiteboard: you ask the AI and it does the work for you. Just make sure you feed it the data. Once it has data, you can ask it to dig as deep as you want. You can even ask it to measure the correlation between SaaS usage spikes and the weather in New York vs. the price of pizza in Napoli. Of course, it's a joke, but what I want to say is that there is no limitation and no predefined set of reports; it's pure flexibility. Like paradise for data nerds.

Banyan AI Lite

@klara_minarikova @taimur_haider1 Facts! The model needs to separate lifecycle completion from behavioral drop-off. In practice this means:

defining expected lifecycle per segment (course duration, contract term)

labeling terminal events as “successful completion” vs “early exit”

combining intent signals like engagement decay, inactivity, and remaining time

Banyan handles this via segment-specific rules + signal weighting, so churn is only triggered when behavior deviates from expected lifecycle, not when the lifecycle is fulfilled.
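A rough sketch of the lifecycle idea described above, separating "successful completion" from "early exit". The segment names, expected durations, and inactivity limit are hypothetical, and this is an illustration rather than Banyan's actual rules.

```python
from dataclasses import dataclass

# Hypothetical expected lifecycle length per segment, in days.
EXPECTED_DAYS = {"course_8_weeks": 56, "annual_contract": 365}
INACTIVITY_LIMIT_DAYS = 14  # placeholder threshold


@dataclass
class Customer:
    segment: str
    days_elapsed: int
    days_inactive: int
    completed: bool


def classify(c: Customer) -> str:
    """Flag inactivity only while the expected lifecycle is still running."""
    if c.completed:
        return "successful completion"
    remaining = EXPECTED_DAYS[c.segment] - c.days_elapsed
    if c.days_inactive >= INACTIVITY_LIMIT_DAYS and remaining > 0:
        return "early exit risk"
    return "on track"


finished = Customer("course_8_weeks", days_elapsed=60, days_inactive=20, completed=True)
stalled = Customer("course_8_weeks", days_elapsed=30, days_inactive=20, completed=False)
print(classify(finished))
print(classify(stalled))
```

Both customers have been inactive for 20 days, but only the one who stopped with time left in the course is flagged.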

The "text-to-API" approach for connecting data sources is clever – removing the integration bottleneck is probably the single biggest thing that determines whether a tool like this actually gets adopted or sits unused after the trial.

One question: at what point does Banyan become useful in terms of data volume? If I'm an early-stage SaaS with 50-100 customers, is there enough signal for meaningful churn predictions, or does this really shine once you hit a certain scale?

Banyan AI Lite

@aaron0403 Very good question! I'd say it starts to get relevant at over 50 paid accounts. When you have 10-20 customers, you know everyone by name, you still remember how you closed them, and you know what to expect. As the number grows, you lose track on the "human level" and you need data. I think 50 accounts is a good place to start, especially if they pay not 10-20 USD/month but a medium or large SaaS ticket.

Banyan AI Lite

@aaron0403 I agree with Konstantin's response. Once churn starts to hurt, it's time to turn to a data-driven approach.

Good luck team! Cool idea. How long does the setup usually take if someone wants to connect their SaaS tools?

Banyan AI Lite

@steffen_rehmann Thanks for asking, Steffen. Normally it takes just minutes. Most tools can be connected via OAuth2 or a bearer token. The hardest part (in some tools) is finding these tokens. But once you have them, it takes a minute, give or take.
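For readers unfamiliar with bearer tokens, this is roughly what such a connection looks like on the wire. The endpoint URL and token are placeholders, not a real Banyan or vendor API; the sketch only builds the request with Python's standard library and does not send it.

```python
import urllib.request

API_URL = "https://api.example.com/v1/customers"  # hypothetical endpoint
TOKEN = "YOUR_TOKEN_HERE"  # placeholder; copy the real one from the tool's settings


def build_request(url: str, token: str) -> urllib.request.Request:
    """Attach the token in the standard 'Authorization: Bearer <token>' header."""
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {token}", "Accept": "application/json"},
    )


request = build_request(API_URL, TOKEN)
print(request.get_header("Authorization"))
# To actually call the API: urllib.request.urlopen(request)
```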

Banyan AI Lite

@steffen_rehmann Thanks a lot, appreciate it!

Setup is usually pretty quick. Most teams are up and running in under an hour. Connecting core tools like billing, CRM, and support is straightforward, and you start seeing first insights shortly after.

This looks pretty useful, especially for teams that struggle with churn but don’t have a clear way to connect all their data. Pulling CRM, billing, support, and product usage into one place makes a lot of sense.

Curious how accurate the churn predictions are in practice though. Is it more rule-based or does it adapt over time as it learns from the data?

Banyan AI Lite

@zerodarkhub It's predictive in the sense that it can generate a likelihood of churn: for example, based on 10 different factors, one account has a 10% probability and another 70%. You can adjust the reports and factors so that they better reflect your experience with your customers. For example, a high number of support tickets may not be a churn signal for you, but rather a positive one (customers are engaged with the tool). Or a short trial-to-paid period isn't necessarily a sign of good account health (which the AI might assume), but rather of an "easy come, easy go" mentality. You can take these effects into account while creating reports and scanning accounts.
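One way to picture "adjustable factors" is a weighted score squashed into a probability. The logistic form, the factor names, and all weights below are assumptions chosen for illustration; the thread does not say which model Banyan uses.

```python
import math


def churn_probability(factors: dict, weights: dict, bias: float = -2.0) -> float:
    """Logistic score: a weighted sum of factors mapped into the range 0..1."""
    score = bias + sum(weights[name] * value for name, value in factors.items())
    return 1.0 / (1.0 + math.exp(-score))


# Hypothetical account: factor values scaled to roughly 0..1.
account = {"usage_drop": 0.6, "failed_payments": 1.0, "support_tickets": 0.8}

# Default assumption: many support tickets push churn risk up.
default_weights = {"usage_drop": 2.0, "failed_payments": 1.5, "support_tickets": 1.0}
# Adjusted view: tickets mean engagement, so they pull risk down.
adjusted_weights = {**default_weights, "support_tickets": -1.0}

p_default = churn_probability(account, default_weights)
p_adjusted = churn_probability(account, adjusted_weights)
print(f"default: {p_default:.0%}, adjusted: {p_adjusted:.0%}")
```

Flipping the sign of a single weight is enough to lower the estimated risk for the same account, which is the kind of adjustment described above.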

Congrats on the launch! What signals have ended up being the most accurate early indicators of churn in your models so far?

Banyan AI Lite

@thegreatphon It is the change in usage. If an account reduces its usage significantly over a specific period of time (adjusted for seasonality and random events), this can be a major predictor.
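A small sketch of what "adjusted for seasonality" could mean in numbers. The seasonal factor and the usage figures are invented, and the formula is one simple option rather than Banyan's method.

```python
def seasonally_adjusted_drop(current: float, baseline: float, seasonal_factor: float) -> float:
    """Relative drop versus the usage expected for this season (0.25 means 25% below)."""
    expected = baseline * seasonal_factor
    return (expected - current) / expected


# Hypothetical account: 1000 events per week normally, summer usually runs at 80% of that.
mild = seasonally_adjusted_drop(current=760, baseline=1000, seasonal_factor=0.8)
severe = seasonally_adjusted_drop(current=400, baseline=1000, seasonal_factor=0.8)
print(f"mild: {mild:.0%} below expected, severe: {severe:.0%} below expected")
```

Without the adjustment, 760 events would look like a 24% drop against the normal baseline; against the summer expectation it is only about 5%, while 400 events is still a 50% drop.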