Launched this week

Google Search Live

Interactive, multimodal conversation in AI Mode

360 followers


Google is expanding Search Live, which was teased during last year's event, globally to all languages and locations where AI Mode is available.

It's super cool man!

Indie.Deals

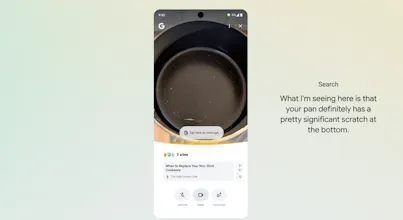

**Search Live** is now available globally: 200+ countries, all languages, voice and camera included.

Powered by Gemini 3.1 Flash Live, conversations are more natural, multilingual by default, and work the way you think: just speak.

**How it works:**

- Open the Google app on Android or iOS and tap the Live icon under the Search bar.

- Ask questions out loud and get audio responses, with follow-up support and web links.

- Enable your camera to add visual context: Search sees what you see and responds accordingly.

- Already using Google Lens? Tap Live at the bottom to start a real-time conversation about what's in front of you.

Check out the video demo here

@adithya How well does the camera handle real-world chaos, like identifying ingredients in a busy Indian market while chatting in Hindi?

Features.Vote

the expansion to all languages is the part that actually matters here. Search Live in English is already impressive, but the real unlock is when someone speaking Portuguese or Hindi can point their camera at something and have a live conversation about it.

curious how the multimodal memory works across a session, though. If I show it something early in the conversation, does it still reference that context when I ask a follow-up a few exchanges later?

Congrats on the global rollout; this is huge for making AI search actually conversational instead of just typed queries. The camera integration sounds really clever for those moments when you need to ask about something right in front of you but can't quite describe it in words.