LTX Desktop

Local open-source LTX video editor optimized for GPUs

88 followers

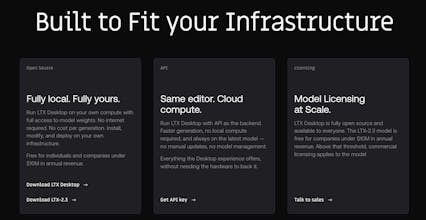

LTX Desktop combines a full non-linear video editor with on-device AI generation. Free, open-source, runs locally on your machine. Powered by LTX-2.3.

Hey Hunters, I am excited to hunt LTX Desktop today! 🚀

If an engine is truly powerful, it should enable real products to be built on top of it — and that’s exactly the idea behind LTX-2.3.

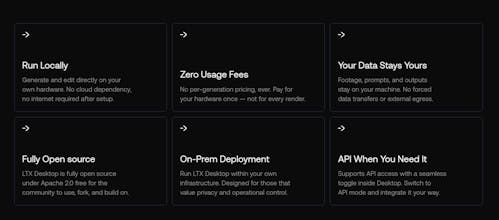

LTX Desktop is a fully local, open-source video editor running directly on the LTX engine, optimized for NVIDIA GPUs and compatible hardware. That means:

• No mandatory cloud dependency

• No per-generation pricing

• Your data stays on your own device

The engine is the hard part — the interface shouldn’t be. And now it’s yours, free.

LTX Desktop is a great demonstration of what the LTX engine can already power, and more importantly, what developers and creators can build with it.

Curious to hear what the community thinks and what you would build on top of this engine. 👇

What specific SDK or API capabilities does the LTX-2.3 engine provide for developers who want to build their own specialized video tools or integrate the engine into existing creative workflows?

Nice to see LTX go desktop. Curious whether the local rendering communicates back to your cloud at all for model updates or asset sync, or is it fully air-gapped once installed? That would change a lot about how teams think about data residency.

The on-device AI generation with no per-gen pricing is compelling, but I'm curious about the minimum hardware sweet spot for smooth workflows. What's the minimum GPU spec you'd recommend for this?

The video quality is excellent! Congratulations on the launch. Are there any topic restrictions when generating?

Love that this runs fully local with no per-generation pricing. The open-source NLE + on-device AI combo is exactly what creators need to experiment freely without worrying about cloud costs.

There is a growing population of users with Apple devices with those specs, and your listing says "runs locally on your machine" even though Apple (macOS) is excluded. You may want to mention that.