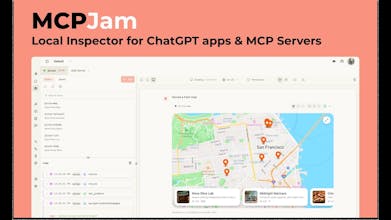

MCPJam inspector is the local testing tool for ChatGPT apps and MCP servers. Build your apps locally with MCPJam's widget emulator and test against any LLM in the playground. Inspect your MCP server’s tools, resources, prompts, and OAuth flows. No more ngrok or ChatGPT subscription needed.

MCPJam Inspector

Hey, it's Matt from MCPJam 👋.

We started MCPJam because the dev experience around building ChatGPT apps, MCP apps, and MCP servers wasn't great.

To develop a ChatGPT app, you had to get a ChatGPT Pro subscription, use ngrok to expose your local MCP server, and then connect to it remotely from ChatGPT. There were also no good ways to test MCP servers and see how they behave in a real production environment.

That inspired us to build our open-source inspector at MCPJam. We introduced:

A local ChatGPT and MCP apps emulator

An LLM playground to test your MCP servers and apps in a real chat environment with any LLM

An OAuth debugger to visualize MCP authorization at every step

And a bunch of other features that address the pains of MCP development.

If you're building a ChatGPT app, MCP app, or MCP server, I highly encourage you to give us a try! Feel free to email me if you have any questions or feedback.

GitHub: https://github.com/MCPJam/inspector

Email: matthew@mcpjam.com

@matteo8p Congrats on the launch. Does it also work with Claude Sonnet?

MCPJam Inspector

@austin_heaton Thank you, Austin! It does support Claude Sonnet 4.5. You can provide your own API key, but we also offer Sonnet for free!

Nice focus on the local development workflow - the "no more ngrok or ChatGPT subscription needed" angle is a real pain point.

The tool inspector for resources, prompts, and OAuth flows looks especially useful. Debugging OAuth in MCP integrations has been frustrating in my experience.

One question: does the LLM playground support streaming responses, or is it request/response only? We've found streaming adds complexity when testing tool interactions.

Great launch - the MCP tooling ecosystem needs more developer-focused products like this.

MCPJam Inspector

@kxbnb The LLM playground has both. If you bring your own API key, responses are streamed. We also provide models for free, and those return complete, non-streamed responses. We haven't seen many issues with streaming complexity in tool interactions. What have you run into?

@matteo8p The main thing we've hit: when a tool call fails mid-stream, it's hard to tell if the issue was in the LLM's generated arguments, the tool's response parsing, or something in the transport layer. The streamed partial responses make it tricky to reconstruct what actually happened.

We ended up building toran.sh partly for this - it sits in front of the upstream API and shows you the exact request/response without touching your code. Helpful when you're not sure if the bug is in your MCP server or in how the LLM formatted the call.

Curious if you've thought about adding request/response capture to the Inspector - would pair well with the tool testing flow you already have.

MCPJam Inspector

@kxbnb We've been using the Vercel AI SDK for streaming. It handles tool calling really well, even with partial responses.

We do surface JSON-RPC messages in the inspector; that's the lowest request/response layer.

The OAuth debugger looks really useful for troubleshooting authentication flows. I'm curious about testing MCP servers that connect to multiple external services — does the inspector support visualizing parallel OAuth flows or handling multiple tokens at once?

MCPJam Inspector

@yamamoto7 The OAuth debugger inspects OAuth of a single MCP server. Parallel OAuth flows are not a part of the MCP spec!

@matteo8p The ngrok + ChatGPT subscription tax for local dev is such an underrated pain point. Every extra hoop kills momentum. We’re building consumer-facing AI (group chat participant) and the iteration bottleneck hits us too. Do you see MCPJam eventually supporting testing with custom personas/personalities, or is it purely for tool/server validation?

MCPJam Inspector

@michael_trifonov Could you elaborate on what you mean by custom personas? Are you talking about configuring the LLM? We do have an LLM playground where you can test your apps with different models, system prompts, temperatures, etc., if that's what you're asking!

MCPJam has been my go-to tool for testing and evaluating my remote MCP server, ax-platform. I'm a huge fan of the SDK for automated testing, and the chat is fantastic for having AI evaluate the tools. I consider it more of a UAT from an agent's perspective on how the tools work for them. Great job, MCPJam team!

MCPJam Inspector

@jacob_taunton Thank you so much, Jacob! Awesome to hear that you're adopting MCP evals.

Our team at Tadata builds MCP servers every day. We maintain one of the leading open source MCP projects and are shipping a large number of MCP servers for our agent builder. And we're huge fans of the OG MCPJam tool for debugging OAuth issues -- the visual flow is so helpful! Excited to try out the new stuff.

MCPJam Inspector

@tori_seidenstein Thanks for the support, Tori! We've been following Tadata for a long time, and it's awesome to see your work on FastAPI MCP and now the agent builder on top of MCP. Really quality stuff.

MCPJam is my go-to testing tool when I build ChatGPT apps. The UI is neat, and it provides all the tools you need to debug your apps!

MCPJam Inspector

@bruno_perez Thanks for sharing, Bruno!!