Mosaic

Zapier for Video Editing

246 followers

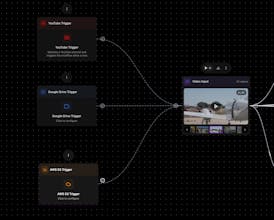

Mosaic allows you to automate any video edit, from rough cuts to motion graphics and anything in between. Our node-based canvas is an interface for setting up video editing workflows that scale. Once created, these workflows can be reused as templates or triggered programmatically via API or event-based triggers. At any step along the way, you can export your timeline back into traditional tools such as Premiere Pro, Final Cut, or DaVinci Resolve, or into popular media asset management software.