NexaSDK for Mobile

Easiest solution to deploy multimodal AI to mobile

643 followers

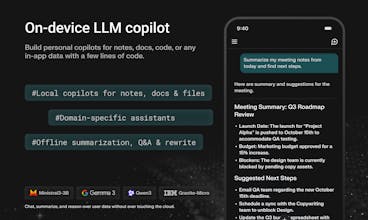

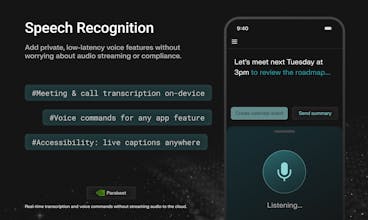

NexaSDK for Mobile lets developers run the latest multimodal AI models fully on-device in iOS and Android apps, with Apple Neural Engine and Snapdragon NPU acceleration. In just 3 lines of code, build chat, multimodal, search, and audio features with no cloud cost, complete privacy, 2× faster speed, and 9× better energy efficiency.

Fish Audio

NexaSDK for Mobile

@hehe6z Thank you, and we look forward to more feedback!

BizCard

Any plans for on-device guardrails / safety filters? (Especially for multimodal inputs)

MYND

NexaSDK for Mobile

@shashank_keshri Thank you Shashank for the support!

Love seeing Snapdragon NPU + Apple Neural Engine mentioned explicitly. Most SDKs hand-wave performance.

GNGM

What types of models are supported today (e.g., language, vision, speech), and how easy is it to bring your own model?

NexaSDK for Mobile

@polman_trudo We support language (LLMs), vision (VLMs and CV models), and speech (ASR models). Our SDK has a converter that lets enterprise customers bring their own models.

NexaSDK for Mobile

@polman_trudo We support almost all model types and tasks: vision, language, ASR, and embedding models. NexaSDK is the only SDK that supports the latest state-of-the-art models on NPU, GPU, and CPU. It is easy to bring your own model, and we will release an easy-to-use converter tool soon.

Any support for a "hybrid mode"? Local inference by default, with optional cloud fallback for bigger tasks?

NexaSDK for Mobile

@qiwap Thanks for the feedback. This is a great idea and it is on the roadmap!

Zawa

This is huge for cutting cloud costs. We've been hesitant to add heavy multimodal features just because of the API bills and latency, but running this on-device solves both. 9x energy efficiency is wild if it holds up in real-world usage. Does this support custom quantized models yet?

NexaSDK for Mobile

@tony_hsieh2 Thank you, Tony, for the support. Please let me know if you have any questions or feedback. Yes, we support custom quantized models.