Launching today

OpenBerth

AI-assistant native self-hosted deployment platform

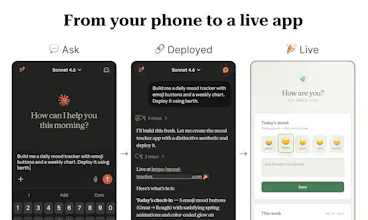

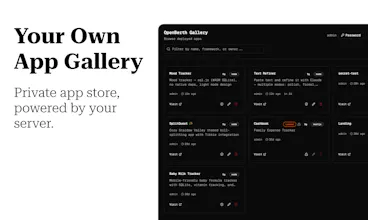

OpenBerth is a self-hosted deployment platform that AI assistants can use natively. Tell Claude, your agent, or any AI tool you use on your phone or desktop to deploy an app, and it does. Store and use secrets securely: the AI references them by name without seeing their values. Works from the CLI, AI chat, or a single file drop. Your server, your rules. Connect any AI tool to the MCP server and start building.

The secrets-by-name-without-seeing-values architecture is the most interesting part here. How does that work exactly when Claude is orchestrating a deployment — does the MCP server do a local substitution at runtime, or are the values never in the process environment that the LLM context can reach? The threat model matters a lot depending on the answer.

@sounak_bhattacharya Secrets are stored encrypted at rest on the server. When Claude (or any AI client) calls berth_secret_list/get, the MCP response includes only names (for reference) and descriptions (so the LLM knows each secret's purpose and when to use it); values never enter the LLM context.

At deployment time, the server decrypts the secrets and injects them as environment variables into the container (which is isolated with gVisor, so there is no access outside the running instance). This happens entirely server-side; the MCP protocol never transmits secret values.

So the boundary is: the AI agent knows which secrets exist and can reference them by name in deployment configs (or when the user asks it to, for instance, "use my stored Stripe key when integrating with Stripe"), but the actual values live only on the server and inside the running container. Anyone outside that boundary can refer to a secret without knowing its value.
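To make that boundary concrete, here is a minimal Python sketch of the idea, not OpenBerth's actual code. Everything in it (`SecretStore`, `list_for_agent`, `resolve_env`, `deploy`, the config shape) is a hypothetical name chosen for illustration, and encryption at rest and the container launch are deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Secret:
    name: str
    description: str
    value: str  # assumption: a real server would keep this encrypted at rest


class SecretStore:
    """Hypothetical server-side store, for illustration only."""

    def __init__(self) -> None:
        self._secrets: dict[str, Secret] = {}

    def put(self, name: str, description: str, value: str) -> None:
        self._secrets[name] = Secret(name, description, value)

    def list_for_agent(self) -> list[dict[str, str]]:
        # What the AI client sees: names and descriptions, never values.
        return [
            {"name": s.name, "description": s.description}
            for s in self._secrets.values()
        ]

    def resolve_env(self, names: list[str]) -> dict[str, str]:
        # Server-side only: map referenced names to environment variables.
        missing = [n for n in names if n not in self._secrets]
        if missing:
            raise KeyError(f"unknown secrets: {missing}")
        return {n: self._secrets[n].value for n in names}


def deploy(store: SecretStore, config: dict) -> dict:
    """Resolve secrets on the server and report only what was injected."""
    env = store.resolve_env(config.get("secrets", []))
    # A real server would start the sandboxed container with `env` here.
    # The result handed back to the agent carries names only.
    return {"app": config["app"], "injected": sorted(env)}


store = SecretStore()
store.put("STRIPE_KEY", "Stripe API key for payments", "sk_test_123")

agent_view = store.list_for_agent()
result = deploy(store, {"app": "shop", "secrets": ["STRIPE_KEY"]})
```

In this sketch the agent-facing calls (`list_for_agent` and the return value of `deploy`) never contain a secret value; only `resolve_env`, which runs on the server, touches it.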

How does this compare to tools like Dokku?

@nayan_surya98 Great question! Your AI tools can deploy directly via MCP, with no CLI or git push required. OpenBerth also provides a development sandbox with hot reloading, so you can iterate on live code before promoting it to production.

While other platforms excel in their own areas, they weren’t specifically designed for AI-assisted workflows. OpenBerth, on the other hand, is built with this focus in mind.

Additionally, OpenBerth includes built-in secret management, enabling your AI tools to securely reference and use secrets within a project, without exposing API keys.

You can explore a full comparison in the documentation: https://openberth.io/docs/compare