Launching today

OpenBox

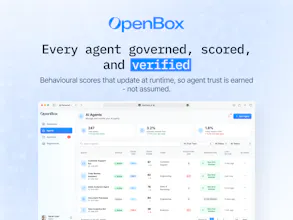

See, verify, and govern every agent action.

299 followers

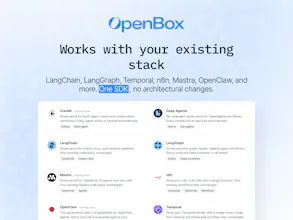

OpenBox provides a trust platform for agentic AI, delivering runtime governance, cryptographic verification, and enterprise-grade compliance. Integrates via a single SDK with LangChain, LangGraph, Temporal, n8n, Mastra, and more. Available to every organization with no usage limits.

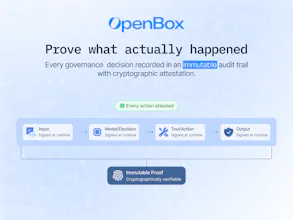

Stoked to see this launch! The cryptographic audit trails piece is what really caught my attention here. How do you handle the performance overhead when you're signing every single agent action in a high-throughput environment?

OpenBox

@zerotox Hi, thanks for the question. Each governance event, together with its governance decision, is hashed and added to a Merkle tree asynchronously, both before and after the agent's actual execution. At session end, the tree is finalized and signed once. The signing worker scales independently from governance, so it never becomes a bottleneck.
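A minimal sketch of the scheme described above, assuming SHA-256 leaves, a simple binary Merkle tree, and HMAC as a stand-in for the real signature (the Python standard library has no asymmetric signing; a production system would more likely use something like Ed25519). `SessionAuditLog`, `record`, `finalize`, and `verify_session` are hypothetical names, not OpenBox's API. It illustrates why per-action overhead stays small: each event costs one hash, and signing happens once per session.

```python
import hashlib
import hmac
import json
from typing import Dict, List

# Domain-separation prefixes (as in RFC 6962) so a leaf hash can never be
# confused with an internal node hash.
LEAF_PREFIX = b"\x00"
NODE_PREFIX = b"\x01"


def hash_event(event: Dict) -> bytes:
    """Hash one governance event as a Merkle leaf (canonical JSON encoding)."""
    data = json.dumps(event, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(LEAF_PREFIX + data).digest()


def merkle_root(leaves: List[bytes]) -> bytes:
    """Fold leaf hashes pairwise up to a single root hash."""
    if not leaves:
        return hashlib.sha256(b"").digest()
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        level = [
            hashlib.sha256(NODE_PREFIX + level[i] + level[i + 1]).digest()
            for i in range(0, len(level), 2)
        ]
    return level[0]


class SessionAuditLog:
    """Collects per-event hashes during a session; signs once at the end."""

    def __init__(self, session_id: str) -> None:
        self.session_id = session_id
        self.leaves: List[bytes] = []

    def record(self, event: Dict) -> None:
        # Per-event cost is a single SHA-256; no signing on the hot path.
        self.leaves.append(hash_event(event))

    def finalize(self, signing_key: bytes) -> Dict:
        root = merkle_root(self.leaves)
        # HMAC stands in for a real asymmetric signature (assumption).
        signature = hmac.new(signing_key, root, hashlib.sha256).hexdigest()
        return {
            "session_id": self.session_id,
            "event_count": len(self.leaves),
            "root": root.hex(),
            "signature": signature,
        }


def verify_session(sealed: Dict, leaves: List[bytes], signing_key: bytes) -> bool:
    """Recompute the root from the stored leaves and check the signature."""
    root = merkle_root(leaves)
    expected = hmac.new(signing_key, root, hashlib.sha256).hexdigest()
    return root.hex() == sealed["root"] and hmac.compare_digest(expected, sealed["signature"])
```

In a real deployment, `record` would likely be fed from a background queue so hashing stays off the agent's critical path, and `finalize` would run on the separately scaled signing worker.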

ConnectMachine

I've been dealing with audit nightmares from our ML ops team, and it seems that OPA policy engine integration could actually save us months of compliance work.

OpenBox

@syed_shayanur_rahman Glad to hear that! This is exactly the kind of audit/compliance pain we’re solving with OpenBox.

We’ve been helping teams streamline OPA integrations and reduce audit overhead significantly. Happy to walk you through how it could fit your setup.

Would love to connect. Feel free to drop me a note at aswin@openbox.ai, or we can set up a quick call.

Documentation.AI

I've been thinking about this space a lot lately, and honestly, most governance solutions I've seen are either too heavyweight for dev teams or just basic logging that doesn't actually prevent anything bad from happening.

How does this handle the performance hit when you're doing real-time policy checks on every agent action, especially for high-frequency workflows where latency actually matters?

OpenBox

@roopreddy We provide a "Trust Lifecycle" in which an agent needs to gain trust over its lifetime. Think of it like a human employee: the more trusted and experienced it becomes, the less frequently and thoroughly you need to check its work. With our Trust Tier, you can configure how much checking overhead each agent needs.
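One way to picture trust tiers in code, as a minimal sketch: the tier names, check names, sampling rates, and promotion threshold below are all hypothetical and are not OpenBox's configuration. Low-trust agents get the expensive review on every action, higher-trust agents get it only on a deterministic sample, and any violation drops an agent back to the lowest tier.

```python
import hashlib
from dataclasses import dataclass
from enum import Enum
from typing import List


class TrustTier(Enum):
    PROBATION = 0
    STANDARD = 1
    TRUSTED = 2


@dataclass(frozen=True)
class TierPolicy:
    always_checks: tuple    # cheap checks run on every action
    deep_check_rate: float  # fraction of actions that also get the expensive review


# Hypothetical tier settings; real values would be configured per deployment.
TIER_POLICIES = {
    TrustTier.PROBATION: TierPolicy(("schema", "policy"), 1.0),
    TrustTier.STANDARD: TierPolicy(("schema", "policy"), 0.25),
    TrustTier.TRUSTED: TierPolicy(("schema",), 0.05),
}
PROMOTE_AFTER = 50  # clean actions required before moving up one tier


def _sample(action_id: str) -> float:
    """Map an action id to a stable pseudo-random value in [0, 1)."""
    digest = hashlib.sha256(action_id.encode()).digest()
    return int.from_bytes(digest[:8], "big") / 2**64


def checks_for(tier: TrustTier, action_id: str) -> List[str]:
    """Return the checks to run for this action given the agent's tier."""
    policy = TIER_POLICIES[tier]
    checks = list(policy.always_checks)
    if _sample(action_id) < policy.deep_check_rate:
        checks.append("deep_review")
    return checks


def next_tier(tier: TrustTier, clean_streak: int, violation: bool) -> TrustTier:
    """Demote to probation on any violation; promote one tier after a clean streak."""
    if violation:
        return TrustTier.PROBATION
    if clean_streak >= PROMOTE_AFTER and tier is not TrustTier.TRUSTED:
        return TrustTier(tier.value + 1)
    return tier
```

Hashing the action id, rather than calling a random generator, keeps the sampling decision reproducible, which helps when an auditor later asks why a given action was or was not deeply reviewed.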

In addition, we combine static and dynamic enforcement at different points, and short-circuit aggressively to optimize.
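A sketch of static-then-dynamic ordering with short-circuiting, assuming a hypothetical rule interface where static rules either decide or defer and dynamic checks return pass/fail. The rule names and tool sets are illustrative only. Actions decided by cheap in-memory rules never pay for the slower dynamic checks.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

ALLOW, DENY = "allow", "deny"


@dataclass
class Action:
    tool: str
    args: Dict = field(default_factory=dict)


# A static rule inspects only the action itself and either decides
# (ALLOW/DENY) or returns None to defer to later checks.
StaticRule = Callable[[Action], Optional[str]]
# A dynamic check may consult runtime state (rate limits, external lookups)
# and returns True if the action passes.
DynamicCheck = Callable[[Action], bool]

DENYLIST = {"delete_database"}     # hypothetical examples
READ_ONLY_TOOLS = {"search_docs"}


def deny_listed(action: Action) -> Optional[str]:
    return DENY if action.tool in DENYLIST else None


def read_only_allowed(action: Action) -> Optional[str]:
    return ALLOW if action.tool in READ_ONLY_TOOLS else None


# Deny rules go first so an explicit denial is never masked by a broader allow.
STATIC_RULES: List[StaticRule] = [deny_listed, read_only_allowed]


def evaluate(action: Action, static_rules: List[StaticRule],
             dynamic_checks: List[DynamicCheck]) -> str:
    # 1. Cheap static rules first; the first decisive verdict ends evaluation.
    for rule in static_rules:
        verdict = rule(action)
        if verdict is not None:
            return verdict
    # 2. Only undecided actions pay for dynamic checks; stop at the first failure.
    for check in dynamic_checks:
        if not check(action):
            return DENY
    return ALLOW
```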

In short, there is still some latency, but it should not be noticeable.

Nice work on this. How does it integrate with existing agent frameworks like LangChain or similar tools?

Thanks, @riya_singh91.

OpenBox is built to plug into existing agent frameworks like LangChain, LangGraph, Temporal, n8n, Mastra, and similar stacks through a single SDK, so teams can add governance, verification, and runtime visibility without rebuilding their workflows.

Here's some more detailed info: https://docs.openbox.ai/getting-started/
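The SDK's own API is documented at the link above and is not reproduced here. As a framework-agnostic illustration only, the general idea of adding governance around existing tools without rewriting them can be sketched as a Python decorator; `governed`, `ActionBlocked`, and the email policy are hypothetical. Many agent frameworks (LangChain, for example) let you expose plain Python functions as tools, which is why a wrapper of this shape can slot in without changing the surrounding workflow.

```python
import functools
import inspect
from typing import Any, Callable, Dict, List


class ActionBlocked(Exception):
    """Raised when the policy denies a tool call (hypothetical)."""


Policy = Callable[[str, Dict[str, Any]], bool]


def governed(policy: Policy, events: List[Dict[str, Any]]):
    """Wrap a tool function with a pre-call policy check and before/after events."""
    def decorator(fn):
        sig = inspect.signature(fn)

        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            # Normalize positional and keyword arguments into one dict for the policy.
            call_args = dict(sig.bind(*args, **kwargs).arguments)
            allowed = policy(fn.__name__, call_args)
            events.append({"tool": fn.__name__, "phase": "before",
                           "args": call_args, "decision": "allow" if allowed else "deny"})
            if not allowed:
                raise ActionBlocked(f"{fn.__name__} blocked by policy")
            result = fn(*args, **kwargs)
            events.append({"tool": fn.__name__, "phase": "after", "result": repr(result)})
            return result

        return wrapper

    return decorator


# Example: an existing tool, unchanged except for the decorator line.
audit_events: List[Dict[str, Any]] = []


def internal_email_only(tool: str, args: Dict[str, Any]) -> bool:
    return tool != "send_email" or args["to"].endswith("@example.com")


@governed(internal_email_only, audit_events)
def send_email(to: str, body: str) -> str:
    return f"sent to {to}"
```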

Love the direction here. Are you targeting enterprise use cases first or keeping it flexible for smaller teams as well?

Hey @pulkit_maindiratta

We've launched an enterprise-grade product that is accessible to teams of all sizes, so you can meet your compliance and governance needs.

At the same time, we're also working with larger enterprises that need more custom setups and deeper integrations.

Simple Utm

This is a problem that does not get enough attention yet. Everyone is focused on making agents more capable, but the question of "how do you prove they acted within policy" is going to matter a lot more as agents start touching real workflows at scale.

The cryptographic verification angle is interesting. Most governance approaches I have seen are audit logs after the fact. Proving compliance at the point of execution is a different thing entirely.

Question: how does OpenBox handle governance for agents that are pulling context from multiple systems with different access policies? For example, an agent that reads from both a public knowledge base and a restricted HR system in the same workflow. Does the governance layer enforce per-source permissions, or is it more at the action level?

Really thoughtful point, @najmuzzaman, and you’re right: this is exactly where governance starts to matter as agents move into real workflows.

It’s handled at both the source and action level. Each context source is evaluated with its own identity and access policy, so an agent can read from public data while restricted systems like HR remain permission-gated.

When the agent composes a workflow, OpenBox then checks whether that specific action is allowed given the combined context, and can block or redact steps if sensitive data would flow into an unauthorized tool or output.
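A minimal sketch of the two levels described above, using the HR example from the question. The roles, sources, labels, and sinks are hypothetical, and this is not OpenBox's implementation. `read_source` enforces per-source access and tags what it returns with a sensitivity label; `compose_output` is the action-level check that redacts (or blocks) labeled data flowing into a destination not cleared for it.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

# Hypothetical policy tables for illustration only.
SOURCE_READERS: Dict[str, Set[str]] = {  # source -> roles allowed to read it
    "public_kb": {"support_agent", "hr_agent"},
    "hr_system": {"hr_agent"},
}
SOURCE_LABELS: Dict[str, str] = {"public_kb": "public", "hr_system": "restricted"}
SINK_CLEARANCE: Dict[str, Set[str]] = {  # sink -> labels it may receive
    "public_channel": {"public"},
    "hr_case_notes": {"public", "restricted"},
}


class AccessDenied(Exception):
    pass


@dataclass
class AgentContext:
    role: str
    snippets: List[Tuple[str, str]] = field(default_factory=list)  # (label, text)


def read_source(ctx: AgentContext, source: str, fetched_text: str) -> None:
    """Source-level check: the agent's role must be allowed to read this source.

    `fetched_text` stands in for whatever the source actually returned.
    """
    if ctx.role not in SOURCE_READERS.get(source, set()):
        raise AccessDenied(f"{ctx.role} may not read {source}")
    # Tag the retrieved data with its source's sensitivity label.
    ctx.snippets.append((SOURCE_LABELS[source], fetched_text))


def compose_output(ctx: AgentContext, sink: str, on_violation: str = "redact") -> str:
    """Action-level check: every label flowing into the sink must be cleared for it."""
    cleared = SINK_CLEARANCE.get(sink, set())  # unknown sinks are cleared for nothing
    parts = []
    for label, text in ctx.snippets:
        if label in cleared:
            parts.append(text)
        elif on_violation == "block":
            raise AccessDenied(f"{label} data may not flow to {sink}")
        else:
            parts.append("[REDACTED]")
    return " ".join(parts)
```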

OpenBox

What Tahir has laid out here is what we have been building toward: a platform that governs every agent action at the point of execution, with full observability and cryptographic proof, from day one. If you are building with agents and want to understand how it works technically, happy to answer everything here.