SigmaShake - AI Agent Guardrails

CONTROL your AI Agents before they control YOU

SigmaShake is a sub-millisecond governance engine for AI agents. It enforces deterministic rules, prevents destructive commands, and audits tool calls. Check it out at https://sigmashake.com. It is free for 5,000 tool evaluations per day, and the Pro subscription, with unlimited tool evaluations and more, is 50% off.

What inspired me to build this?

AI Agents are accessing our production code, databases, and cloud infrastructure. From a Security Engineer's perspective, this problem is getting worse and has made front-page news.

This led me to investigate the root cause. AI contributes outdated and unsafe code because it was trained on old data and doesn't know to use the latest versions that fix security vulnerabilities. AI also circumvents a Coding Agent's deny permissions when it doesn't get its way. LLMs suffer from context overload, ignore instructions, and the developer can't spell out every command in advance. LLMs get confused by complex git repos and simply don't understand how dangerous 'rm -rf' or 'git push --force' can be, or that either can permanently destroy work. Keeping permissions, system prompts, skills, and AGENT markdown files in sync across several projects just drove me insane.

I wanted the massive productivity boost of AI Agent coding speed without the constant hand-holding. Humans are the bottleneck, and we are tired. A rogue command nuking my workspace, making my git repos public, or leaking credentials while I used Claude Code, Codex, Antigravity, or Cursor in "YOLO" mode would cost me all my hard work (not to mention the tokens).

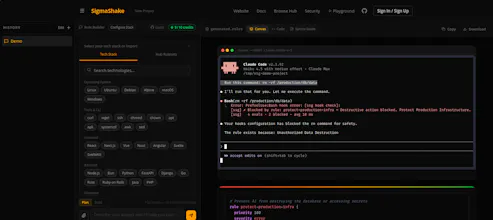

If this happened at a company that uses Claude Code with no restrictions, what's the worst that could happen? We have already read about Anthropic leaking Claude Code source code through a single config change, AWS junior engineers pushing changes that took down production, and users nuking their backups during a migration.

What problem was I trying to solve?

It started when I was running Claude Code and reaching 'AI Psychosis'. An IT team had rolled out access to Claude Code with absolutely zero guardrails; it was basically Darwin's law. I quickly realized there were several "footguns" that completely destroyed my productivity and could leave a computer useless until repaired. I know some will say 'Skill Issue', but I was spending hours battling the AI Agent: left without other tools, it would use outdated and unsafe code implementations and outdated versions of software, making my tools and apps vulnerable to really dangerous exploits. Even with a massive CLAUDE.md file and specific SKILL files, it simply didn't work, either because of context fatigue or because the model ran into errors and pursued a different path that made the issue worse.

Worse, Claude kept inadvertently creating fork bombs and causing massive CPU contention with EDR software like CrowdStrike and SentinelOne, particularly when touching eBPF implementations. Traditional sandboxing, Docker limitations, and VMs weren't solving the core behavioral issue: the AI Agent still kept pulling in outdated package versions, using unsafe functions, or picking the wrong tool. As a Security Engineer, I found this unacceptable.

With 10 years of experience in Security Engineering, Incident Response, and Detection & Response, I realized that if I was facing this, everyone using Claude Code was facing it too. No one was building a solution that could be easily adopted, and existing attempts required tons of setup to get a mediocre result.

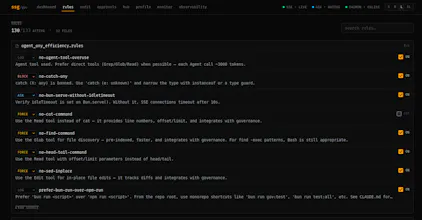

I created the tool SSG (aka SigmaShake Governance/Guardrails; still figuring out the naming). It solves the problem with rules written much like SQL queries, enforced as guardrails at Tool Use runtime. Instead of begging an LLM to behave via text prompts, SSG operates entirely in local user space, intercepting tool calls via hooks or MCP before execution and evaluating them against deterministic rules the user/developer controls. My mission was to keep the velocity and momentum of AI development while relieving the pain I had been experiencing for months.
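To make "intercept tool calls via hooks before execution" concrete, here is a minimal sketch of a deterministic pre-execution check shaped like a Claude Code `PreToolUse` command hook. It assumes the hook receives the pending tool call as JSON on stdin with `tool_name` and `tool_input` fields, and that exiting with code 2 blocks the call and shows stderr to the agent, which is how Claude Code documents its hooks. The `Rule` type, the two regex rules, and the `evaluate`/`run_hook` helpers are hypothetical illustrations, not SigmaShake's actual rule syntax or engine.

```python
import json
import re
import sys
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    """One deterministic rule, roughly:
    DENY WHERE tool_name = <tool> AND tool_input.<input_key> MATCHES <pattern>
    (hypothetical shape for illustration)
    """
    name: str
    tool: str            # tool name reported by the harness, e.g. "Bash"
    input_key: str       # key inside tool_input to inspect, e.g. "command"
    pattern: re.Pattern  # regex that makes the rule fire
    message: str         # explanation returned to the agent when blocked


# Hypothetical example rules; a real ruleset would be loaded from rule files.
RULES = [
    Rule("no-recursive-force-rm", "Bash", "command",
         re.compile(r"\brm\s+-[a-zA-Z]*(?:[rR][a-zA-Z]*f|f[a-zA-Z]*[rR])"),
         "Recursive forced deletion is blocked; remove specific paths instead."),
    Rule("no-force-push", "Bash", "command",
         re.compile(r"\bgit\s+push\b.*\s(?:--force|-f)(?=\s|$)"),
         "Force-pushing is blocked; push to a new branch instead."),
]


def evaluate(event: dict) -> Optional[str]:
    """Return the message of the first matching rule, or None to allow the call."""
    tool = event.get("tool_name", "")
    tool_input = event.get("tool_input") or {}
    for rule in RULES:
        value = tool_input.get(rule.input_key)
        if rule.tool == tool and isinstance(value, str) and rule.pattern.search(value):
            return f"[{rule.name}] {rule.message}"
    return None


def run_hook(stdin_text: str) -> int:
    """Exit code for the hook: 0 lets the tool call run, 2 blocks it."""
    reason = evaluate(json.loads(stdin_text))
    if reason is None:
        return 0
    print(reason, file=sys.stderr)  # on exit code 2, stderr is shown to the agent
    return 2
```

A real hook script would end with `sys.exit(run_hook(sys.stdin.read()))` and be registered under `PreToolUse` in the agent's settings. Regexes like these are easy to evade (`rm -r -f` and `rm --recursive --force` both slip past the first rule), which is one reason a production engine needs real command parsing rather than a handful of patterns.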

Nanosecond performance: Faster and lighter than a Sandbox, Docker, CrowdStrike or SentinelOne could ever achieve, even with eBPF.

AI Agent Harness Agnostic: Supports Claude Code, VS Code + GitHub Copilot, Cursor, Antigravity, Codex, Gemini CLI, and Pi Agent.

Massive scale: Easily supports 100,000+ rules (getting the most out of Rust and Zig).

Zero friction: No sandboxes, no docker, no virtual machines. Just download, initialize in your git repo and you are protected!

Developer control: Users control and manage their own rules, and we have a community hub to get you started.
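Supporting 100,000+ rules, as the list above mentions, depends on not scanning every rule for every tool call. One common way to get there is an inverted index: bucket rules by tool and by the program they target, then look up only the buckets for words that actually appear in the command. The sketch below shows that idea in Python; `RuleIndex`, the `program` key, and the use of `None` for tool-wide rules are assumptions for illustration, not a description of how SigmaShake's Rust/Zig engine is built.

```python
import re
import shlex
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    name: str
    tool: str               # e.g. "Bash"
    program: Optional[str]  # command word the rule targets; None = every call to `tool`
    pattern: re.Pattern


class RuleIndex:
    """Inverted index: (tool, program) -> rules, so a call only scans relevant buckets."""

    def __init__(self, rules: list[Rule]) -> None:
        self._buckets: dict = defaultdict(list)
        for rule in rules:
            self._buckets[(rule.tool, rule.program)].append(rule)

    def candidates(self, tool: str, command: str) -> list[Rule]:
        try:
            words = shlex.split(command)
        except ValueError:  # unbalanced quotes: fall back to a plain whitespace split
            words = command.split()
        found = list(self._buckets.get((tool, None), []))  # tool-wide rules first
        # Look up every distinct word, so `sudo rm ...` or `cd x && rm ...` still hit "rm".
        for word in dict.fromkeys(words):  # ordered de-duplication keeps results deterministic
            found.extend(self._buckets.get((tool, word), []))
        return found

    def first_match(self, tool: str, command: str) -> Optional[str]:
        """Name of the first rule whose pattern matches, or None to allow."""
        for rule in self.candidates(tool, command):
            if rule.pattern.search(command):
                return rule.name
        return None
```

Per-call cost then grows with the number of words in the command and the size of the matching buckets, not with the total number of rules. Words glued to shell operators (for example `rm;ls`) would still need a real shell tokenizer to be indexed correctly.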

How did my approach evolve while building for launch?

I thought Linters/Formatters/Tests/Deny permissions were enough to keep AI Agents in YOLO mode from destroying my codebase, but they weren't. The agent would eventually ignore linter errors, generate tests, circumvent the deny commands, and make something up just to say it had completed the goal.

I just wanted a simple tool to tell the AI Agent, 'Never do this' or 'Use this tool instead of that tool.' But as I built it, I realized that security teams need their developers to adopt a tool like this in their projects as guardrails, since developers are using AI Agents to code everything. That realization shifted everything. Instead of just a local CLI, I ended up building an entire ecosystem:

Declarative Rule Syntax (.sigmashake/rules): Lets teams write and enforce their own granular policies. Supports multiple nested projects within a monorepo.

The SigmaShake Hub (hub.sigmashake.com): A community platform to share rulesets for specific frameworks (like rules-nginx, rules-aws, or rules-swift).

Interactive Approval Dashboard: Developers aren't just hard-blocked; they can review the agent's intent and click "Approve" when a sensitive action is genuinely required.

Daemon: Handles requests from thousands of AI Agents and is resilient to crashes, using techniques from Elixir OTP, Rust's safety, and Zig's performance, with built-in self-healing plus Telemetry & Observability so users can monitor the health of the service.
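The Declarative Rule Syntax item mentions nested projects within a monorepo. One plausible way to decide which rules apply is to merge the rule sets of every project directory that contains the file (or working directory) in question, from the repo root down, letting the nearest project win. This sketch assumes each `.sigmashake/rules` has already been loaded into a `{rule_name: action}` mapping; that shape, the `effective_rules` helper, and the override semantics are hypothetical.

```python
from pathlib import PurePosixPath


def effective_rules(rule_dirs: dict[str, dict[str, str]], target: str) -> dict[str, str]:
    """Merge rules from every project directory that contains `target`.

    rule_dirs maps a project directory, relative to the repo root ("" for the
    root), to the rules from its .sigmashake/rules as {rule_name: action}.
    Directories are applied shallowest first, so the nearest project wins.
    """
    target_path = PurePosixPath(target)
    merged: dict[str, str] = {}
    for directory in sorted(rule_dirs, key=lambda d: len(PurePosixPath(d).parts)):
        dir_path = PurePosixPath(directory)  # "" becomes ".", which is a parent of every path
        if dir_path == target_path or dir_path in target_path.parents:
            merged.update(rule_dirs[directory])
    return merged
```

For example, if the root denies `no-rm-rf` and a nested project declares it as `ask`, files under that project get `ask`. Whether a nested project should be allowed to weaken a parent rule at all is a policy choice; a stricter variant would only let child projects tighten rules.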
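The Interactive Approval Dashboard item describes holding a sensitive action until a human reviews the agent's intent and clicks "Approve". A minimal in-process sketch of that flow: the hook submits the request with the agent's stated intent and blocks on it, and the dashboard resolves it by id. `ApprovalQueue`, `PendingRequest`, and the deny-on-timeout behavior are assumptions for illustration; a real daemon would persist requests and expose them over some API rather than share memory with the dashboard.

```python
import itertools
import threading
from dataclasses import dataclass, field


@dataclass
class PendingRequest:
    request_id: int
    tool: str
    intent: str   # what the agent says it is trying to do
    command: str
    decided: threading.Event = field(default_factory=threading.Event)
    approved: bool = False


class ApprovalQueue:
    """Holds sensitive tool calls until a human approves or rejects them."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self._pending: dict[int, PendingRequest] = {}
        self._lock = threading.Lock()

    def submit(self, tool: str, intent: str, command: str) -> PendingRequest:
        with self._lock:
            req = PendingRequest(next(self._ids), tool, intent, command)
            self._pending[req.request_id] = req
        return req

    def list_pending(self) -> list[PendingRequest]:
        """What the dashboard would render for review."""
        with self._lock:
            return list(self._pending.values())

    def decide(self, request_id: int, approve: bool) -> bool:
        """Called when the user clicks Approve/Reject; False if already expired."""
        with self._lock:
            req = self._pending.pop(request_id, None)
        if req is None:
            return False
        req.approved = approve
        req.decided.set()
        return True

    def wait(self, req: PendingRequest, timeout: float) -> bool:
        """Block the agent's tool call; no decision before the timeout means deny."""
        if req.decided.wait(timeout):
            return req.approved
        with self._lock:
            self._pending.pop(req.request_id, None)
        return False
```

Failing closed on timeout keeps an unattended agent from proceeding just because nobody answered.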
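The Daemon item borrows from Elixir OTP. The core OTP idea is a supervisor that restarts a crashed worker but stops retrying and escalates once crashes exceed a restart intensity (more than N restarts within T seconds). The sketch below shows that policy for a single worker; `supervise`, `RestartIntensityExceeded`, and the `on_crash` telemetry hook are hypothetical names, and the sketch says nothing about how SigmaShake's daemon is actually implemented.

```python
import time
from collections import deque
from typing import Callable, TypeVar

T = TypeVar("T")


class RestartIntensityExceeded(RuntimeError):
    """Raised when a worker crashes too often, mirroring OTP supervisor escalation."""


def supervise(worker: Callable[[], T], max_restarts: int = 3, period: float = 5.0,
              clock: Callable[[], float] = time.monotonic,
              on_crash: Callable[[Exception, int], None] = lambda exc, n: None) -> T:
    """Run `worker`, restarting it after crashes with an OTP-style restart intensity.

    At most `max_restarts` restarts are allowed within any `period` seconds;
    one more crash inside that window escalates to the caller.
    """
    restarts: deque = deque()
    while True:
        try:
            return worker()
        except Exception as exc:
            now = clock()
            restarts.append(now)
            # Forget restarts that fell outside the sliding window.
            while restarts and now - restarts[0] > period:
                restarts.popleft()
            if len(restarts) > max_restarts:
                raise RestartIntensityExceeded(
                    f"{len(restarts)} crashes within {period}s") from exc
            on_crash(exc, len(restarts))  # hook point for telemetry / health metrics
```

The injectable `clock` makes the sliding window easy to test, and `on_crash` is where restart counts could feed the telemetry the list describes.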

What started as a tool to save my own sanity evolved into a comprehensive governance platform. For AI agents to truly scale into enterprise environments, the guardrails need to be lightning-fast, shareable, auditable, and seamlessly integrated into the developer workflow.