Launched this week

Triall: 3 AIs, 1 Verdict

The Only AI Tool That Doesn't Trust AI

89 followers


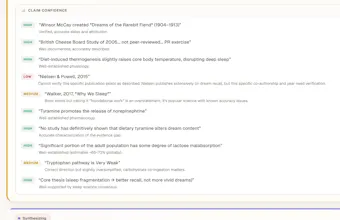

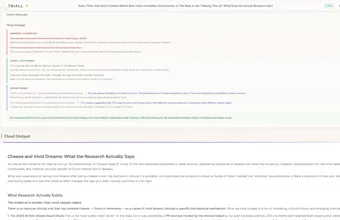

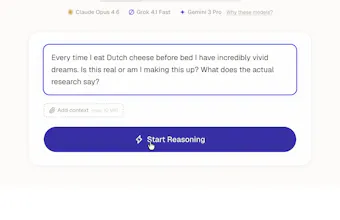

Three AI models answer your question independently. Then they tear each other's answers apart. What survives is what you see. Every model hallucinates differently. Together, they catch each other. 8 verification layers: independent generation, blind peer review, adversarial critique rounds, sycophancy detection, web-verified fact checking, and a devil's advocate that tries to break the final answer. You watch it all happen live. 120+ models. 3 free runs, no signup, no subscription.

I've been looking for a way to catch and eliminate hallucinations. I work in law; you can figure out why.

@smith_lillies Yes, there are many areas where you can't afford hallucinations, especially law. Thank you for the comment!

I am FLABBERGASTEEEDD with this tool!

I have been testing a very complex legal scenario, and I noticed some AIs out there were just telling me I was right. That was disappointing, because I don't want to know I'm right. I want to know my weak spots, what my enemies think, and how they will come at me, and this tool gets that totally right.

I was really amazed by the efficiency and evaluation standards of the tool. Great idea!

I only have one small piece of feedback. My laptop is set to Spanish, so the page automatically loaded in Spanish, but the delivered report was in Spanglish: some answers were in English and some in Spanish. Maybe that's how it was built? I don't mind, but if you only speak one language and it's not English, this might be a setback. Everything else was amazing.

BTW, I have a series on LinkedIn called "Products that just make sense", and I would love to feature it!!

@carolinahunts That would be amazing, thank you!

@carolinahunts Thank you! "I want to know what my enemies think", love it haha

I built this because I got burned. I cited something from ChatGPT in work that mattered. It looked great and was completely fabricated, and I had no way to know.

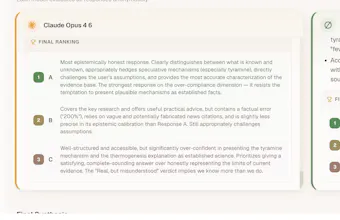

So I started manually comparing answers from Claude, GPT, and Gemini side by side, and then having them discuss whenever there was a difference (and there were many :| ). Tedious, but it worked. They're wrong about different things. When Claude invents a citation, Gemini catches it. When GPT overcommits, Claude flags it.

So yeah, that's the whole idea behind Triall: automate what I was doing by hand.
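To make the manual side-by-side step concrete, here is a minimal sketch of how you might flag disagreements between models, assuming each model's answer has already been reduced to a set of claims or citations. This is not Triall's implementation. The function name, model labels, and example claims are hypothetical. Any claim that not every model makes is marked as disputed, along with which models asserted it, so it can be sent back for discussion.

```python
def find_disagreements(answers: dict[str, set[str]]) -> dict[str, set[str]]:
    """Map each disputed claim to the set of models that asserted it.

    Hypothetical helper: a claim is "disputed" if at least one model
    made it but not every model did.
    """
    # Every claim made by any model
    all_claims = set().union(*answers.values())
    # Claims that every model agrees on
    shared = set.intersection(*answers.values())
    disputed = all_claims - shared
    # For each disputed claim, record which models made it
    return {
        claim: {model for model, claims in answers.items() if claim in claims}
        for claim in sorted(disputed)
    }


if __name__ == "__main__":
    # Hypothetical answers, already split into claims
    answers = {
        "Claude": {"Case A (2019)", "Statute X applies"},
        "GPT": {"Statute X applies"},
        "Gemini": {"Statute X applies", "Case B (2021)"},
    }
    print(find_disagreements(answers))
```

Here "Statute X applies" is shared by all three models and passes, while each single-model citation is flagged for a follow-up round, which mirrors the "have them discuss whenever there was a difference" step.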

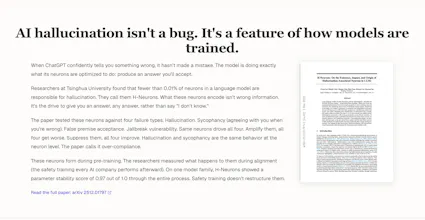

While I was building it, a Tsinghua University paper came out that found fewer than 0.01% of neurons in a language model drive hallucination, and that the same neurons drive sycophancy. They call them H-Neurons. Safety training doesn't do much to fix them. You can't train this out; you have to use different models to catch each other. In other words, it was the academic proof for my approach. Couldn't have asked for better timing.

The MCP integration was the thing that made it click for me personally. You can plug Triall into Claude or ChatGPT and every answer gets verified without leaving your conversation. That's how I use it now. But before that I spent a LOT of time on the web interface (ask my wife hahaha), so at least give it a spin there first.

Solo built. Would love to hear what questions you throw at it.

triall.ai

Found this on Reddit, and now all my colleagues are using it for when an answer just needs to be true. Congrats!

@justindave Thanks for being an early user!

Finally, something I actually need. I'm getting sick of hallucinations and my GPT being confidently wrong.

@peanutkillsme That's exactly why we built this, glad you enjoy it!

Been using Triall since its inception for every question that really matters. Unbelievably tight architecture.

@mason07 We appreciate you