TrueFoundry AI Gateway

Connect, observe & control LLMs, MCPs, Guardrails & Prompts

2.1K followers

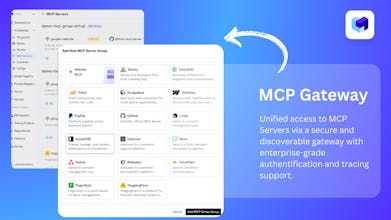

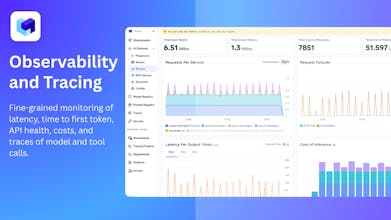

TrueFoundry’s AI Gateway is the production-ready control plane to experiment with, monitor, and govern your agents. Experiment by connecting all agent components (Models, MCP, Guardrails, Prompts & Agents) in the playground. Maintain complete visibility over responses with traces and health metrics. Govern by setting rules and limits on request volume, cost, response content (Guardrails), and more. It is used in production for thousands of agents by multiple Fortune 100 companies!

Interactive

Free Options


This is impressive. What stood out to me is how you’ve taken all the messy parts of running agents in production—MCP auth headaches, scattered logs, model swaps, guardrails—and pulled them into one place. The trace visibility alone feels like a huge win for anyone trying to move beyond demos.

I’m curious to see how this scales as more teams start chaining complex workflows. Great work and congrats on the launch!

TrueFoundry AI Gateway

@priyanshu_de1 Thank you so much - you articulated the exact problem space we kept hearing from teams building real agent systems.

Once you move beyond demos, everything becomes messy very quickly:

• MCP auth + permissioning scattered across tools

• Logs and traces split across providers

• Model swaps breaking workflows

• Guardrails implemented differently in every service

• Zero visibility into how agents reason across steps

The goal with the Gateway was to compress all of that operational complexity into one coherent layer — so teams get:

• Unified authentication + access control for every model, tool, and agent

• Consistent logs + traces across all providers, making debugging actually possible

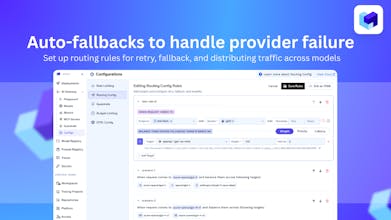

• Model routing + swaps without code changes

• Guardrails that run uniformly across all requests

• A managed backbone that agents can trust as they start chaining tools and calling each other
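To make "guardrails that run uniformly across all requests" concrete, here is a minimal sketch, assuming a gateway that owns the single entry point to every provider. `Gateway`, `redact_pii`, and the provider callables are hypothetical names for illustration, not TrueFoundry's API, and the two regexes are a toy stand-in for real PII detection. The point is structural: because every call goes through one `complete` method, the same input and output checks apply no matter which model handles the request.

```python
import re
from typing import Callable, Dict

# Hypothetical provider type: takes a prompt string, returns a completion string.
Provider = Callable[[str], str]

# Illustrative patterns only (assumption); production PII detection needs far more.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace e-mail addresses and phone-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


class Gateway:
    """Hypothetical single entry point: every request passes the same
    guardrail, regardless of which provider it is sent to."""

    def __init__(self, providers: Dict[str, Provider]) -> None:
        self.providers = providers

    def complete(self, provider_name: str, prompt: str) -> str:
        safe_prompt = redact_pii(prompt)                  # input guardrail
        reply = self.providers[provider_name](safe_prompt)
        return redact_pii(reply)                          # output guardrail


# Usage with a stand-in "echo" provider in place of a real model client.
gw = Gateway({"echo": lambda p: f"echo: {p}"})
print(gw.complete("echo", "Mail jane.doe@example.com or call +1 555 123 4567"))
# echo: Mail [EMAIL] or call [PHONE]
```

Adding a new provider only means adding an entry to the `providers` dict; the guardrail logic does not have to be reimplemented per service.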

Your question on scaling complex workflows is spot on — that’s where we’re heading next with:

• Agent-to-Agent (A2A) protocol

• Agent registry + discovery

• Semantic caching

• Runtime-level safety + observability for multi-step agent chains

Super grateful for your thoughtful feedback - it’s exactly the direction we’re building toward. Thanks again for the support! 🙏

Adjust Page Brightness - Smart Control

This is the missing glue for agent workflows. Love how everything sits under one control plane.

TrueFoundry AI Gateway

@kshitij_mishra4 100%. Thanks!

🚀 Congrats on the launch! Love how TrueFoundry AI Gateway simplifies multi-model access, centralizes keys, and adds real observability + guardrails. Feels like the missing layer for taking AI apps from prototype to production. Great work!

Trace-AI

EasyFrontend

Congratulations on the launch. Best wishes to the TrueFoundry team!

TrueFoundry AI Gateway

@getsiful Thanks for your support! Would love for the team to try out the product post-launch.

Calk AI

This is the kind of product that would benefit a lot of teams, though!

TrueFoundry AI Gateway

@quentin_fournier_martin Thanks for your support!

DiffSense

What are the top 3 use cases for this?

TrueFoundry AI Gateway

@conduit_design Great question! The top 3 use cases we see:

Multi-provider routing & failover: Easily run OpenAI, Anthropic, local models, etc. behind one API and switch based on price, latency, or quality.

Guardrails & compliance: Apply consistent policies (PII redaction, safety checks, access control) across all LLMs and agent tool calls.

Deep tracing & debugging: Visualize entire LLM/tool call flows, catch failures, add fallbacks, and tune prompts with production-grade observability.
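The first use case, multi-provider routing with failover, can be illustrated with a minimal sketch. It assumes each provider is wrapped in a plain callable and carries a known price and typical latency; `Route`, `route_with_failover`, and all figures below are hypothetical and not the Gateway's actual configuration or API. The policy shown is one simple choice: keep providers within a latency budget, try them cheapest-first, and fall back to the next one when a call raises.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Route:
    name: str
    cost_per_1k_tokens: float   # illustrative price figure, not real pricing
    p50_latency_ms: float       # illustrative typical latency
    call: Callable[[str], str]  # hypothetical client function for this provider


def route_with_failover(routes: List[Route], prompt: str, max_latency_ms: float) -> str:
    """Try providers cheapest-first among those within the latency budget;
    if a call raises, fall back to the next candidate."""
    candidates = sorted(
        (r for r in routes if r.p50_latency_ms <= max_latency_ms),
        key=lambda r: r.cost_per_1k_tokens,
    )
    errors: List[str] = []
    for route in candidates:
        try:
            return route.call(prompt)
        except Exception as exc:  # e.g. timeout, rate limit, 5xx from upstream
            errors.append(f"{route.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky(prompt: str) -> str:
    # Stand-in for a provider that is currently down.
    raise TimeoutError("upstream timeout")


routes = [
    Route("cheap-but-down", 0.1, 300, flaky),
    Route("backup", 0.5, 400, lambda p: f"backup answered: {p}"),
    Route("too-slow", 0.05, 5000, lambda p: "never chosen"),
]
print(route_with_failover(routes, "hello", max_latency_ms=1000))
# backup answered: hello
```

Here "too-slow" is filtered out by the latency budget despite being cheapest, and "backup" answers after the cheaper provider times out. Swapping providers or changing the policy touches only the route list, not the calling code, which is the idea behind "switch based on price, latency, or quality".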