TrueFoundry AI Gateway

Connect, observe & control LLMs, MCPs, Guardrails & Prompts

2.1K followers

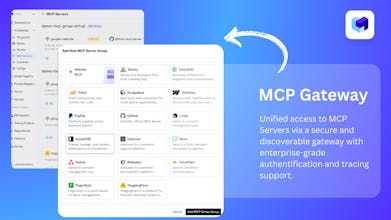

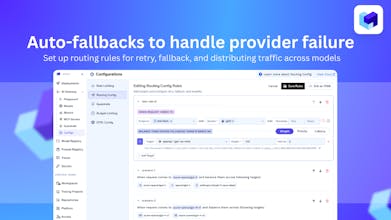

TrueFoundry’s AI Gateway is the production-ready control plane for experimenting with, monitoring, and governing your agents. Experiment by connecting all agent components (Models, MCP, Guardrails, Prompts & Agents) in the playground. Maintain complete visibility over responses with traces and health metrics. Govern by setting rules and limits on request volume, cost, response content (Guardrails), and more. It is used in production for thousands of agents at multiple Fortune 100 companies!

Interactive

Free Options

Congrats on the launch. Having a unified OpenAI-compatible endpoint across all LLM providers is a game changer for devs!

TrueFoundry AI Gateway

@dheerajmundhra Thanks for the support! Do try it out here https://www.truefoundry.com/ai-gateway and share feedback!

Triforce Todos

Congrats on the launch! This control plane approach feels like exactly what enterprises need for scaling LLMs safely.

TrueFoundry AI Gateway

@abod_rehman Thank you for your support.

TrueFoundry AI Gateway

@abod_rehman Hey Abdul, thanks a lot! Do try and give us your feedback! https://www.producthunt.com/products/truefoundry-ai-gateway

Love how this evolved from routing into true control and accountability. SecureSlate complements this by keeping production AI traffic compliant and audit-ready across SOC 2, ISO, and HIPAA, so platform teams can scale confidently without extra operational burden.

TrueFoundry AI Gateway

@nirvaya1 Love this, thank you! One of our big goals was exactly that: to move beyond simple routing into real governance, visibility, and accountability for all AI traffic.

HabitGo

Love how this goes beyond simple routing: finally a serious control layer for production-grade AI stacks. Impressive work! 🔥

TrueFoundry AI Gateway

@kui_jason Thanks for your feedback!

CodeBanana

Great product! Congrats on the launch on PH.

TrueFoundry AI Gateway

@zethleezd Thanks for your support!

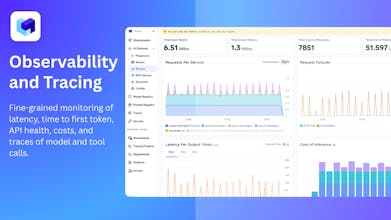

I've been testing this for a while, and the observability layer is revolutionary on its own.

Having model latency breakdowns, token usage insights, agent traces, and failure cases all in a single interface saves a huge amount of time when debugging. This is exactly the kind of tooling that pushes LLM applications toward true, mature engineering systems.

We’ve been testing the TrueFoundry AI Gateway for a few weeks, and it genuinely solved one of our biggest headaches: managing multiple LLM providers and internal models without drowning in glue code. The observability layer alone is worth it; being able to trace prompts, responses, latency, and failures in one place has saved us hours of debugging.

If you’re running production AI workloads, or multiple teams rely on LLMs, this is a game-changer. If you’re just experimenting, it might be overkill, but for scaling, governance, and reliability, it’s one of the cleanest solutions we’ve tried.