I asked AI to Build a Competitor to My Own Product. It Did. Here’s What I Learned.

Last month, I did something that felt slightly insane.

I took our product description, fed it into ChatGPT, and asked it to build a competitor. Not a parody. A real competitor. Better features, better positioning, better everything. I told it to be ruthless.

It did!

The output was polished. Confident. Structured like a real go-to-market plan. It named features we don’t have. It positioned itself against us. It looked like a threat on paper.

Then I spent two weeks stress-testing that competitor against real market data. What I found changed how I think about AI, competition, and the actual moats that matter.

The Experiment

I gave ChatGPT a simple prompt:

"You are a product strategist. Here is a description of Rankfender. Build a superior competitor. Give it a name, a feature set that beats us, a target audience, a pricing model, and a positioning statement. Be brutal."

The AI delivered.

It named the competitor "ClarityFlow" (not the actual name it generated, but the same archetype). It gave it features we don’t have yet. It positioned ClarityFlow as "the enterprise-grade alternative for teams who outgrew us." It priced it higher to signal premium value. It even wrote a sample homepage headline.

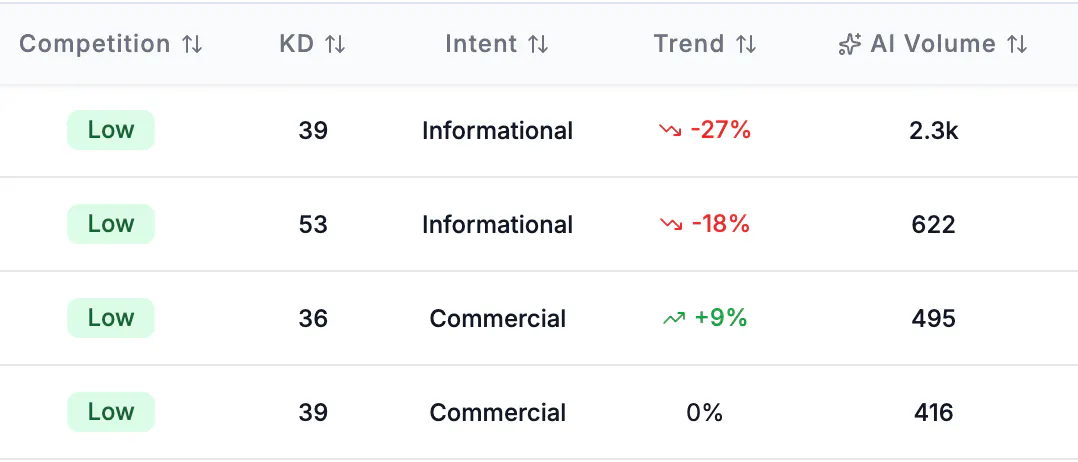

On paper, ClarityFlow was scary. I checked the metrics for every keyword related to it, especially the AI volume metric, and the data confirmed it.

Then I asked myself: would this competitor actually win? Not in a PowerPoint deck. In the real world, where AI systems decide what to recommend, where buyers ask ChatGPT for options, and where authority takes years to build.

I ran the data.

What AI Got Right

The competitor the AI designed was structurally perfect for one specific environment: the world of AI citations.

It had:

A clear category definition (the exact language AI systems look for)

Feature comparison tables against incumbents (the #1 content type AI cites)

FAQ sections optimized for the questions people actually ask

A pricing model presented in a structured format AI loves to extract

This matters because AI doesn't read. It extracts.

From Rankfender’s research on AI visibility statistics:

Pages with structured FAQ schema get cited 4.2x more than pages without.

Comparison pages (“vs.” content) appear in 92% of top-recommended SaaS brands.

Content with clear entity definitions and schema markup sees a 30% improvement in AI citation frequency (Princeton / Georgia Tech GEO Study).
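If "FAQ schema" sounds abstract: in practice it usually means embedding a schema.org `FAQPage` object as JSON-LD in the page's HTML. Here is a minimal Python sketch that builds one. The helper name `build_faq_jsonld` and the question/answer text are hypothetical placeholders, and nothing here reproduces how the citation figures above were measured.

```python
import json


def build_faq_jsonld(faqs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


# Hypothetical placeholder FAQ content; replace with your real questions.
faqs = [
    ("What is AI Share of Voice?",
     "Your brand's share of mentions in AI-generated answers for your category."),
    ("How do you track AI visibility?",
     "Placeholder answer: describe your actual process here."),
]

# Paste the printed JSON into a <script type="application/ld+json"> tag.
print(json.dumps(build_faq_jsonld(faqs), indent=2))
```

The structure is the point: each question and answer becomes an explicit, machine-readable pair instead of prose an AI system has to parse.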

The AI competitor was engineered for extraction. It would have won citations faster than we did at launch. That was humbling.

What AI Missed Completely

Here’s where ClarityFlow fell apart.

1. Authority takes time. AI assumes it’s instant.

The AI competitor assumed that if you build it, AI systems will cite it. That’s not how it works.

Rankfender’s data shows 67% of brands are not mentioned at all when AI is asked about their product category. That’s 2 out of 3 brands, including many with great products, completely invisible. ClarityFlow would launch into a landscape where two-thirds of competitors never appear in AI answers. That sounds like an opening, but it also means ClarityFlow would start at zero citations, no matter how perfect its feature set was.

2. Share of Voice is earned, not assumed.

The AI competitor didn’t account for existing competitors already owning the category in AI answers.

In our category, the top 3 mentioned brands capture 71% of AI product recommendations. That’s not a coincidence. Those brands have spent years building the content, reviews, and third-party coverage that AI systems trust. According to the same stats page, 84% of AI-mentioned brands have extensive Wikipedia or third-party coverage.

ClarityFlow had none of that. Its Share of Voice would have been 0%. Not because its features were worse, but because AI systems recommend what they already cite.

I wrote more about this in our guide on AI Share of Voice. SOV isn’t about being the best. It’s about being the most cited. Those are different things.
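Once you have the AI answers, the arithmetic behind Share of Voice is simple: a brand's mentions divided by all mentions of the brands you track. The Python sketch below assumes you've already collected answers from each AI system as (platform, text) pairs. The counting rule (one mention per brand per answer, whole-word match), the function name `share_of_voice`, and the sample answers are illustrative assumptions, not Rankfender's actual methodology.

```python
import re
from collections import Counter, defaultdict


def share_of_voice(answers, brands):
    """Per-platform Share of Voice from (platform, answer_text) pairs.

    Assumed rule: a brand counts at most once per answer when its name
    appears as a whole word (case-insensitive). SOV is that brand's count
    divided by the total count for all tracked brands on the platform.
    Assumes brand names start and end with letters or digits.
    """
    patterns = {
        b: re.compile(r"\b" + re.escape(b) + r"\b", re.IGNORECASE) for b in brands
    }
    counts = defaultdict(Counter)
    for platform, text in answers:
        counter = counts[platform]  # ensures platforms with zero mentions still appear
        for brand, pattern in patterns.items():
            if pattern.search(text):
                counter[brand] += 1

    sov = {}
    for platform, counter in counts.items():
        total = sum(counter.values())
        # Avoid dividing by zero when no tracked brand was mentioned.
        sov[platform] = {b: (counter[b] / total if total else 0.0) for b in brands}
    return sov


# Hypothetical sample answers, for illustration only.
answers = [
    ("ChatGPT", "Top picks: Rankfender and ClarityFlow."),
    ("ChatGPT", "Consider Rankfender for tracking AI visibility."),
    ("Perplexity", "ClarityFlow is a popular option."),
]
brands = ["Rankfender", "ClarityFlow"]

for platform, shares in share_of_voice(answers, brands).items():
    print(platform, {b: round(s, 2) for b, s in shares.items()})
```

Grouping by platform is deliberate: running the same category questions across several AI systems and comparing the results is one way to see the platform variance covered in point 4.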

3. Original data is the only moat AI can’t copy.

When I asked AI to build a competitor, it generated generic claims. "Best-in-class." "Enterprise-grade." "Trusted by teams."

What it couldn’t generate was proprietary data from our own customers. It didn’t know our churn rate. It didn’t know the specific workflow our users loved. It didn’t have the internal benchmark that made our product different.

From the Princeton / Georgia Tech GEO study cited in our stats: content with structured statistics and original sources sees a 40% increase in AI citation rate. AI can copy your feature list. It can’t copy your data. That’s the real moat.

4. AI ignores platform variance.

ClarityFlow was designed as a single entity. But AI systems don’t agree on anything.

What wins on ChatGPT doesn’t always win on Perplexity. What Gemini cites often differs from Claude. Rankfender tracks across 7 AI systems because we learned that platform-specific Share of Voice varies dramatically. ClarityFlow would have optimized for ChatGPT and lost everywhere else.

5. The timeline problem.

The AI competitor assumed it could launch and win immediately. But from our stats: 6–18 months is the typical lead time before new content is reflected in AI model training data. That’s not a bug. It’s how the system works.

ClarityFlow would have existed on day one. It would have been cited in AI answers maybe a year later. By then, we would have moved twice.

The Real Competitive Landscape

I ran ClarityFlow through the lens of what actually drives AI visibility in SaaS.

From our SaaS industry research:

73% of B2B buyers now start with AI search

91% of SaaS brands have zero AI visibility

Traffic from AI-referred brand mentions converts 2.8x better than generic search traffic

Brands with dedicated comparison pages earn 4.2x more AI mentions

The AI competitor looked dangerous on paper. But in the actual market, it would have launched into a category where 91% of competitors are invisible, where even great products take months to get cited, and where Share of Voice compounds over years, not days.

What I Actually Learned

1. AI is a great strategist but a poor realist.

It can design a perfect competitor on paper. It cannot simulate the competitive moat built from real citations, real authority, and real Share of Voice accumulated over time. The gap between a perfect product and a cited product is where real businesses live.

2. If your brand doesn’t own AI Share of Voice today, someone else does.

In our category, the top 3 mentioned brands capture 71% of AI recommendations. That’s not a coincidence. Those brands didn’t just build better products. They built the content, the third-party coverage, the comparison pages, and the FAQ schema that AI systems trust.

If you want to understand how that works, I wrote a deep dive on AI Share of Voice — how to measure it, how it varies by platform, and why it’s the only competitive metric that matters in AI search.

3. Original data is the only moat AI can’t copy.

AI can generate a competitor. It can write better copy. It can structure better features. It cannot generate proprietary data from your customers. It cannot replicate the internal benchmarks you’ve built. It cannot know what your users actually complain about.

The AI visibility statistics page has a stat that still surprises me: content with structured statistics and sources sees a 40% increase in AI citation rate. That’s not about being louder. It’s about being verifiable. AI trusts what it can source. Give it your data. Make it the only source.

4. Category ownership takes years. AI doesn’t account for time.

The AI competitor wanted to win overnight. Real markets don’t work that way. Share of Voice compounds. Authority builds slowly. Citations accumulate. The brands winning AI visibility today started two, three, five years ago. That’s not a flaw in AI. It’s a feature of reality.

5. The best defense is being cited everywhere.

ClarityFlow was designed to beat us on one platform. But real competitive advantage comes from being cited across all of them. Rankfender tracks 7 AI systems because we’ve learned that platform variance is the rule, not the exception. If you’re only visible on ChatGPT, you’re invisible on 6 other platforms where your competitors might be winning.

How This Changed What We Build

After this experiment, we stopped worrying about hypothetical competitors and started doubling down on what actually matters.

We doubled our investment in structured content: more comparison pages, more FAQ sections, more data-backed articles.

We started tracking Share of Voice weekly across all 7 platforms to see where competitors were gaining ground.

We published more original data from our own customer base — things AI can’t replicate.

We built Rankfender to do all of this automatically for other SaaS companies facing the same problem.

If you’re building in SaaS, the competitor you should worry about isn’t the one AI designs. It’s the one that’s already winning citations in your category while you’re invisible.

Your Turn

Ask AI to build a competitor to your product. Let it be ruthless.

Then ask yourself:

What does it assume that isn’t true?

What does it miss about your actual market?

What data do you have that it can’t copy?

How long would it take for that competitor to actually get cited?

The gap between AI’s perfect competitor and your actual competitive moat is where your real advantage lives.

Imed Radhouani

Founder & CTO – Rankfender

Helping SaaS companies own their AI visibility

Resources from This Post

AI Visibility for SaaS — industry-specific data and benchmarks

AI Share of Voice Guide — how to measure and improve your competitive position

AI Visibility Statistics 2026 — 28 data points on AI search adoption, citations, and ROI

Replies

As a solo indie dev, I've been using AI (Claude through Cursor) to build my first app from scratch. It's wild how it can analyze competitive positioning too. Great experiment.

Rankfender

@anthony_martino Appreciate that — and congrats on building your first app solo. That's a massive lift.

What's wild is that AI can help you build the app, help you position it, help you analyze competitors — but at the end of the day, someone still has to ship it, support it, and grind out the distribution. That's the part no model can shortcut.

What's your app? Would love to check it out.

MockRabit

I found your findings interesting.

Rankfender

@ishwarjha Appreciate that — thank you.

Honestly, the most surprising part was how much of the competitor's "advantage" came down to content structure, not product features. The AI didn't invent anything we couldn't build. It just knew exactly how to package it for extraction.

Have you run this experiment on anything you're working on? Would love to hear what it surfaced.

This is a really smart exercise. I tried something similar with one of my projects and the AI competitor it came up with was honestly better at positioning than what I had. Made me rethink my landing page copy completely.

The point about AI being a "great strategist but poor realist" is spot on. It can dream up perfect features and pricing but it has no idea about the 6-18 months of content grinding needed to even show up in AI search results. That moat is invisible to the model.

The 91% stat about SaaS brands having zero AI visibility is wild. Feels like the new version of "most businesses don't have a website" from 15 years ago.

Rankfender

@mihir_kanzariya That means a lot — especially coming from someone who actually ran the experiment themselves.

The positioning piece is so real. AI has perfect hindsight and zero ego. It looks at your landing page and thinks "this could be clearer, this could be punchier, this could win more." It's like having a brutally honest copywriter with no emotional attachment. I've rewritten half our homepage based on what AI suggested in that competitor exercise.

The moat being invisible to the model is the thing I keep coming back to. AI can simulate a feature set. It cannot simulate the grind of getting cited 50 times over 18 months. It cannot simulate the trust built from 200 comparison pages across the web. It cannot simulate the proprietary data you collect from real users. Those are the real moats. And they're invisible to the model exactly because they're not in its training data.

The 91% stat haunts me. It's exactly like "most businesses don't have a website" in 2005. Back then, having a website was a competitive advantage. Now it's table stakes. Same thing is happening with AI visibility. The window where most brands are invisible is closing. The ones who start now will own the category. The ones who wait will be catching up for years.

What was the project you ran this on? Curious what AI got wrong about your market that you knew instantly.

Very strong insight.

AI can generate a polished competitor in minutes, but it can’t compress the trust, distribution, and content depth needed to actually win in the market. A lot of founders will underestimate that.

Rankfender

@mikita_aliaksandrovich Thank you. That "compress" word is exactly right.

AI collapses time in analysis but can't collapse it in reality. It can generate a 12-month go-to-market plan in 12 seconds. It can't generate the 12 months of actual work — the outreach, the failed experiments, the slow accumulation of trust.

The founders who underestimate that are the ones who wake up a year later wondering why their "perfect" product hasn't been cited once. The ones who overestimate it are the ones grinding on content, comparisons, and customer data while everyone else waits for AI to magically put them in answers.

What's been the hardest part for you to build that AI can't speed up?

This is a great exercise. I'm building a tool that evaluates side project ideas with AI, and the "great strategist but poor realist" point hits hard. AI can generate a perfect competitor analysis on paper, but it completely misses the messy reality — like whether anyone actually searches for that problem, or whether the market is too small to sustain a business.

The original data moat is real. The one thing AI can't fake is what your actual users do and say.

Rankfender

@nerdkick11 Exactly this.

The "messy reality" gap is where actual businesses live. AI can simulate a perfect competitor analysis, but it can't tell you if someone's already tried and failed. It can't tell you if the problem is urgent enough for people to pay. It can't tell you if the market is five people on Reddit or 5,000 companies with budget.

The original data moat is the only thing that scales. Features get copied. Positioning gets copied. Messaging gets copied. Your customer conversations, your onboarding drop-off points, your "we almost didn't buy because X" insights — those can't be scraped. Those are yours.

For what you're building — evaluating side project ideas — the real gold might be less about what AI says is perfect and more about what early users actually do when they hit your product. That messy signal is the signal that matters.

What's your tool? Curious to see where you're going with it.

@imed_radhouani it's called IdeaDose — ideadose.dev. you feed it a startup idea and it runs market size, competition, monetization viability, and technical feasibility checks. then gives you a kill/pivot/go verdict.

the early signal thing you mentioned is exactly what i'm focused on right now. 78 visitors, 2 signups, both bounced in 8 seconds. that messy data is already telling me more than any AI analysis could about what needs fixing before launch.

launching on PH next thursday actually. would love your take on it when it's live.

Rankfender

@nerdkick11 IdeaDose — love the name. Direct, memorable, tells you exactly what it does.

That 78 visitors / 2 signups / 8 seconds data is brutal but priceless. Most founders would ignore it or panic. You're reading it like a map. Those two people who signed up? They were curious enough to click but left because something didn't match expectation. That's the signal AI can't give you.

What did they land on? Pricing page? Dashboard? Something that made them hesitate. That's your gold.

Definitely want to see it when it launches next Thursday. DM me the link when you're live and I'll swing by. Always happy to support makers who treat messy reality as data, not noise.

Good luck with the launch — and with those 8 seconds.

appreciate the breakdown — "meet expectation vs reality" gap is exactly what i'm debugging right now. will DM you launch day. thanks for the support

Out of curiosity, I created an infographic from it (I'm a visual learner).

The most useful reframe: ClarityFlow isn't a competitive threat — it's a content audit. The AI built a competitor optimized for extraction (FAQ schema, comparison tables, structured entity definitions) because those are exactly the signals AI systems reward. Running the experiment against your own product reveals not what a competitor could do, but what your citation profile is currently missing. The scary part isn't that AI can build your competitor — it's that the output tells you precisely where your own content stack fails to match what AI systems actually trust.

Rankfender

@giammbo You're absolutely right. ClarityFlow wasn't a competitor. It was a mirror.

The AI built a product optimized for extraction because that's what the training data rewards. FAQ schema. Comparison tables. Structured entity definitions. Clear category language. It didn't invent those features out of nowhere — it pulled them from the patterns that actually win citations.

So when it designed a "better" version of my product, it was really telling me: here's where your current content doesn't match what AI trusts.

That changes everything. It's not a threat to defend against. It's a content audit you can run for free.

The scary part isn't that AI can build your competitor. The scary part is realizing that the gaps in your citation profile were sitting there in plain sight, and you never noticed because no one ever showed you what AI actually looks for.

I've started running this experiment quarterly now. Not to find competitors. To find the gaps in our own content that AI would exploit if someone else filled them first.

Thank you for this. Honestly one of the most valuable comments I've had.

Ran a similar exercise. The AI competitor it generated was sharper on messaging than what I had shipped. That was humbling but useful.

The thing it missed entirely was distribution logic -- it assumed discoverability follows product quality. In practice the citation moat, the review corpus, the third-party coverage, all of that is path-dependent. You can't shortcut it with a better feature set. What did the AI get wrong about your specific market that you spotted immediately?

Rankfender

@chinedu_chidi_ikejiani That's the exact moment it shifts from scary to useful — when the messaging is sharper and you have to admit it.

The thing the AI got wrong about our market was trust signals. It assumed trust follows feature quality. In SaaS, especially B2B, trust follows third-party validation. The AI competitor had perfect features but zero authority. No G2 reviews. No SOC2 badge. No mention in any analyst report. No case studies with logos.

In the real market, a product with 80% of the features but 100% of the authority wins every time. AI can't simulate that because it's not in the training data — it's in the 6-18 months of grinding to get cited, reviewed, and mentioned.

The second thing it missed: integration moats. Our product connects to 30+ tools customers already use. The AI competitor assumed users would switch. In reality, switching costs are brutal. No feature set overcomes the friction of reconfiguring your entire stack.

What about your market? What did AI assume that you knew instantly wouldn't work?

My Financé

I think this is a really cool idea, but I get the sense you're using LLMs to generate a lot of the content here, which makes it hard to interact with. I'd encourage you to try to increase the authenticity, and I bet you could get more bang for your buck with this concept!

Rankfender

@catt_marroll You're right — and I appreciate you saying it.

I've been deep in the data and the product for months. When it came time to write this thread, I defaulted to structured, polished, "safe" language. The irony isn't lost on me: I wrote about AI generating a perfect competitor, then used AI to make my own writing too perfect! :D

The core insight of the thread is real. Running that experiment was uncomfortable and genuinely changed how I think about competition. But the delivery got away from me.

I'll take your advice. Next thread I'll write it raw — typos, rough edges, the whole thing. More me, less LLM.

Thanks for the honest nudge.

MacQuit

This is a brilliant experiment and I love the honesty in the findings. As someone who's been building products for 10 years (both Mac utilities and now an AI-powered financial podcast app), I've seen this from the other side. I actually use AI heavily in my own product pipeline.

What struck me most is your point about the gap between a polished plan and real-world execution. AI is incredibly good at synthesizing existing patterns and producing confident-sounding strategies. But it fundamentally lacks two things: taste from real user feedback loops, and the willingness to make ugly tradeoffs that only come from shipping and iterating.

When I built my Mac utility apps, the features that actually drove retention were never the ones that looked impressive on paper. They came from watching real users struggle with specific workflows. AI can generate a perfect feature matrix, but it can't sit in a support thread and feel the frustration behind a bug report.

Your moat isn't the features you have today. It's the compounding knowledge you build from every user interaction. That's something no AI-generated competitor can replicate from a prompt.

Rankfender

@lzhgus This is such a generous and grounded perspective — thank you.

The "taste from real user feedback loops" is the phrase I didn't know I needed. AI can simulate a feature matrix. It cannot simulate the gut feeling you get after the fifth support ticket about the same obscure workflow. It cannot simulate the decision to kill a feature that looks good on paper but confuses every new user. That taste comes from shipping, failing, and shipping again.

The Mac utility apps example hits home. The features that keep people coming back are never the ones that look impressive in a comparison table. They're the ones that just work when someone is in a hurry at 2am. AI can't build that. It can only see the output, not the hours of grinding to make it feel invisible.

Your AI-powered financial podcast app sounds fascinating. What's been the ugliest but most valuable tradeoff you've made building it? Always curious what survives the gap between plan and reality.

MacQuit

@imed_radhouani Great question. The ugliest tradeoff was accepting that AI-generated content can sound "perfect" but feel lifeless. Early versions were technically flawless but nobody wanted to come back. We had to deliberately make things feel more human, more opinionated, less polished. It looked worse on paper but engagement went up.

The other painful one: choosing depth over breadth. We could generate a lot more content per day, but users told us they'd rather have fewer pieces that actually help them understand what's going on. Less output, more value. Both decisions felt wrong at first but they're what drives retention now.