I Asked AI to Build a Competitor to My Own Product. It Did. Here’s What I Learned.

Last month, I did something that felt slightly insane.

I took our product description, fed it into ChatGPT, and asked it to build a competitor. Not a parody. A real competitor. Better features, better positioning, better everything. I told it to be ruthless.

It did!

The output was polished. Confident. Structured like a real go-to-market plan. It named features we don’t have. It positioned itself against us. It looked like a threat on paper.

Then I spent two weeks stress-testing that competitor against real market data. What I found changed how I think about AI, competition, and the actual moats that matter.

The Experiment

I gave ChatGPT a simple prompt:

"You are a product strategist. Here is a description of Rankfender. Build a superior competitor. Give it a name, a feature set that beats us, a target audience, a pricing model, and a positioning statement. Be brutal."

The AI delivered.

It named the competitor "ClarityFlow" (not the exact name it chose, but it captures the archetype). It gave it features we don’t have yet. It positioned ClarityFlow as "the enterprise-grade alternative for teams who outgrew us." It priced it higher to signal premium value. It even wrote a sample homepage headline.

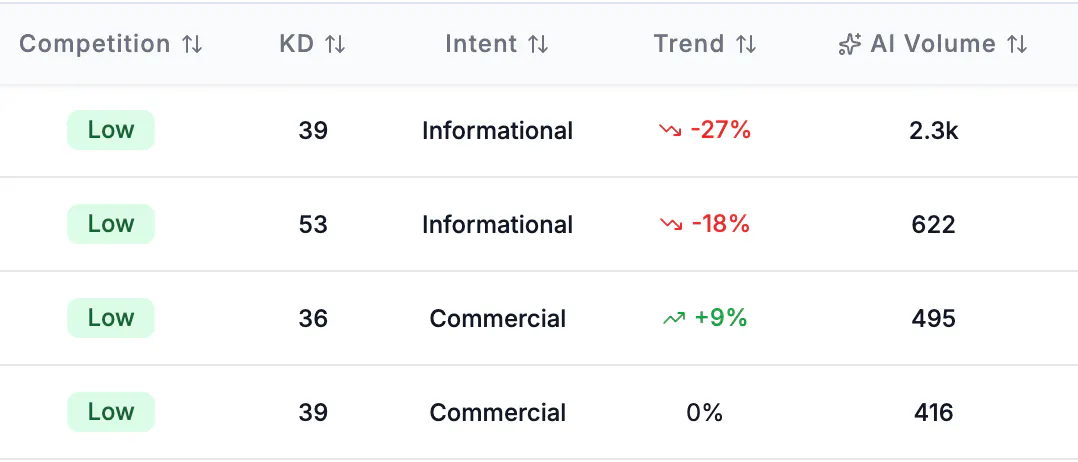

On paper, ClarityFlow was scary. I checked the metrics for every keyword related to it, especially AI search volume, and the numbers backed that up.

Then I asked myself: would this competitor actually win? Not in a PowerPoint deck. In the real world, where AI systems decide what to recommend, where buyers ask ChatGPT for options, and where authority takes years to build.

I ran the data.

What AI Got Right

The competitor the AI designed was structurally perfect for one specific environment: the world of AI citations.

It had:

A clear category definition (the exact language AI systems look for)

Feature comparison tables against incumbents (the #1 content type AI cites)

FAQ sections optimized for the questions people actually ask

A pricing model presented in a structured format AI loves to extract

This matters because AI doesn't read. It extracts.
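To make "structured format" concrete: one widely used way to mark up FAQ content is the schema.org FAQPage type, embedded in the page as JSON-LD. Below is a minimal Python sketch, assuming you already have your question-and-answer pairs as plain strings. The product name and questions are hypothetical placeholders, and this is not a description of how Rankfender or ClarityFlow generates markup.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) string pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical FAQ entries for a hypothetical product, for illustration only.
faqs = [
    ("What does ExampleApp do?", "ExampleApp tracks how often AI assistants mention your brand."),
    ("How is ExampleApp priced?", "ExampleApp offers monthly and annual plans."),
]

# Serialize to JSON and wrap it in the script tag that goes into the page's HTML.
markup = json.dumps(faq_jsonld(faqs), indent=2)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Search engines generally expect the marked-up questions and answers to also appear as visible text on the page, so the markup structures an FAQ section rather than replacing it.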

From Rankfender’s research on AI visibility statistics:

Pages with structured FAQ schema get cited 4.2x more than pages without.

92% of top-recommended SaaS brands have comparison pages (“vs.” content).

Content with clear entity definitions and schema markup sees a 30% improvement in AI citation frequency (Princeton / Georgia Tech GEO Study).

The AI competitor was engineered for extraction. It would have won citations faster than we did at launch. That was humbling.

What AI Missed Completely

Here’s where ClarityFlow fell apart.

1. Authority takes time. AI assumes it’s instant.

The AI competitor assumed that if you build it, AI systems will cite it. That’s not how it works.

Rankfender’s data shows 67% of brands are not mentioned at all when AI is asked about their product category. That’s 2 out of 3 brands, including many with great products, completely invisible. ClarityFlow would launch into a landscape where two-thirds of brands don’t exist in AI answers, and as a newcomer it would start in that invisible majority: zero citations, regardless of how perfect its feature set was.

2. Share of Voice is earned, not assumed.

The AI competitor didn’t account for existing competitors already owning the category in AI answers.

In our category, the top 3 mentioned brands capture 71% of AI product recommendations. That’s not a coincidence. Those brands have spent years building the content, reviews, and third-party coverage that AI systems trust. According to the same stats page, 84% of AI-mentioned brands have extensive Wikipedia or third-party coverage.

ClarityFlow had none of that. Its Share of Voice would have been 0%. Not because its features were worse, but because AI systems recommend what they already cite.

I wrote more about this in our guide on AI Share of Voice. SOV isn’t about being the best. It’s about being the most cited. Those are different things.
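Here is one simple way to put a number on "most cited," as a minimal Python sketch. It assumes you have already collected answer texts from an AI assistant for a set of category queries, and it defines Share of Voice as a brand's share of all brand mentions across those answers, counting each brand at most once per answer. The brand names and answers are hypothetical, the plain substring match is a simplification, and this is not Rankfender's actual methodology.

```python
from collections import Counter


def share_of_voice(answers, brands):
    """Return each brand's share of all brand mentions across AI answers.

    answers: answer texts collected from an AI assistant.
    brands: brand names to look for (case-insensitive substring match).
    A brand counts at most once per answer.
    """
    counts = Counter()
    for text in answers:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        # No brand was mentioned at all, so everyone's share is zero.
        return {brand: 0.0 for brand in brands}
    return {brand: counts[brand] / total for brand in brands}


# Hypothetical answers to category queries, for illustration only.
answers = [
    "Top picks: BrandA, BrandB and BrandC.",
    "BrandA is the usual recommendation.",
    "Consider BrandB or BrandA.",
]
brands = ["BrandA", "BrandB", "BrandC", "ClarityFlow"]

sov = share_of_voice(answers, brands)
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
# BrandA: 50%, BrandB: 33%, BrandC: 17%, ClarityFlow: 0%
```

A newcomer like ClarityFlow scores 0% here no matter how strong its features are, because the metric only counts citations that already exist.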

3. Original data is the only moat AI can’t copy.

When I asked AI to build a competitor, it generated generic claims. "Best-in-class." "Enterprise-grade." "Trusted by teams."

What it couldn’t generate was proprietary data from our own customers. It didn’t know our churn rate. It didn’t know the specific workflow our users loved. It didn’t have the internal benchmark that made our product different.

From the Princeton / Georgia Tech GEO study cited in our stats: content with structured statistics and original sources sees a 40% increase in AI citation rate. AI can copy your feature list. It can’t copy your data. That’s the real moat.

4. AI ignores platform variance.

ClarityFlow was designed as a single entity. But AI systems don’t agree on anything.

What wins on ChatGPT doesn’t always win on Perplexity. What Gemini cites often differs from what Claude cites. Rankfender tracks across 7 AI systems because we learned that platform-specific Share of Voice varies dramatically. ClarityFlow would have optimized for ChatGPT and lost everywhere else.
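Platform variance becomes visible as soon as mentions are grouped by platform instead of blended into one number. This minimal Python sketch assumes each observed brand mention has been logged as a (platform, brand) pair. The platform names come from the paragraph above, but the mention log is invented for illustration and says nothing about how any real assistant ranks brands.

```python
from collections import Counter, defaultdict


def share_of_voice_by_platform(mentions):
    """Compute each brand's share of mentions separately for every platform.

    mentions: iterable of (platform, brand) pairs, one per observed brand mention.
    Returns {platform: {brand: share}}; brands never seen on a platform are absent.
    """
    per_platform = defaultdict(Counter)
    for platform, brand in mentions:
        per_platform[platform][brand] += 1

    result = {}
    for platform, counts in per_platform.items():
        total = sum(counts.values())
        result[platform] = {brand: n / total for brand, n in counts.items()}
    return result


# Hypothetical mention log: ClarityFlow only shows up on one platform.
mentions = [
    ("ChatGPT", "BrandA"), ("ChatGPT", "BrandA"),
    ("ChatGPT", "BrandB"), ("ChatGPT", "ClarityFlow"),
    ("Perplexity", "BrandB"), ("Perplexity", "BrandB"), ("Perplexity", "BrandA"),
    ("Gemini", "BrandC"), ("Gemini", "BrandA"),
]

sov = share_of_voice_by_platform(mentions)
for platform in sorted(sov):
    # .get() treats "never mentioned on this platform" as a 0% share.
    print(f"{platform}: ClarityFlow {sov[platform].get('ClarityFlow', 0.0):.0%}")
# ChatGPT: ClarityFlow 25%, Gemini: ClarityFlow 0%, Perplexity: ClarityFlow 0%
```

Broken out this way, a respectable share on ChatGPT sits right next to zero everywhere else, which a single blended figure would hide.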

5. The timeline problem.

The AI competitor assumed it could launch and win immediately. But according to our stats, 6–18 months is the typical lead time before new content is reflected in AI model training data. That’s not a bug. It’s how the system works.

ClarityFlow would have existed on day one. It would have been cited in AI answers maybe a year later. By then, we would have moved twice.

The Real Competitive Landscape

I ran ClarityFlow through the lens of what actually drives AI visibility in SaaS.

From our SaaS industry research:

73% of B2B buyers now start with AI search

91% of SaaS brands have zero AI visibility

Traffic from AI-referred brand mentions converts 2.8x better than traffic from generic search

Brands with dedicated comparison pages earn 4.2x more AI mentions

The AI competitor looked dangerous on paper. But in the actual market, it would have launched into a category where 91% of competitors are invisible, where even great products take months to get cited, and where Share of Voice compounds over years, not days.

What I Actually Learned

1. AI is a great strategist but a poor realist.

It can design a perfect competitor on paper. It cannot simulate the competitive moat built from real citations, real authority, and real Share of Voice accumulated over time. The gap between a perfect product and a cited product is where real businesses live.

2. If your brand doesn’t own AI Share of Voice today, someone else does.

In our category, the top 3 mentioned brands capture 71% of AI recommendations. That’s not a coincidence. Those brands didn’t just build better products. They built the content, the third-party coverage, the comparison pages, and the FAQ schema that AI systems trust.

If you want to understand how that works, I wrote a deep dive on AI Share of Voice — how to measure it, how it varies by platform, and why it’s the only competitive metric that matters in AI search.

3. Original data is the only moat AI can’t copy.

AI can generate a competitor. It can write better copy. It can structure better features. It cannot generate proprietary data from your customers. It cannot replicate the internal benchmarks you’ve built. It cannot know what your users actually complain about.

The AI visibility statistics page has a stat that still surprises me: content with structured statistics and sources sees a 40% increase in AI citation rate. That’s not about being louder. It’s about being verifiable. AI trusts what it can source. Give it your data. Make it the only source.

4. Category ownership takes years. AI doesn’t account for time.

The AI competitor wanted to win overnight. Real markets don’t work that way. Share of Voice compounds. Authority builds slowly. Citations accumulate. The brands winning AI visibility today started two, three, five years ago. That’s not a flaw in AI. It’s a feature of reality.

5. The best defense is being cited everywhere.

ClarityFlow was designed to beat us on one platform. But real competitive advantage comes from being cited across all of them. Rankfender tracks 7 AI systems because we’ve learned that platform variance is the rule, not the exception. If you’re only visible on ChatGPT, you’re invisible on 6 other platforms where your competitors might be winning.

How This Changed What We Build

After this experiment, we stopped worrying about hypothetical competitors and started doubling down on what actually matters.

We doubled our investment in structured content: more comparison pages, more FAQ sections, more data-backed articles.

We started tracking Share of Voice weekly across all 7 platforms to see where competitors were gaining ground.

We published more original data from our own customer base — things AI can’t replicate.

We built Rankfender to do all of this automatically for other SaaS companies facing the same problem.

If you’re building in SaaS, the competitor you should worry about isn’t the one AI designs. It’s the one that’s already winning citations in your category while you’re invisible.

Your Turn

Ask AI to build a competitor to your product. Let it be ruthless.

Then ask yourself:

What does it assume that isn’t true?

What does it miss about your actual market?

What data do you have that it can’t copy?

How long would it take for that competitor to actually get cited?

The gap between AI’s perfect competitor and your actual competitive moat is where your real advantage lives.

Imed Radhouani

Founder & CTO – Rankfender

Helping SaaS companies own their AI visibility

Resources from This Post

AI Visibility for SaaS — industry-specific data and benchmarks

AI Share of Voice Guide — how to measure and improve your competitive position

AI Visibility Statistics 2026 — 28 data points on AI search adoption, citations, and ROI

Replies

Good exercise! AI can spin up a “better” product instantly, but it seriously underestimates how difficult it is to build real visibility and trust.

Rankfender

@npmitaart Exactly right. The spin-up time is instant. The trust time is measured in years.

AI looks at the market and sees a clean slate. "Here's a better product. Launch it. Win." It doesn't see the 200 comparison pages you need. The 50 FAQs that answer real questions. The 18 months of citations slowly accumulating. The reviews that take years to earn.

The hardest part of building isn't the product. It's the visibility that makes the product matter. AI hasn't learned that yet because it's not in the training data. You can't scrape trust.

Have you run this experiment on something you're building? Would be curious what AI got wrong about your market.

The quarterly cadence is the right move — the gaps don't stay static as more brands fill in structured content around you. Good luck with it.

Rankfender

@giammbo You're absolutely right — and that's what I didn't fully appreciate until we started tracking it weekly at Rankfender.

The gaps don't stay static. Competitors add comparison pages. New players enter the category. Platforms change what they surface. What was a gap in January is someone else's moat by June.

That's why the quarterly cadence stuck. Every three months, the landscape shifts enough that last quarter's assumptions are already stale. The AI competitor you built in January? By April, it's already missing context.

Appreciate the good luck — and the insight. Are you tracking this in your own space? Would love to hear what you've seen shift over time.

@imed_radhouani Really liked the idea; it gives you a different perspective on your brand positioning. I think this could also be mapped against your real competitors, so the experiment goes deeper and shows more about your brand vs. the market. What do you think?

Rankfender

@ayman_elafifi1 That's a great extension — and honestly, that's exactly where we ended up with Rankfender.

The AI competitor experiment is a powerful mirror, but it's still a mirror. You're looking at yourself. When you map it against real competitors in your category — their citations, their Share of Voice, their content structure — the mirror becomes a map.

Suddenly you're not just asking "what does my brand look like to AI?" You're asking "why is my competitor cited 4x more than me for the same query?" "What content do they have that I'm missing?" "Where are they winning that I didn't even know existed as a category?"

That depth is where the real strategy lives. The experiment shows you the gaps. Real competitor data shows you how to fill them.

Have you run this against actual competitors in your space? Curious what surfaced for you.