The philosophy of the project in one image:

by•

The Innovation:

Most transformers suffer from "semantic friction" in standard attention. I replaced the attention mechanism with a native E8 Root System Lattice. By leveraging the densest sphere packing in 8D, LILA-E8 achieves a state of "Geometric Resonance" that standard architectures simply cannot reach at this scale.

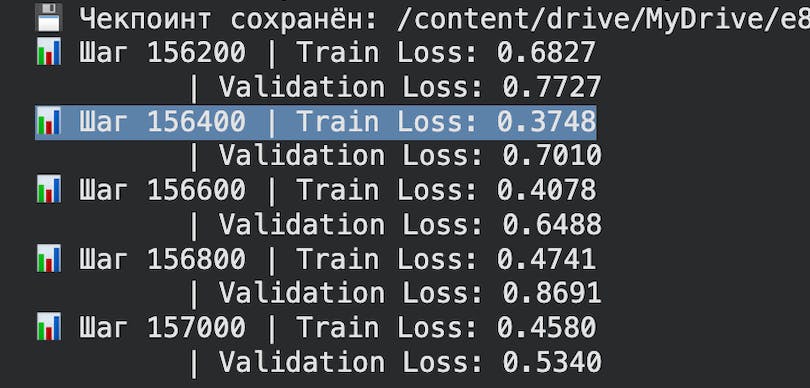

The Results (TinyStories Benchmark):

Model Size: 40M parameters.

Performance: 0.37 Train / 0.44-0.53 Val Loss (outperforming standard 60M baselines).

Context: Stable 1000+ token generation with zero semantic looping.

Hardware: Designed to run fully offline on mobile NPU/CPU

5 views

Replies