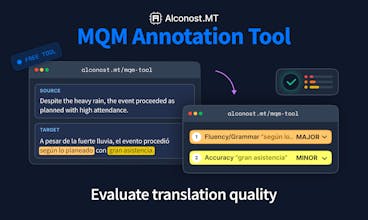

Alconost MQM Annotation Tool

Annotate translation errors, score quality, get PDF reports

54 followers

Annotate translation errors, score quality, get PDF reports

54 followers

Free MQM annotation tool for evaluating translation quality. Mark errors by category and severity, set custom weights, and export as CSV, TSV, JSON, or PDF. No installation and no signup required.

Alconost Localization

@margarita_s88 Good luck in the race!

Alconost Localization

@julia_zakharova2 Thanks very much, Julia!

Hi, I am interested in understanding translation quality for our product, but I haven’t heard of MQM before. When I check translations with other translators, I just need to know whether there are any mistakes or not, right? What’s the deal with MQM?

@lippert thank you for this question! sometimes you just need to know the mistakes. but MQM helps when you need to compare quality objectively, for example:

- Does your localization vendor meet your quality threshold?

- Which MT engine performs best for your content?

- Is a translation with 4 minor errors better or worse than one with 1 major error?

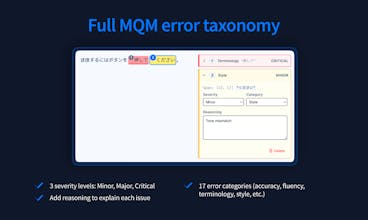

- MQM gives each error a weight based on type and severity, then calculates an overall score.

This turns subjective 'good enough' into measurable 'passed with 98.2'

@lippert Your translator marks a mistake, you ask "What's wrong with this translation?" They anwer: "It doesn't sound natural." or "It feels awkward." These are subjective answers. MQM says what exactly is wrong and in which category (Accuracy/Mistranslation; Fluency/Spelling etc.) + gives severities to these issues and overall score. Now you have an objective answer that you can measure and compare.

Kudos on your launch! What do you think, what's the real value of your tool for Localization Managers? The first thing I thought of was: If a company has a few loc. providers, a Localization Manager can use your tool for unbiased evaluation of their performance, then compare the scores and clearly see who performs best, what typical errors exist, etc. But are there any other use cases then? Cheers!

@natalia_est yessss, you are right that using MQM-based evaluation in our tool helps choose the best provider

other use cases:

you can pick the best-performing AI engine to implement AI translations

researchers can use the annotated data as datasets for machine learning (Quality Evaluation models)

Hope this helps! ;)

Glam AI

We're evaluating MT engines for our app. Would this help us compare outputs?

@dshakhbazyan yerrrr, that's one of the main use cases. run the same source content through different MT engines, annotate the outputs using the same criteria, and compare the scores. the reports show error breakdown by category so you can see which engine struggles with terminology vs. fluency, for example

Glam AI

I’ve played with it a bit and I see the uploaded samples already contain marked errors. Is this some kind of AI that does preliminary annotation job?

@anna_kuznetsova12 No AI involved here.

We uploaded samples that were already annotated by human linguists to show you what marked errors look like. We wanted to give you a quick way to explore the interface without starting from scratch.

Interesting tool. You mentioned one can do LQA with it - but LQA is different from just annotating/marking errors.

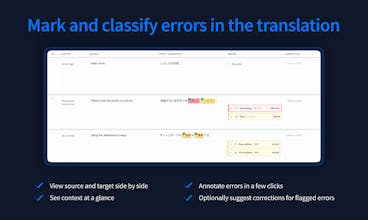

@liza_diagel that's right, the tool's primary purpose is translation evaluation. but there's also a field for correcting translations, so you can use it for full LQA workflows, not just error marking

Congrats, nicely done! Just tried it with a few segments. The error selection panel is intuitive, didn't need to read any documentation.

@margarita_tsygankova Thank you Margarita, happy that you liked our tool! ☺️