LobeHub

Agent teammates that grow with you

875 followers

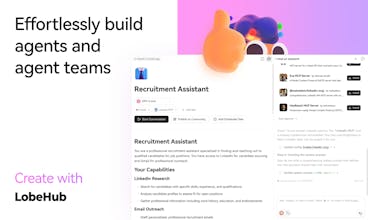

Today’s agents are one-off, task-driven tools that are isolated, slow, costly, and hard to build, and they fail to unlock the full potential of AI models. LobeHub changes that. We build long-term agent teammates that grow with you. Anyone can easily create and collaborate with agent teams to deliver complex, end-to-end work. With multi-model support, LobeHub is faster and more cost-effective, and it goes beyond single-agent systems.

Triforce Todos

How do users decide what an agent should remember vs forget?

@abod_rehman Our memory system lets users define their preferences for agents. This works not only within the memory system itself but also across daily work, the workflows you create with agents, and the actions you ask agents to take. Try out the product and see whether the current memory design works for you. We know agents aren't perfect, so feel free to report any bad cases you find and we can improve it together 🎉

Verdent

I tried the stock trading agent group and it provided clear, structured reports with risk analysis. Well done, guys!

LobeHub

@charlene_he1 Thank you! We really appreciate the support.

LobeHub

Most “agents” today are chat sessions pretending to be coworkers: stateless loops, siloed context, and brittle hand-offs. You end up doing the real orchestration yourself—copy/paste between tabs, re-explaining intent, paying tokens to reconstruct state, and losing the thread of why the work mattered in the first place.

The human part matters as much as the system part. Memory should not be a black box that quietly profiles people; it should be legible and editable. LobeHub’s approach is “white-box” memory: keep what’s useful, discard what isn’t, and let agents adapt to how someone works without taking away agency. The goal isn’t more AI output—it’s less cognitive load, fewer context switches, and work that stays coherent over time.

Flexprice

The co-evolving human–agent model is interesting. Curious how you think about trust and control as agents accumulate long-term memory.

LobeHub

@shreya_chaurasia19 I think the core is human controllability (human in the loop). As long as users are given enough control, they will increasingly trust their agent teammates.

LobeHub

@missbernstein Hi Leyla! You can use my code: arvin

Congrats on the launch! The agent memory feature looks really useful 👀

I noticed the LobeChat project shortly after it was open-sourced. I'm not a programmer, but this was indeed the first open-source application I deployed on a server.

Initially, I used it to take advantage of the free quotas offered by various platforms, since many large language model (LLM) providers offer generous free credits upon registration. I used all of these through LobeChat.

Later, I found that LobeChat's design philosophy greatly helped me in understanding "how to interact with AI" and "how to use AI" in the early stages. I even shared my deployed LobeChat with colleagues and friends, which was truly a wonderful memory.

Although I rarely use it now, I'm delighted to see them introduce a new generation of AI interaction methods!