Most validation tools measure intent. Market Physics measures adoption behavior. Submit your pitch → 12 stakeholder personas are generated → 10,000 synthetic agents simulate adoption across 12 market periods → you get an Idea Fitness Index, friction profile, and benchmark comparison against Airbnb, Uber, Stripe, and Slack. Grounded in Prospect Theory, Bass Diffusion, and network diffusion science. Free tier — 3 simulations, no credit card required.
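For readers unfamiliar with Bass Diffusion, here is a minimal sketch of the classic discrete-time model, run over 12 periods with a market of 10,000 potential adopters. It is not Market Physics' engine, which the description presents as agent-based and persona-driven. The function name `bass_adoption` and the coefficients `p` (innovation) and `q` (imitation) are illustrative assumptions, chosen only to show the S-curve shape.

```python
def bass_adoption(market_size=10_000, periods=12, p=0.03, q=0.38):
    """Return cumulative adopters per period under a discrete Bass model.

    p and q are illustrative values (assumptions), not parameters
    taken from Market Physics.
    """
    cumulative = 0.0
    curve = []
    for _ in range(periods):
        remaining = market_size - cumulative
        # Adoption hazard: innovation (p) plus imitation (q),
        # with imitation scaled by the current market penetration.
        hazard = p + q * cumulative / market_size
        cumulative += hazard * remaining
        curve.append(cumulative)
    return curve


curve = bass_adoption()
for period, adopters in enumerate(curve, start=1):
    print(f"period {period:2d}: {adopters:8.0f} cumulative adopters")
```

Raising `q` relative to `p` makes adoption depend more on existing adopters, which gives the slow-start, fast-acceleration pattern that the trust-building discussion below refers to.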

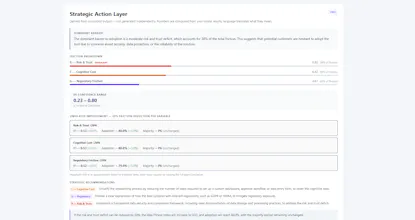

Ran UCP Checker through this. It's an infrastructure compliance tool for an emerging protocol standard, so not the typical consumer SaaS pitch. Got IFI 0.51, 78.5% adoption probability, and Uber as the closest match. The Uber parallel actually makes sense: platform-by-platform rollout dynamics, trust-building before acceleration, and regulation as a background variable rather than the main blocker.

What I found most useful was the friction profile breakdown. Risk (6.92) came out as the dominant barrier over cognitive cost (6.25) and regulatory (4.58) — which validated something I suspected but hadn't quantified: the "will this standard win?" uncertainty is a bigger adoption drag than any technical or regulatory friction. That's a genuinely useful strategic signal.

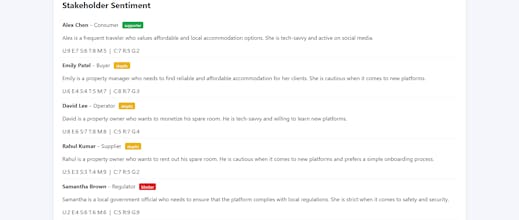

The stakeholder personas were surprisingly well-calibrated. The Early Adopter and Operator personas nailed the actual buyer profiles I see, and the Risk Officer persona correctly identified longitudinal data reliability as the key concern — not something obvious from the pitch alone.

The scenario engine is a nice touch — being able to toggle regulatory pressure and trust infrastructure independently shows how sensitive the model is to each variable. Would love to see a side-by-side comparison view of multiple scenario runs in a single report to make the deltas easier to read.

Great execution on making behavioral economics models accessible without dumbing them down. The methodology transparency (Bass, Prospect Theory, Watts-Strogatz) builds trust — you're not hiding behind a black box.

@benjifisher Ben, this is exactly the kind of feedback the engine is built for — a real pitch, from someone who knows the market, stress-testing whether the output holds up.

The Risk > Cognitive > Regulatory ordering on UCP Checker makes complete sense in hindsight. Protocol adoption lives or dies on the "will this standard win?" question before anything else. Technical and regulatory friction stay secondary until that trust threshold is crossed, which is exactly what the Uber parallel captures.

The side-by-side scenario comparison is going straight to the roadmap. You're right that the delta between scenarios is where the strategic signal is, and right now you have to read them sequentially. That's a friction point in the tool itself.

Genuinely useful stress test. Thank you for running it seriously.

@benjifisher This is a great read — especially your point on risk vs cognitive cost. That “will this standard win?” uncertainty showing up as the dominant friction is exactly the kind of signal we’re trying to surface early. It’s hard to get from surveys or intuition alone. Also glad the stakeholder calibration matched real buyer profiles — that’s been a big focus (making sure personas aren’t generic abstractions but map to actual decision-makers).

On your suggestion: we actually shipped the side-by-side scenario comparison right after this. You can now run a second scenario and see IFI deltas, friction shifts, and dual adoption curves in one view.

Really appreciate you taking the time to run a non-standard pitch through it — those are the most interesting cases.