OpenAI WebSocket Mode for Responses API

Persistent AI agents. Up to 40% faster.

121 followers

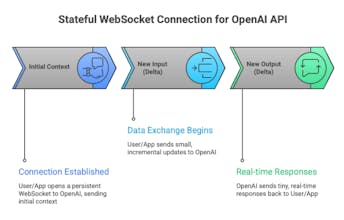

Every agent turn, you're resending the full context. Again. That overhead compounds fast. WebSocket Mode for the Responses API keeps a persistent connection, sends only incremental inputs, and cuts end-to-end latency by up to 40% on heavy tool-call workflows.

I'm happy to hunt this one. WebSocket Mode for the Responses API looks like a small infra update, but it's quietly one of the more important shifts in how production agents get built.

Most agentic workflows today are built on a protocol designed for single-turn interactions. Every tool call resends the full conversation history. The model reprocesses what it already knows. Your infrastructure pays that toll on repeat, invisibly, at scale.
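
To make the compounding concrete, here is a rough back-of-the-envelope sketch. It assumes each turn adds exactly one new item to the conversation and simply counts how many items cross the wire in total. The function names and the one-item-per-turn model are illustrative assumptions, not measurements of the real API.

```python
def items_sent_full_resend(turns: int) -> int:
    """Total items sent when every turn resends the whole history."""
    # Turn k carries all k items accumulated so far: 1 + 2 + ... + turns.
    return sum(range(1, turns + 1))


def items_sent_incremental(turns: int) -> int:
    """Total items sent when each turn carries only its new item."""
    return turns


if __name__ == "__main__":
    for turns in (5, 20, 100):
        full = items_sent_full_resend(turns)
        inc = items_sent_incremental(turns)
        print(f"{turns} turns: full resend={full}, incremental={inc}")
```

Under this simplified model, the full-resend total grows quadratically with the number of turns while the incremental total grows linearly, which is why the gap widens on long tool-call loops.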

This changes the contract.

⚡ What's different with WebSocket Mode:

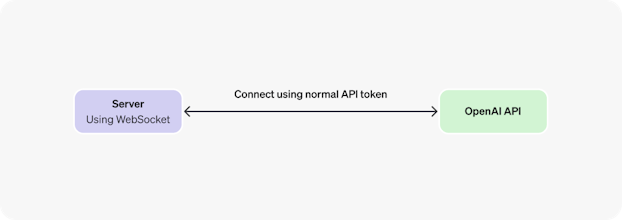

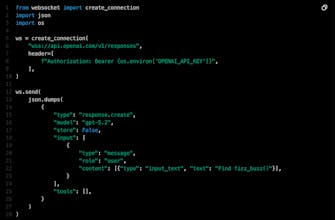

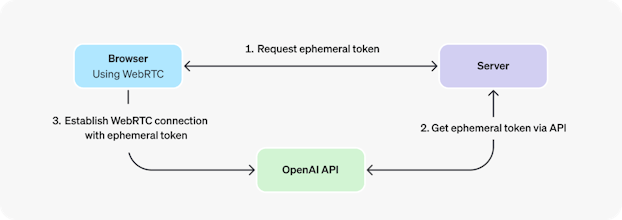

One persistent connection to /v1/responses -- no new HTTP handshake per turn

Only incremental inputs travel over the wire, not the full context

Session state lives in memory -- the model picks up exactly where it left off

Cline tested this in production: ~39% faster on complex multi-file tasks, up to 50% in best cases

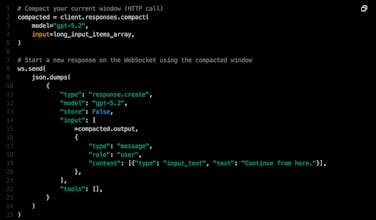

Pair with server-side compaction and you can run agents for hours without hitting context limits
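
The points above can be pictured with a small client-side sketch. Nothing here calls the real API: `IncrementalSession`, the `send` callable, and the message fields are all hypothetical stand-ins, and the actual wire format should be taken from OpenAI's documentation. The sketch only shows the pattern of tracking what has already gone out on a connection and transmitting the remainder.

```python
import json
from typing import Callable, Dict, List

Item = Dict[str, str]


class IncrementalSession:
    """Tracks conversation items and sends only the unsent ones.

    Hypothetical helper: `send` stands in for whatever writes a text
    frame to an already-open WebSocket connection.
    """

    def __init__(self, send: Callable[[str], None]) -> None:
        self._send = send
        self._items: List[Item] = []
        self._sent_count = 0  # how many items have already been sent

    def add(self, role: str, content: str) -> None:
        self._items.append({"role": role, "content": content})

    def flush(self) -> int:
        """Send items added since the last flush; return how many were sent."""
        new_items = self._items[self._sent_count:]
        if new_items:
            # Field names are illustrative, not the real event schema.
            self._send(json.dumps({"input": new_items}))
            self._sent_count = len(self._items)
        return len(new_items)


if __name__ == "__main__":
    frames: List[str] = []  # a plain list stands in for the socket
    session = IncrementalSession(frames.append)
    session.add("user", "List the files in src/")
    session.flush()
    session.add("tool", "main.py, utils.py")
    session.flush()
    print([len(json.loads(frame)["input"]) for frame in frames])
```

Passing the transport in as a callable keeps the sketch runnable without a network connection; in a real client it would be replaced by the send method of whichever WebSocket library is in use.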

🎯 Who this is actually for:

Teams running agentic coding tools with repeated tool calls

Computer-use and browser automation loops

Orchestration systems where agent latency affects user-perceived quality

⚠️ One honest caveat: the WebSocket handshake adds slight time-to-first-token (TTFT) overhead on short, simple tasks. The gains compound on heavy workloads, not light ones. Know your use case before you swap.
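
One way to reason about that trade-off is a simple break-even model. The numbers you feed it are placeholders, not benchmarks: it assumes a fixed one-time connection cost and a constant per-turn saving, which real workloads will not follow exactly.

```python
import math


def break_even_turns(handshake_ms: float, saving_per_turn_ms: float) -> int:
    """Smallest number of turns at which the persistent connection wins.

    Assumes a one-time handshake cost and a constant per-turn saving,
    both hypothetical inputs you would measure for your own workload.
    """
    if saving_per_turn_ms <= 0:
        raise ValueError("no per-turn saving, so there is no break-even point")
    # Strictly ahead once turns * saving exceeds the handshake cost.
    return math.floor(handshake_ms / saving_per_turn_ms) + 1
```

With a hypothetical 150 ms handshake and 40 ms saved per turn, `break_even_turns(150, 40)` returns 4, so a one-shot request would come out behind while a long tool-call loop would come out well ahead.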

For teams already running production agents, is latency or context limits the bigger blocker right now? Curious what this unlocks for people here. 👇

@rohanrecommends What specific kinds of agent applications benefit most from WebSocket Mode (coding agents, orchestration loops, voice assistants)?

Persistent connection with incremental inputs is a big deal for long-running agents. The 39% latency reduction on multi-file tasks is impressive. Does session state survive a server-side deployment or scaling event, or does the client need to handle reconnection and state replay?

This is actually pretty cool. Keeping the connection open and only sending new inputs feels much cleaner than constantly re-sending full context every turn.

This solves a real pain — constantly resending context was both slow and expensive. Persistent sessions should make long-running agents feel much more practical in production.

OpenAI will always be the king!