Launching today

OpenBox

See, verify, and govern every agent action.

190 followers

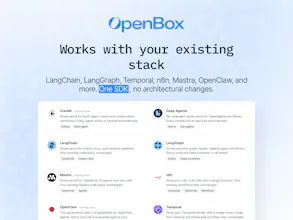

OpenBox provides a trust platform for agentic AI, delivering runtime governance, cryptographic verification, and enterprise-grade compliance. Integrates via a single SDK with LangChain, LangGraph, Temporal, n8n, Mastra, and more. Available to every organization with no usage limits.

Hey Product Hunt, I'm Tahir, co-founder and CTO of OpenBox AI. Today we're thrilled to introduce OpenBox, the trust platform for agentic AI that makes enterprise-grade governance available to everyone.

AI agents are now operating across workflows, systems, and organizations at scale. Every team building with agents faces the same questions:

How do you know what your agents are doing?

How do you prove they acted within policy?

How do you meet compliance requirements without rebuilding your entire stack?

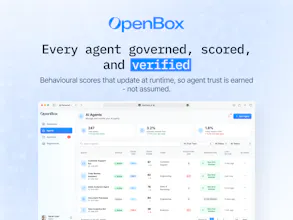

OpenBox answers those questions. It delivers runtime governance, cryptographic verification, and enterprise-grade compliance at the point of execution, enforcing identity, authorization, policy, and risk across every agent action and cross-system interaction.

OpenBox integrates via a single SDK with no architectural changes to your existing stack. It works natively with LangChain, LangGraph, Temporal, n8n, Mastra, and more.

You get:

Production-grade SDK

Cryptographic audit trails

OPA-based policy engine

Built-in runtime guardrails

Dynamic risk scoring

Human-in-the-loop controls

Full observability from day one

We built OpenBox on the belief that trust should be a right, not a privilege. Every organization deploying AI agents deserves the same governance and accountability infrastructure, whether they are a startup or a regulated enterprise.

That is why the core platform is available in production, with no usage limits and no credit card required.

Would love to hear from everyone building with AI agents today:

What are you building?

How are you handling governance?

What is missing in your stack?

Happy to answer everything here 👇

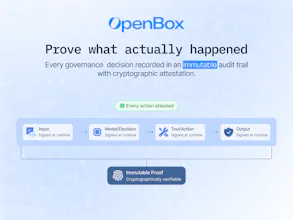

Cryptographic verification of agent actions is the interesting piece here. What exactly is being signed — the prompt, the tool call, the output, all of the above? And when you say 'verify,' is that post-hoc audit trail or can you actually halt an action mid-execution if it fails a policy check?

Great question, @sounak_bhattacharya.

OpenBox signs the execution envelope around an agent action, not just a single element. That can include the prompt context, tool call, inputs, outputs, and the policy decision tied to that step.

Verification isn’t just post-hoc. Policies are evaluated before and during execution, so actions can be halted mid-flow if a check fails, while still leaving a cryptographically verifiable audit trail of what was attempted and why it was blocked.
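To make the "signed execution envelope" idea concrete, here is a minimal sketch in Python. It is not OpenBox's implementation or SDK: the function names, the envelope fields, the toy deny rule, and the use of a shared-secret HMAC chained to the previous entry are all assumptions chosen for illustration (a production system would more likely use asymmetric signatures and managed keys). It shows a policy check running before the tool executes, a denied action still being recorded, and tampering being detectable afterwards.

```python
import hashlib
import hmac
import json

SECRET_KEY = b"demo-key"  # hypothetical key; real systems would use managed keys


def sign_envelope(envelope, prev_signature, key=SECRET_KEY):
    """Sign the canonical JSON of an envelope, chained to the previous signature."""
    payload = json.dumps(envelope, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, prev_signature.encode() + payload, hashlib.sha256).hexdigest()


def policy_allows(tool_call):
    """Hypothetical policy: deny one destructive tool, allow everything else."""
    return tool_call["tool"] != "delete_records"


def run_step(prompt_context, tool_call, execute, audit_log):
    """Check policy before executing, then append a signed envelope to the log."""
    allowed = policy_allows(tool_call)
    output = execute(tool_call) if allowed else None  # blocked calls never run
    envelope = {
        "prompt_context": prompt_context,
        "tool_call": tool_call,
        "output": output,
        "policy_decision": "allow" if allowed else "deny",
    }
    prev = audit_log[-1]["signature"] if audit_log else ""
    audit_log.append({"envelope": envelope, "signature": sign_envelope(envelope, prev)})
    return output


def verify_chain(audit_log, key=SECRET_KEY):
    """Recompute every signature in order; any edited entry breaks the chain."""
    prev = ""
    for entry in audit_log:
        expected = sign_envelope(entry["envelope"], prev, key)
        if not hmac.compare_digest(expected, entry["signature"]):
            return False
        prev = entry["signature"]
    return True
```

Because each signature covers the previous one, editing or dropping an earlier entry invalidates the chain from that entry onward, which is what makes the record of blocked attempts checkable after the fact.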

Triforce Todos

Very good to see you guys live. How are you handling policy enforcement across different agent frameworks without adding latency?

Congrats on the launch BTW 🎉

Thanks, @abod_rehman, really appreciate it.

OpenBox enforces policies at runtime across every agent action, with a lightweight SDK that sits alongside agent frameworks rather than inside the execution path.

This allows identity, authorization, and risk checks to happen in real time without blocking the agent, while keeping integrations consistent across different frameworks.

Do you think OpenBox or similar tools will become a standard layer in every agent stack in the future, like auth or logging today?

Great question, @lak7.

I do think this becomes a standard layer over time.

As agents get more autonomy, teams will need visibility, policy enforcement, and verifiable execution by default, similar to how auth and logging became essential. That’s exactly the layer OpenBox is aiming to provide.

@grover___dev Agreed!

Nice work on this. How does it integrate with existing agent frameworks like LangChain or similar tools?

Thanks, @riya_singh91.

OpenBox is built to plug into existing agent frameworks like LangChain, LangGraph, Temporal, n8n, Mastra, and similar stacks through a single SDK, so teams can add governance, verification, and runtime visibility without rebuilding their workflows.

Here's some more detailed info: https://docs.openbox.ai/getting-started/

Love the direction here. Are you targeting enterprise use cases first or keeping it flexible for smaller teams as well?

Hey @pulkit_maindiratta,

We've launched an enterprise-grade product that is accessible to teams of all sizes so you can meet your compliance and governance needs.

At the same time, we're also working with larger enterprises that need more custom setups and deeper integrations.

Simple Utm

This is a problem that does not get enough attention yet. Everyone is focused on making agents more capable, but the question of "how do you prove they acted within policy" is going to matter a lot more as agents start touching real workflows at scale.

The cryptographic verification angle is interesting. Most governance approaches I have seen are audit logs after the fact. Proving compliance at the point of execution is a different thing entirely.

Question: how does OpenBox handle governance for agents that are pulling context from multiple systems with different access policies? For example, an agent that reads from both a public knowledge base and a restricted HR system in the same workflow. Does the governance layer enforce per-source permissions, or is it more at the action level?

Really thoughtful point @najmuzzaman, and you’re right, this is exactly where governance starts to matter as agents move into real workflows.

It’s handled at both the source and action level. Each context source is evaluated with its own identity and access policy, so an agent can read from public data while restricted systems like HR remain permission-gated.

When the agent composes a workflow, OpenBox then checks whether that specific action is allowed given the combined context, and can block or redact steps if sensitive data would flow into an unauthorized tool or output.
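The two levels described above can be sketched roughly as follows. This is an illustrative Python model only, not OpenBox's API: the source names, roles, sensitivity labels, tool names, and the `AgentContext`, `read_source`, and `check_action` helpers are all hypothetical. Each read is gated by the source's own access policy, and each action is then checked against the labels of everything the agent has read so far. Only blocking is shown; redaction is left out.

```python
from dataclasses import dataclass, field

# Hypothetical per-source access policies and sensitivity labels.
SOURCE_POLICY = {
    "public_kb": {"required_role": None, "label": "public"},
    "hr_system": {"required_role": "hr_reader", "label": "restricted"},
}

# Hypothetical clearance: which data labels each tool may receive.
TOOL_CLEARANCE = {
    "post_to_slack": {"public"},
    "hr_case_notes": {"public", "restricted"},
}


@dataclass
class AgentContext:
    roles: set
    labels: set = field(default_factory=set)  # labels of everything read so far


def read_source(ctx, source, fetch):
    """Source-level check: enforce the source's own access policy on each read."""
    policy = SOURCE_POLICY[source]
    required = policy["required_role"]
    if required is not None and required not in ctx.roles:
        raise PermissionError(f"agent lacks role {required!r} for {source}")
    ctx.labels.add(policy["label"])  # remember what kind of data is now in context
    return fetch()


def check_action(ctx, tool):
    """Action-level check: the tool must be cleared for every label in context."""
    return ctx.labels <= TOOL_CLEARANCE[tool]
```

In this model, an agent with the `hr_reader` role can read both sources, but once restricted HR data is in its context, `check_action(ctx, "post_to_slack")` returns `False` while `check_action(ctx, "hr_case_notes")` still returns `True`.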