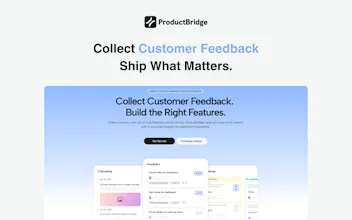

Your feedback is everywhere — Slack threads, Intercom support tickets, review sites, DMs. ProductBridge's AI agent collects it all automatically, organizes it, deduplicates, and helps your team ship what users actually want. Users request features, upvote, and watch ideas move through your public roadmap. Teams prioritize with data, publish changelogs, and auto-notify users when their feature ships. One platform. Complete feedback loop. Flat pricing. No seat fees. No surprises. Ever.

We collect client feedback across several channels at once — and deduplication is what interests me most. The same request often arrives three times, worded differently, and it's hard to tell if it's one problem or three. How does ProductBridge decide two pieces of feedback actually belong together?

ProductBridge

Great question @klara_minarikova — this is core to how ProductBridge works.

We use retrieval-augmented generation (RAG) with an LLM to match feedback by intent, not just wording. But the real differentiator is context: our AI already knows your full board, including your knowledge base, existing feedback posts, what's on your roadmap, and what you've already shipped in the changelog.

So if someone requests something you launched 2 months ago, it knows. If 3 people describe the same problem differently, it groups them.

Timelaps

Hey Hareesh, congrats on the launch! Interesting tool solving a real problem.

Question: Feedback online is highly skewed and biased (as it takes particular types of personas to post, with no proper way to 'control' via experimental design). Is a product roadmap built on online feedback the best path forward for builders?

ProductBridge

@harryzhangs Thanks for the support! And honestly, it's a fair challenge.

ProductBridge helps in two ways: user tagging with MRR and revenue data means you weigh who's asking, not just how many. And pulling from multiple channels — tickets, Slack, emails — broadens the signal beyond just the people who bother to post.

This is a very interesting idea. In our business, we receive a lot of feedback from multiple channels that never really gets processed as data, so this could be relevant for us. I have a couple of doubts, though:

The actionable insights sound great, but how does the app process contradictory feedback from clients to decide which side to lean toward? Is there a process for prioritizing certain types of feedback over others? It would be super interesting to get a bit more info about this.

Anyway, congratulations on the launch!

ProductBridge

@carlos_alfredo_davila_aguilar Thank you! Really glad it resonates.

On contradictory feedback: ProductBridge doesn't pick a side automatically. Instead it shows you the full picture — how many people said what, and who they are. That context is what helps you make the call.

On prioritization: it's not just vote counts. You can tag users with properties like MRR or plan type. So if 10 free users want one thing and 3 paying customers want the opposite, you can see that clearly and decide what actually matters for your business.

The goal is to give you better information. 🙌
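To make the "10 free users vs. 3 paying customers" case concrete, here is a minimal sketch of that kind of side-by-side summary. It is not ProductBridge's implementation: the `Vote` record, the `plan` and `mrr` fields, the request wording, and the revenue figures are all assumptions made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Vote:
    user: str
    plan: str       # hypothetical user property, e.g. "free" or "paid"
    mrr: float      # hypothetical monthly recurring revenue for this user
    position: str   # which side of the contradictory request they back


def summarize(votes):
    """Return vote count, paying-user count, and total MRR per position."""
    summary = defaultdict(lambda: {"votes": 0, "paying": 0, "mrr": 0.0})
    for v in votes:
        row = summary[v.position]
        row["votes"] += 1
        row["paying"] += int(v.plan != "free")
        row["mrr"] += v.mrr
    return dict(summary)


# Illustrative data only: 10 free users on one side, 3 paying users on the other.
votes = (
    [Vote(f"free{i}", "free", 0.0, "keep dark mode default") for i in range(10)]
    + [Vote(f"paid{i}", "paid", 200.0, "make light mode default") for i in range(3)]
)

for position, row in summarize(votes).items():
    print(position, row)
```

The sketch deliberately stops at showing the numbers next to each other. It does not rank the two positions, which matches the reply above: the tool surfaces who is asking, and the team makes the call.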

@hareesh_vemasani Thanks for your reply Hareesh! This actually clarifies my doubt.

ProductBridge

@artsci00 Thank you! Great questions.

Teams can directly chat with users on the feedback post itself. The user gets notified via email, so the conversation actually happens. You can dig deeper, ask follow-up questions, or invite them for a user interview — all in context of the feedback they submitted.

And once a feature is built, you can reach back out on the same thread, let them know it's ready, and invite them to try it out. The whole conversation lives in one place from request to resolution.

Timing is everything with this. I built something myself, and the biggest mistake was collecting feedback too late. Question: does it consolidate across app store reviews too, or mainly social/community platforms?

ProductBridge

@renkethye 100% — feedback collected too late is almost as bad as no feedback at all.

App store reviews — great timing on the question! We support Slack, Intercom, support tickets and more right now. App store integration is coming in the next couple of weeks. It's high on our list and almost there.

Stay tuned — and thanks for the kind words! 🚀

How does the deduplication work when users describe the same issue in completely different words? Congrats on the launch!

ProductBridge

@borrellr_ Thanks! 🙌

Our dedup works at the intent level, not keywords. We use advanced RAG + LLM, so "the app is slow" and "keeps timing out" get grouped correctly even though they share zero common words.

The AI also has full context — your existing feedback, roadmap, and changelog — so it's matching against everything, not just other incoming posts. And nothing merges without your review. 🚀
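For readers wondering what intent-level matching with a review step might look like, here is a rough sketch. It is an assumption-heavy illustration, not the product's actual pipeline: the tiny `vec` lists are hand-written stand-ins for real embeddings, and the board items, IDs, `suggest_match` function, and `0.8` threshold are all hypothetical.

```python
import math

# Toy 3-dimensional "intent" vectors standing in for real embeddings.
# Made-up axes: performance, billing, dark mode.
BOARD = [
    {"id": "FB-1", "status": "open", "text": "The app is slow", "vec": [0.9, 0.1, 0.0]},
    {"id": "CL-7", "status": "shipped", "text": "Dark mode released", "vec": [0.0, 0.0, 1.0]},
    {"id": "RM-3", "status": "planned", "text": "Annual billing", "vec": [0.0, 1.0, 0.1]},
]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def suggest_match(incoming_vec, board, threshold=0.8):
    """Return the closest board item if it clears the threshold, else None.

    The result is only a suggestion: needs_review is always True, so nothing
    is merged without a person confirming it.
    """
    best = max(board, key=lambda item: cosine(incoming_vec, item["vec"]))
    score = cosine(incoming_vec, best["vec"])
    if score < threshold:
        return None
    return {
        "match": best["id"],
        "status": best["status"],
        "score": round(score, 3),
        "needs_review": True,
    }


# "Keeps timing out" shares no words with "The app is slow",
# but its toy vector points in nearly the same direction.
incoming = {"text": "Keeps timing out", "vec": [0.8, 0.0, 0.1]}
print(suggest_match(incoming["vec"], BOARD))
```

Because the board includes shipped and planned items alongside open feedback, the same lookup can flag a request for something that already launched, and a suggestion below the threshold simply becomes a new post.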

The cross-platform aggregation problem is harder than it looks on the taxonomy side. When you're pulling feedback from, say, Reddit vs. G2 vs. in-app surveys, the same underlying complaint gets expressed in completely different registers. If you're using any kind of category classifier to group feedback, watch for the taxonomy itself creating false signal — I ran into a case where a hand-maintained category list was so specific to one domain's vocabulary that topically identical ideas from a different source never clustered together. The fix isn't better keywords, it's letting the classifier infer categories from the actual content distribution rather than pre-labeling buckets.
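A small sketch of that failure mode and the alternative, under loud assumptions: the keyword list, the sample feedback, and the two-dimensional vectors are invented for illustration, and greedy leader clustering is just one simple way to let groups emerge from the content rather than from predefined buckets.

```python
import math

# Hand-maintained taxonomy tuned to one source's vocabulary (hypothetical).
TAXONOMY = {"performance": ["slow", "lag", "latency"]}

# (source, text, toy embedding); the vectors are made-up stand-ins.
FEEDBACK = [
    ("in-app survey", "Dashboard is slow to load", [0.9, 0.1]),
    ("Reddit", "Every report just spins forever", [0.85, 0.2]),
    ("G2", "Invoices are confusing", [0.1, 0.95]),
]


def keyword_category(text):
    """Assign a category only if a taxonomy keyword appears in the text."""
    words = text.lower().split()
    for category, keywords in TAXONOMY.items():
        if any(k in words for k in keywords):
            return category
    return None


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def infer_clusters(items, threshold=0.9):
    """Greedy leader clustering: join the first cluster whose seed is close enough."""
    clusters = []  # each cluster is a list of items; its first item is the seed
    for item in items:
        for cluster in clusters:
            if cosine(item[2], cluster[0][2]) >= threshold:
                cluster.append(item)
                break
        else:
            clusters.append([item])
    return clusters


for source, text, _ in FEEDBACK:
    print(f"{source}: keyword category = {keyword_category(text)}")

for i, cluster in enumerate(infer_clusters(FEEDBACK)):
    print(f"cluster {i}: {[source for source, _, _ in cluster]}")
```

In this toy data the keyword taxonomy labels only the in-app survey complaint, while the Reddit post about reports that spin forever gets no category at all. The clustering pass puts those two together without any predefined label, which is the behavior described above.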