Launched this week

QuickCompare by Trismik

Compare LLMs on your data, measure, and pick the best.

342 followers

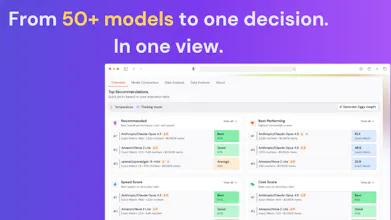

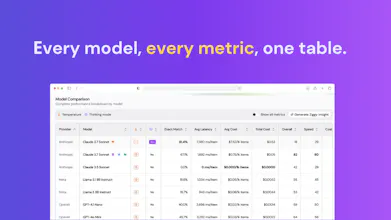

Stop guessing which LLM to use. Upload your data, compare 50+ models, and see quality, cost, and speed side by side. Pick the best model for your use case without manual testing or generic benchmarks.

Klariqo AI Voice Assistants

Great launch! Btw, can I compare models for specific tasks like marketing, coding, or support?

QuickCompare by Trismik

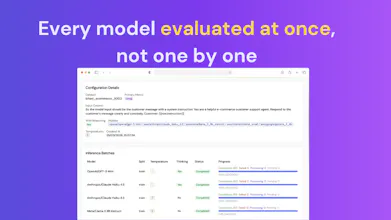

@ansh_deb Hi! Absolutely. You bring a dataset that represents the task you care about (or select one from Hugging Face), Ziggy helps you pick a sensible metric or set up an LLM-as-Judge, and QuickCompare runs the models you've picked against your data and reports back the performance. LLM-as-Judge in particular makes this easy for the more open-ended tasks like marketing or support, where there isn't always a clean right answer to compare against.
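As a rough illustration of the loop described above (a dataset, a metric, and several models scored against it), here is a minimal Python sketch. It is not QuickCompare's API: `evaluate`, `exact_match`, the stand-in models, and the four-row dataset are all hypothetical, and real models would be API calls rather than local functions.

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[str, str]  # (input text, expected label)


def exact_match(prediction: str, reference: str) -> float:
    """Score 1.0 when prediction and reference match, ignoring case and outer whitespace."""
    return 1.0 if prediction.strip().lower() == reference.strip().lower() else 0.0


def evaluate(
    models: Dict[str, Callable[[str], str]],
    dataset: List[Example],
    metric: Callable[[str, str], float] = exact_match,
) -> Dict[str, float]:
    """Run every model over the dataset and return its mean metric score."""
    scores = {}
    for name, model in models.items():
        total = sum(metric(model(text), expected) for text, expected in dataset)
        scores[name] = total / len(dataset)
    return scores


# Hypothetical stand-ins for real LLM calls.
def model_a(text: str) -> str:
    return "positive" if "love" in text else "negative"


def model_b(text: str) -> str:
    return "positive"  # always predicts the same label


# Illustrative data only; in practice this would be your own task data.
dataset = [
    ("I love this product", "positive"),
    ("This is terrible", "negative"),
    ("I love the new update", "positive"),
    ("Not worth the money", "negative"),
]

scores = evaluate({"model_a": model_a, "model_b": model_b}, dataset)
print(scores)
```

For open-ended tasks, the `metric` argument is where an LLM-as-Judge scorer would go in place of `exact_match`.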

QuickCompare by Trismik

Hi Product Hunt, I’m excited to share that our Cambridge spinout, Trismik, is launching QuickCompare today.

We built QuickCompare to help AI teams compare LLMs on their own tasks and data, so they can make better decisions before deployment, fine-tuning, or migration.

As both a Cambridge academic and a co-founder, I’ve seen how difficult model selection can be in practice. There’s no shortage of models, but there is still a real need for fast, practical evaluation on real-world use cases.

QuickCompare is for teams asking:

Which model performs best for our workflow?

Which model is most reliable?

Which model is worth deeper investment?

Thank you for taking a look. We’d really love your feedback!

Nigel, co-founder of Trismik

One Click Crypto: AI + DeFi

How is this better than LMArena?

QuickCompare by Trismik

@romain_blumberger Thanks for the great question!

We see them as serving different needs.

LMArena is excellent for broad, public model comparison. QuickCompare is built for teams who need to evaluate models on their own tasks and data.

The big difference is that QuickCompare lets you compare models on your actual workflow, with trade-offs like quality, latency, and inference cost visible side by side. So the question becomes less “which model is best in general?” and more “which model is best for our use case?”

And importantly, with QuickCompare your data stays private rather than being shared publicly.
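To make the "best for our use case" framing concrete, here is a small Python sketch of choosing a model once quality, latency, and cost are visible side by side. Everything in it is an assumption for illustration: the `Result` fields, the `pick_best` helper, the model names, and the numbers are made up and are not QuickCompare output.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Result:
    model: str
    quality: float          # mean metric score on your data, 0 to 1
    latency_s: float        # mean seconds per request
    cost_per_1k_usd: float  # cost per 1,000 requests


def pick_best(
    results: List[Result], max_latency_s: float, max_cost_usd: float
) -> Optional[Result]:
    """Return the highest-quality model that fits both budgets, or None if none fits."""
    eligible = [
        r
        for r in results
        if r.latency_s <= max_latency_s and r.cost_per_1k_usd <= max_cost_usd
    ]
    if not eligible:
        return None
    return max(eligible, key=lambda r: r.quality)


# Made-up numbers for three hypothetical models.
results = [
    Result("large-model", quality=0.92, latency_s=2.4, cost_per_1k_usd=12.0),
    Result("medium-model", quality=0.88, latency_s=0.9, cost_per_1k_usd=3.0),
    Result("small-model", quality=0.75, latency_s=0.3, cost_per_1k_usd=0.5),
]

choice = pick_best(results, max_latency_s=1.0, max_cost_usd=5.0)
print(choice.model if choice else "no model fits the budget")
```

With these illustrative budgets, the highest-scoring model overall is excluded for being too slow and too expensive, which is the kind of trade-off a general leaderboard does not show.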

Krisp

Interesting! This could be really useful for our research team. Our pipelines are usually a mix of different models.

QuickCompare by Trismik

@asti_pili Thanks, we really appreciate that. We’ve seen the same pattern: different models often make sense in different parts of a pipeline. We designed QuickCompare to help teams surface those choices more systematically instead of relying on guesswork. I'm curious: how are you evaluating those trade-offs today?

Great project idea. I actually need to compare which AI services can analyze a LinkedIn profile at the lowest cost.

QuickCompare by Trismik

@natalia_iankovych Thanks! Love that use case. Are you testing this on a specific dataset or just prompts for now? Interested in how you’re thinking about cost vs quality when you compare models.

@alice_pernthaller The employee who handles this will be testing it tomorrow. Until now, they have tested each AI separately by hand.

Just tried it on a prompt I've been wrestling with for weeks - seeing GPT, Claude, and Gemini side-by-side immediately settled an argument I was having with myself. Nice UX too. Best of luck on PH today!

QuickCompare by Trismik

@katarzyna_krynska Thanks, we really appreciate it. Glad that the side-by-side LLM comparison and UX were helpful!

CatDoes

Great launch! 🚀 Have to try it out for optimizing our LLM-based flows at CatDoes.

QuickCompare by Trismik

@mahdi_nouri Thanks so much - we really appreciate your support! Let us know how it goes. We’d love to hear what matters most for your team when you compare LLMs, whether that’s quality, latency, inference cost, or something else.