Hey, I'm Sacha, co-founder at @Edgee

Over the last few months, we've been working on a problem we kept seeing in production AI systems:

LLM costs don't scale linearly with usage; they scale with context.

As teams add RAG, tool calls, long chat histories, memory, and guardrails, prompts become huge and token spend quickly becomes the main bottleneck.
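To make the "scales with context" point concrete, here is a back-of-the-envelope sketch. It assumes every request resends the full history and that each turn adds a fixed number of tokens. It ignores prompt caching and model output, so the numbers are illustrative and not a billing model.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int, system_tokens: int = 0) -> int:
    """Total input tokens across a session when each request resends
    the system prompt plus every previous turn (simplifying assumption)."""
    total = 0
    history = system_tokens
    for _ in range(turns):
        history += tokens_per_turn  # new content appended to the context
        total += history            # the whole context is sent as input again
    return total


ten = cumulative_input_tokens(turns=10, tokens_per_turn=1_000)
twenty = cumulative_input_tokens(turns=20, tokens_per_turn=1_000)
print(ten, twenty)  # 55000 210000
```

Under these assumptions, doubling the number of turns nearly quadruples the input tokens, which is why trimming the context pays off more the longer a session runs.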

So we built a token compression layer designed to run before inference.

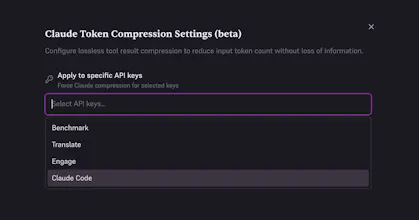

❤️ Today, we're launching the @Edgee Claude Code Compressor.

I want to show you what it does with a real-world test scenario, so I recorded this video.

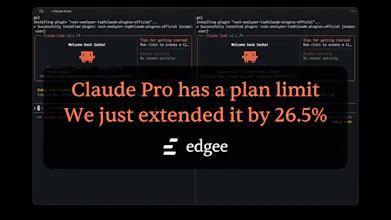

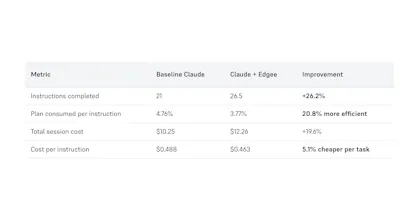

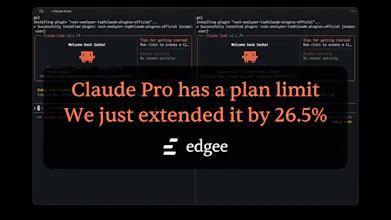

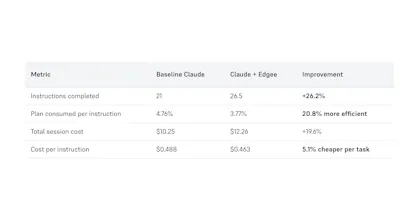

I created two separate Claude Code sessions, each connected to a dedicated plan. Same codebase, same task, same instructions: one side standard Claude Code; the other routed through Edgee with compression enabled.

Left side stops at 21 instructions. Right side reaches 26.5.

That's roughly 26% more session before hitting your plan limit.
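For anyone who wants to check the arithmetic, the relative gain from the two instruction counts above works out like this (the variable names are just for illustration):

```python
baseline = 21.0     # instructions completed with standard Claude Code
compressed = 26.5   # instructions completed when routed through Edgee

# Relative gain = extra instructions divided by the baseline.
gain = (compressed - baseline) / baseline
print(f"{gain:.1%}")  # 26.2%
```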

Here's how it works: Edgee sits between Claude Code and the Anthropic API. Before each request is sent, it strips redundant context, deduplicates instructions, and sends a leaner prompt. Claude sees less noise. You get more range.
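To give a feel for the "deduplicates instructions" step, here is a minimal sketch. It is not Edgee's actual implementation: the `dedupe_messages` helper and the role/content message shape are assumptions made for illustration, and real compression would need to be far more careful about what counts as redundant.

```python
import hashlib


def dedupe_messages(messages: list[dict]) -> list[dict]:
    """Keep the first occurrence of each (role, normalized text) pair.

    Hypothetical helper: normalization here only collapses whitespace
    and lowercases, so it catches exact repeats and nothing subtler.
    """
    seen: set[tuple[str, str]] = set()
    kept = []
    for msg in messages:
        normalized = " ".join(msg["content"].split()).lower()
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        key = (msg["role"], digest)
        if key in seen:
            continue  # same instruction already present earlier in the context
        seen.add(key)
        kept.append(msg)
    return kept


messages = [
    {"role": "system", "content": "Always run the tests before committing."},
    {"role": "user", "content": "Fix the failing login test."},
    {"role": "system", "content": "Always  run the tests before committing. "},
    {"role": "user", "content": "Now update the changelog."},
]
leaner = dedupe_messages(messages)
print(len(messages), "->", len(leaner))  # 4 -> 3
```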

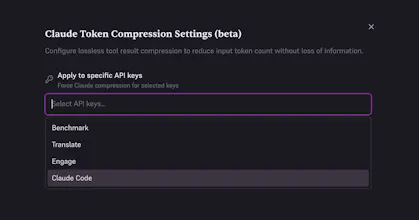

To install: `curl -fsSL https://install.edgee.ai | bash`

Then: `edgee launch claude`

That's it. Free. Takes 30 seconds to set up.

If you're a Claude Code user who's hit the plan wall mid-task, this is for you. If you're running Claude on Anthropic's API and watching your token bill grow, this is also for you.

We've been in beta for a few weeks. Today it's out for everyone.

neat product - keep up the great work, @sachamorard and team 👏👏

You rock, @fmerian! Thank you very much for supporting and highlighting this incredible feature.

@shivam1337 Absolutely not, on the contrary. Compression for Claude Code applies to tool results, which are very often too verbose. For example, when the model asks Claude Code to run `git log`, it doesn't need every detail of the output. Our compressor strips out the noise.
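As a rough illustration of the `git log` example, the sketch below trims default `git log` output down to a short hash and subject per commit. The `compress_git_log` function is hypothetical and only shows the general idea. It is not how the Edgee compressor works internally, and `git log --oneline` already produces a similar result natively.

```python
def compress_git_log(output: str) -> str:
    """Reduce default `git log` output to '<short hash> <subject>' lines."""
    lines_out = []
    short_hash = None
    for line in output.splitlines():
        if line.startswith("commit "):
            short_hash = line.split()[1][:7]
        elif line.startswith("    ") and short_hash is not None:
            # The first indented line after a commit header is the subject;
            # later indented lines (the body) are skipped.
            lines_out.append(f"{short_hash} {line.strip()}")
            short_hash = None
    return "\n".join(lines_out)


sample = """commit 1a2b3c4d5e6f7a8b9c0d1e2f3a4b5c6d7e8f9a0b
Author: Jane Doe <jane@example.com>
Date:   Mon Jan 1 10:00:00 2024 +0000

    Fix login redirect

    Longer explanation of the change.

commit 9f8e7d6c5b4a39281706f5e4d3c2b1a098765432
Author: John Roe <john@example.com>
Date:   Sun Dec 31 09:00:00 2023 +0000

    Add changelog entry
"""
print(compress_git_log(sample))
```

Author, date, and commit body are dropped here, which is only safe when the model asked for an overview. A real compressor would have to decide per request which fields matter.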

Would be great to see a breakdown or visualization of what’s being removed vs kept. That could help build trust in the compression layer.

Hit that Claude limit mid-flow way too many times 😅 this kind of compression feels like a simple fix that actually saves real time + money.

@allinonetools_net Simple and efficient: just a CLI install (with brew or curl), then `edgee launch claude`, and that's it. You save up to 50% on token costs :)

Interesting that the fallback sends the original prompt when BERT score is too low. Smart safety net. One thing I'd watch though: Claude Code already runs its own context compression internally, and there are known issues where that causes it to lose track of CLAUDE.md instructions. Adding another compression layer on top might amplify that. Have you tested how the two interact?
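The fallback described in the comment above can be sketched as a simple threshold check. This is an assumption-heavy illustration: it swaps BERTScore for `difflib.SequenceMatcher` from the Python standard library, which measures character-level overlap and not semantic similarity. The `choose_prompt` name and the 0.6 threshold are hypothetical.

```python
from difflib import SequenceMatcher


def choose_prompt(original: str, compressed: str, threshold: float = 0.6) -> str:
    """Return the compressed prompt only if it stays close enough to the original.

    Stand-in similarity: character-level ratio in [0, 1], not BERTScore.
    """
    similarity = SequenceMatcher(None, original, compressed).ratio()
    return compressed if similarity >= threshold else original


original = "Please run the full test suite and report any failures in the auth module."
good = "Run the full test suite and report failures in the auth module."
bad = "Run tests."

print(choose_prompt(original, good) == good)     # True: compression accepted
print(choose_prompt(original, bad) == original)  # True: falls back to the original
```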

Using Edgee already, really great product.

Super simple idea but actually makes a difference on costs

More tokens, fewer plan interruptions 🙌

@maxwell_timothy Thanks a lot. Don't hesitate to try it. It's 100% free.