Sudo is a unified API for LLMs: the faster, cheaper way to route across OpenAI, Anthropic, Gemini, and more. One endpoint for lower latency, higher throughput, and lower costs than alternatives. Build smarter, scale faster, and do so with zero lock-in.

Sudo AI

👋 Hey Product Hunt!

I’m Ventali — I’ve been building in AI since before GPT-3, and one thing has always bugged me: the AI development stack is fragmented and clunky.

• Inference is expensive (and unpredictable).

• Managing context data and memories is complicated.

• Billing end-users requires complex tracking.

• There are very few ways to monetize.

So we built Sudo:

⚡ One API to route across top models — faster routing, lower costs, zero lock-in

💽 Context management system (CMS) to turn AI apps into stateful, knowledgeable, memory-aware agents

💳 Real-time billing (usage, subscription, or hybrid) — we will charge your end-users for you

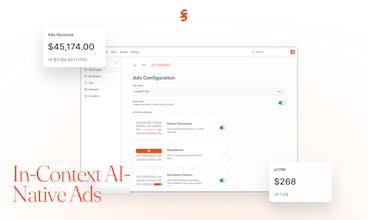

📈 Optional AI-native ads — contextual, policy-controlled, and personalized
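To make the three billing modes mentioned above (usage, subscription, or hybrid) concrete, here is a minimal sketch of how an end-user charge could be computed under each one. This is not Sudo's API: the `Plan` class, its field names, and the prices are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    """Hypothetical pricing plan; field names are illustrative, not Sudo's."""
    monthly_fee: float = 0.0          # flat subscription fee, in dollars
    included_tokens: int = 0          # tokens covered by the flat fee
    price_per_1k_tokens: float = 0.0  # rate for tokens beyond the allowance


def monthly_charge(plan: Plan, tokens_used: int) -> float:
    """Return the end-user's charge for one billing period."""
    # Only tokens beyond the included allowance are billed per use.
    billable = max(0, tokens_used - plan.included_tokens)
    usage_cost = billable / 1000 * plan.price_per_1k_tokens
    return round(plan.monthly_fee + usage_cost, 2)


# Three assumed plans, one per billing mode.
usage_only = Plan(price_per_1k_tokens=0.02)
subscription = Plan(monthly_fee=10.0)
hybrid = Plan(monthly_fee=5.0, included_tokens=200_000, price_per_1k_tokens=0.02)

for name, plan in [("usage", usage_only), ("subscription", subscription), ("hybrid", hybrid)]:
    print(name, monthly_charge(plan, tokens_used=500_000))
```

A hybrid plan here is simply a flat fee plus metered overage, so the same function covers all three modes by leaving the unused fields at zero.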

The beta is live now with routing + dashboards. Context + billing + ads will be switched on during the beta window.

✨ To thank early testers, we’re giving a 10% bonus on credits purchased, as well as $1 of free credits when you first sign up so you can test the API immediately.

We’d love your feedback:

• What feels clunky?

• What’s missing for your use case?

• What would make Sudo a true drop-in for you?

🔑 Get a dev key: https://sudoapp.dev

📚 Docs: https://docs.sudoapp.dev

💬 Discord: https://discord.gg/UbPf5BgrfK

@ventali Congratulations on the launch. I'm just curious, are there any significant differences between Sudo & OpenRouter?

Sudo AI

@thepmfguy Hi Gaurav - nice to meet you and great question! Sudo and OpenRouter are both unified APIs for LLMs. The big differences are:

• Performance & cost → we benchmarked Sudo directly against OpenRouter, and Sudo delivers faster routing + lower cost across models like DeepSeek, GPT-5-nano, Grok-4-0709, Gemini-2.0-Flash, Claude-3.7-Sonnet, etc. (benchmarks here: https://github.com/sudoappai/sudo-benchmarks)

• Vertical features → we’re adding context management (so apps can be stateful + memory-aware) and monetization tools (like in-context ads + built-in billing). All through a single API endpoint.

Curious — from your perspective, is performance or developer-experience tooling more important for you right now?

Agnes AI

Awesome product to solve the tedious routing problem... just curious - how does it differ from OpenRouter etc.? There are way too many model router products in the market so far, tbh.

Sudo AI

@cruise_chen Thanks for the thoughtful question! I agree there are a lot of routing products in the market.

The way I see it, OpenRouter is aiming to be the best horizontal player — broad routing coverage, model stats, and distribution. With Sudo, we’re focused on what it means to go vertical: not just routing, but solving the chain of problems developers face when building AI products.

• On routing, we benchmarked directly against OpenRouter and consistently come out faster + lower cost (benchmarks: https://github.com/sudoappai/sudo-benchmarks).

• On context, developers today have to duct-tape LangChain, vectorDBs, and a lot of custom code — which costs time and complexity. We’re making context + memory management native.

We are also thinking about how to help developers monetize their API calls through optional in-context ads etc.

Sudo AI

@cruise_chen In short, we're putting the whole AI developer experience in a single platform! It goes beyond routing.

LiveDocs

I've been following this project for a while, and the vision is exciting! Congrats to the team for shipping!

Sudo AI

@arslnb Arsalan!! Thanks so much for following along and for the kind words 🙏 We’re excited to finally get Sudo into developers’ hands.

Out of curiosity — what’s the part of the product you’d be most excited to try first (faster/cheaper routing, context management, billing, or ads)? Any feedback there would really help us shape the roadmap.

Magiclight

Love the one endpoint for all LLMs idea — super practical. Wishing you a successful launch! Curious: do you plan to expand support to open-source models too?

Sudo AI

@jason123 Yes absolutely — we’re expanding support to open-source models alongside adding multiple providers, and we’re also considering hosting to make access even smoother.

Out of curiosity, which open-source models would you most like to see added first?

@ventali Just 14 days ago, I was working with a SaaS company and ran into a problem: they had to buy a different API for each LLM service, and that was eating into their budget. I was thinking, if only there were an app or service that could provide a single API to access any LLM, and today I see this. It's a brilliant idea to help new SaaS builders use LLM APIs at a low cost. How did you come up with this idea? What is the story behind it?

Sudo AI

@foyjul_i Thanks so much 🙏 That’s exactly the pain point we kept hearing too.

I’d built consumer AI products before, and many of my founder friends in the space would complain about how painful the dev experience was — juggling multiple APIs, high inference costs, and tough conversion economics. That pushed me to think about cost structure + revenue structure as a problem space, not just infra.

Curious — in your SaaS work, was cost the main blocker, or did the integration overhead bite just as much?

@ventali Both of them. In fact, because of the cost issue, we tried many black-hat tactics to get free APIs (which obviously did not work well), and we also had integration issues with so many touchpoints.

I’ve had the chance to work with Ventali and the Sudo team — they’re incredibly competent at what they do.

At Macaron AI, billing and monetization are at the core of building sustainable consumer AI apps. We spend a lot of time thinking about this problem ourselves, and a product like Sudo (one API for all models, plus billing + monetization features) would be a huge unlock. We’ll definitely be trying it out.

Curious to hear from others here — how are you thinking about the monetization problem in AI apps?

Sudo AI

@kaijiechen Really appreciate your perspective — and totally agree, billing + monetization sit at the core of consumer AI apps. That’s exactly why we’re building those layers into Sudo on top of routing.

Out of curiosity — since you’ve spent a lot of time thinking about this at Macaron, what’s been the toughest challenge for you in billing/monetization? (conversion, pricing models, infra?) Would love to compare notes.

YouMind

Congrats on the beta launch — excited to see a unified API tackling routing, cost and context management.

Quick question: what's the latency improvement I can expect when switching from OpenRouter or a single-provider setup, and do you expose per-model routing logs for debugging? Also curious about pricing predictability once billing features go live — will there be rate caps or alerts for end-user charges?

Sudo AI

thanks @jaredl!

On some models, we perform over 50% faster than OpenRouter on the same base provider (OpenAI, Anthropic, etc.). You can see our benchmarks on GitHub.

We do not (currently) have routing logs, but that is a feature we could implement soon. Are inference logs something that you'd be interested in to help your development needs?

There won't be pricing caps: you can set pricing rates to whatever you want, and the end-user will pay the price you define for them. As for usage caps, we are looking into allowing per-API-key rate limits, so you can issue a key for each end-user or agent you deploy, with that key having a hard cap on usage. We would also allow you to set a usage limit for your subscribing users, so that they won't go above the usage amount you allot to them as part of their subscription.

What are your most outstanding demands when it comes to billing end-users?
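For readers wondering what a per-key hard cap like the one described above might look like in practice, here is a rough sketch. It does not reflect Sudo's implementation or API: `KeyUsageLimiter`, `UsageCapExceeded`, and the token-based accounting are assumptions made up for this example, and a real service would persist the counters rather than keep them in memory.

```python
class UsageCapExceeded(Exception):
    """Raised when a request would push a key past its hard cap."""


class KeyUsageLimiter:
    """Hypothetical in-memory tracker: one hard usage cap per API key."""

    def __init__(self) -> None:
        self._caps: dict[str, int] = {}
        self._used: dict[str, int] = {}

    def issue_key(self, key: str, cap_tokens: int) -> None:
        """Register a key (e.g. one per end-user or agent) with its cap."""
        self._caps[key] = cap_tokens
        self._used[key] = 0

    def record(self, key: str, tokens: int) -> int:
        """Charge `tokens` to `key` and return the remaining allowance.

        The request is rejected, and nothing is recorded, if it would
        exceed the cap.
        """
        if key not in self._caps:
            raise KeyError(f"unknown key: {key}")
        new_total = self._used[key] + tokens
        if new_total > self._caps[key]:
            raise UsageCapExceeded(f"{key} would exceed its cap")
        self._used[key] = new_total
        return self._caps[key] - new_total

    def remaining(self, key: str) -> int:
        """Return how many tokens the key can still spend."""
        return self._caps[key] - self._used[key]


limiter = KeyUsageLimiter()
limiter.issue_key("end-user-123", cap_tokens=1_000)
limiter.record("end-user-123", 600)       # allowed, 400 tokens left
try:
    limiter.record("end-user-123", 500)   # would exceed the cap
except UsageCapExceeded as err:
    print("blocked:", err)
```

Rejecting the whole request before recording anything keeps the cap truly hard: a key can never end a billing period above the amount allotted to it.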